The Raspberry Pi has a port for a camera connector, allowing it to capture 1080p video and stream it to a network without having to deal with the craziness of webcams and the improbability of capturing 1080p video over USB. The Raspberry Pi compute module is a little more advanced; it breaks out two camera connectors, theoretically giving the Raspberry Pi stereo vision and depth mapping. [David Barker] put a compute module and two cameras together making this build a reality.

The use of stereo vision for computer vision and robotics research has been around much longer than other methods of depth mapping like a repurposed Kinect, but so far the hardware to do this has been a little hard to come by. You need two cameras, obviously, but the software techniques are well understood in the relevant literature.

[David] connected two cameras to a Pi compute module and implemented three different versions of the software techniques: one in Python and NumPy, running on an 3GHz x86 box, a version in C, running on x86 and the Pi’s ARM core, and another in assembler for the VideoCore on the Pi. Assembly is the way to go here – on the x86 platform, Python could do the parallax computations in 63 seconds, and C could manage it in 56 milliseconds. On the Pi, C took 1 second, and the VideoCore took 90 milliseconds. This translates to a frame rate of about 12FPS on the Pi, more than enough for some very, very interesting robotics work.

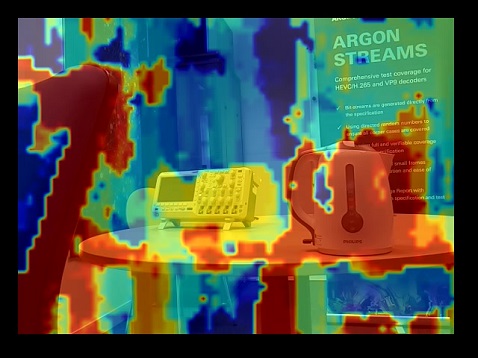

There are some better pictures of what this setup can do over on the Raspi blog. We couldn’t find a link to the software that made this possible, so if anyone has a link, drop it in the comments.