We are all used to malicious software: computers and operating systems compromised by viruses, worms, or Trojans. It has become a fact of life, and a whole industry of virus-checking software exists to help users defend against it.

Underlying our concerns about malicious software is an assumption that the hardware is inviolate, that the computer itself cannot be inherently compromised. It’s a false one though, as it is perfectly possible for a processor or other integrated circuit to have a malicious function included in its fabrication. You might think that such functions would not be included by a reputable chip manufacturer, and you’d be right. Unfortunately, because the high cost of chip fabrication has made the semiconductor industry a web of third-party fabrication houses, there are many opportunities for extra components to be inserted before the chips are manufactured. University of Michigan researchers have produced a paper on the subject (PDF) detailing a particularly clever attack on a processor, one that minimizes the number of components required by using a FET gate in a capacitive charge pump.

On-chip backdoors have to be physically stealthy, difficult to trigger accidentally, and easy to trigger by those in the know. Their designers find a line that rarely changes logic state and attach a counter to it, so that the exploit fires only when they make the line change state a certain number of times, more than would ever happen accidentally. In the past these counters have been traditional logic circuitry, an effective approach but one that leaves a significant footprint of extra components on the chip, for which space must be found and which can become obvious when the chip is inspected through a microscope.
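To make the traditional approach concrete, here is a minimal Python sketch of a counter-based trigger. It is a behavioural toy, not a description of any real backdoor: the class name `CounterTrigger`, the `threshold` parameter, and the helper `flip_flops_needed` are all hypothetical names introduced for illustration.

```python
class CounterTrigger:
    """Toy behavioural model of a digital counter-based trigger.

    All names and values here are illustrative assumptions.
    """

    def __init__(self, threshold: int):
        self.threshold = threshold  # toggles required before the payload fires
        self.count = 0              # contents of the hidden counter register
        self.prev = 0               # last observed level of the victim line
        self.fired = False

    def clock(self, victim_line: int) -> bool:
        """Sample the victim line once; count rising edges."""
        if victim_line == 1 and self.prev == 0:
            self.count += 1
        self.prev = victim_line
        if self.count >= self.threshold:
            self.fired = True
        return self.fired


def flip_flops_needed(threshold: int) -> int:
    """Bits needed just to hold a count up to `threshold`."""
    return threshold.bit_length()


trigger = CounterTrigger(threshold=3)
history = [trigger.clock(level) for level in [0, 1, 0, 1, 0, 1]]
```

The point of `flip_flops_needed` is the footprint: each bit of the count register is at least one flip-flop plus increment and compare logic, which is the extra circuitry an inspector might spot.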

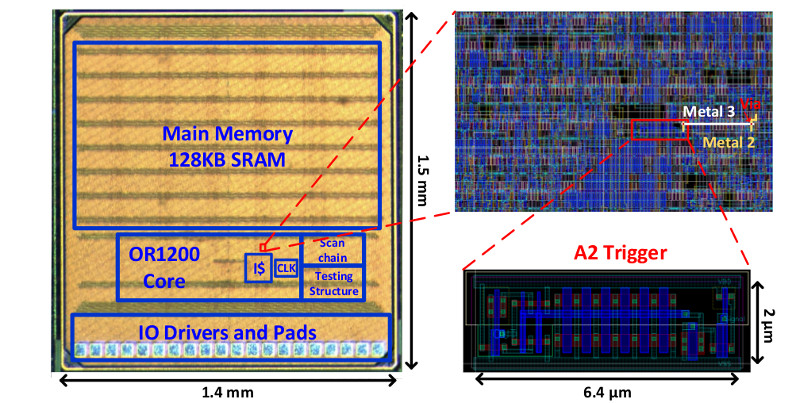

The University of Michigan backdoor is not a counter but an analog charge pump. Every time its input is toggled, a small amount of charge is stored on the capacitor formed by the gate of a transistor, and eventually its voltage reaches a logic level such that an attack circuit can be triggered. They attached it to the divide-by-zero flag line of an OR1200 open-source processor, from which they could easily trigger it by repeatedly dividing by zero. The beauty of this circuit is both that it uses very few components, so it can hide more easily, and that the charge leaks away with time, so it cannot persist in a state likely to be accidentally triggered.
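The accumulate-and-leak behaviour described above can be illustrated with a toy simulation. This is only a sketch: the function name `simulate_charge_pump` and every parameter value (`gain`, `leak`, `v_trigger`) are made-up assumptions chosen for illustration, not figures from the Michigan paper, and the voltage is normalised so that 1.0 stands for the supply rail.

```python
def simulate_charge_pump(toggle_steps, steps, gain=0.1, leak=0.02, v_trigger=0.5):
    """Toy model of a leaky capacitor charged by toggles of a victim line.

    toggle_steps: set of time-step indices at which the victim line toggles.
    Returns the step at which the trigger threshold is reached, or None.
    All parameter values are hypothetical.
    """
    v = 0.0  # normalised capacitor voltage (1.0 = supply rail)
    for t in range(steps):
        if t in toggle_steps:
            v += gain * (1.0 - v)  # each toggle pumps in a little charge
        v -= leak * v              # charge leaks away every step
        if v >= v_trigger:
            return t
    return None


# An attacker hammering the line on every step (e.g. dividing by zero in a loop).
attack = simulate_charge_pump(set(range(200)), steps=200)

# Ordinary software that happens to toggle the line only once every 20 steps.
benign = simulate_charge_pump(set(range(0, 2000, 20)), steps=2000)
```

With these assumed numbers the rapid sequence crosses the threshold after a handful of toggles, while the occasional toggles never do because the leak drains the capacitor between them. That is the property the article describes: no counter register, and no lingering half-armed state.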

The best hardware hacks are those that are simple, novel, and push a device into doing something it would not otherwise have done. This one has all that, for which we take our hats off to the Michigan team.

If this subject interests you, you might like to take a look at a previous Hackaday Prize finalist: ChipWhisperer.

[Thanks to our colleague Jack via Wired]

This is just one more reason why we need to make IC fabrication tools that are inexpensive, so that you can make your own chips if you want. I know it’s a slow, complex, high-precision process, but I’m not looking to make a large number of chips that can compete with the latest x86 chip. It’s something I’m interested in seeing happen.

Better yet, start to move fabrication back to the USA… Most of the cost is capital expenditure anyway.

That is only part of it (from what I understand). The Chinese government actually helps the semiconductor industry, and the cost of labor is lower over there.

Is there really a lot of human labor involved in semiconductor fabrication? It seems like it’s all robots and clean rooms. No place for unskilled labor.

Labor is a minor cost in semiconductor manufacturing, and it’s very automated anyway, so moving the fabs back to the US would have a minimal impact on customers who live in countries that have fair trade agreements with it.

It literally would only be a few cents per chip difference in cost, which would be a small price to pay for more peace of mind.

Of course you’d still have issues with the NSA and CIA, but they have nowhere near the amount of political power in the US that the 3PLA and MSS do in China.

Now assembly of electronic devices, on the other hand, can be labor intensive, so US assembly might drive up the cost of low-end devices noticeably, though high-margin goods like iPhones could easily absorb the added cost and still have insane profit margins at the same prices.

LOL, that’s actually funny.

Considering how capitalistic the people in that country are (CEOs who regularly do ethically or morally questionable, borderline illegal things: see Uber spying on competitors with fake ride requests, see 90% of the current American gov’t, see health care costs), what makes you think Americans wouldn’t INTENTIONALLY put this in there?

This.

Plus, US made processors would probably have DRM and subscription built in.

“Welcome to your new $500 processor. Now select your subscription: For only $25/m you can purchase 5 million instruction executions…”

That would not make the chips more trustworthy. In the USA the NSA can order you to do anything, including to stay silent about it. Why should I trust the USA more than China? Perhaps it would help to move “back” to Europe; we don’t have such omnipotent spy agencies. They spy here, but at least we cannot be ordered to keep silent if we notice it.

Britain

A while back you could draw up a schematic with 7400 series parts and RCA would make you a chip.

Thinking about everything involved, maybe not the single crystal wafers, you could probably fab 50-year-old silicon, maybe even 40-year-old. There is a reason that most fab plants are located in, ideally remote, geographical locations with large granite bedrock. But if you were not aiming at a 10 nm process technology, but were instead aiming at a 10000 nm process (0.01 mm), it is probably possible. But power requirements would be much higher. Cooling is kind of interesting: the larger area would help reduce cooling requirements, but you would still have I^2 x R, with bigger currents and lower resistances. The bigger currents would be due to having physically larger components, unless you drop the clock speed. It takes a lot more power to charge up a physically large capacitor in the same time than it does to charge up a tiny one.
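The capacitor argument above is the usual switching-power relation for CMOS, P = a·C·V²·f. Here is a minimal Python sketch of it. The function name `dynamic_power` and the capacitance, voltage, and clock values are illustrative assumptions, not measurements of any real process.

```python
def dynamic_power(c_farads, v_volts, f_hz, activity=1.0):
    """Switching power of a node: P = activity * C * V^2 * f.

    `activity` is the fraction of clock cycles on which the node switches.
    """
    return activity * c_farads * v_volts ** 2 * f_hz


# Hypothetical node capacitances, same supply voltage and clock for both.
small_node = dynamic_power(c_farads=1e-15, v_volts=5.0, f_hz=1e6)  # 1 fF
large_node = dynamic_power(c_farads=1e-12, v_volts=5.0, f_hz=1e6)  # 1 pF
ratio = large_node / small_node
```

At a fixed voltage and clock, power scales linearly with capacitance, so the assumed thousandfold larger capacitor costs a thousand times the switching power. Dropping the clock frequency `f_hz` is the lever the comment mentions for clawing that back.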

You can buy 6″ single side polished, single crystal wafers, from China.

“we need to make IC fabrication tools that are inexpensive”

I agree completely. Get on it immediately.

The design tools can be had, but as you state, the fab is another beast. There are shuttle runs that allow you to piggyback on the same wafer with many other chips. This distributes the cost across the various users willing to run the process. It is still not exactly low cost, and only makes sense if you want to commercialize something you have designed that has been thoroughly simulated.

Seems like refining this fine lady’s process might lead somewhere:

http://hackaday.com/2010/03/10/jeri-makes-integrated-circuits/

Why should you make your own chip if you are not actually a company? I mean from a practical point of view.

If you mean for learning, that can be done without physically making a chip. This field of study never starts from hands-on tinkering; that simply does not make sense, given complexities that require a proper background.

Maybe FPGA and open processor cores. Definitely not the latest and the greatest, but you know what you have in it. https://opencores.org/

So, the lesson is, as always, “never trust”.

“Reflections on Trusting Trust”, indeed.

Intel has a “Management Engine” that is a hardware level backdoor:

http://www.techrepublic.com/article/is-the-intel-management-engine-a-backdoor/

It’s safe to assume every chip has something similar, whether placed there knowingly or by shadowy operatives.

Not true at all. If you stick to big fabs, they have a reputation to uphold. If a backdoor is *FOUND* in silicon that they made, their reputation is ruined and people will avoid them in the future, sinking the business.

Plus, it takes time and effort to put a backdoor in something. Fabs don’t get netlists — they get GDS files, which is kind of like a gerber file for boards (but without the helpful text of a silk screen). In order to attack a chip (in the example of the “divide by zero” flag), you first have to identify which line is divide by zero — not an easy task when you have millions of nets. Then, you have to have the attack do something useful, such as go to supervisor mode (which means that you have to find that wire too).

This is certainly possible given an attacker with enough resources and time, but it is far from easy.

This kind of thing could not happen at a place like Intel, as they own their own fabs and don’t use places like TSMC or UMC.

Thanks for your response, I didn’t really know how this worked in the “real world,” and your credentials are noteworthy.

This is definitely only in the realm of a nation/state level actor. That being said, application of Occam’s Razor says that no OS/browser/program is completely secure, so why would they invest hundreds of thousands of hours and significant manpower to making a viable hardware backdoor when they can just use the latest 0-day and own the entire box that way?

Because a hardware backdoor is persistent, virtually undetectable, and impossible to remove. Security holes in software can be patched, but you can’t do that to hardware.

While this isn’t technically a hardware exploit, Sprite_tm has pulled off a firmware hack on hard drives and shown how useful it can be to an attacker (https://spritesmods.com/?art=hddhack). Now imagine a backdoor that can’t be fixed by a firmware upload.

Ejem. https://plus.google.com/117091380454742934025/posts/SDcoemc9V3J

https://en.wikipedia.org/wiki/RdRand#Reception

In September 2013, in response to a New York Times article revealing the NSA’s effort to weaken encryption, Theodore Ts’o publicly posted concerning the use of RdRand for /dev/random in the Linux kernel:

Are you sure?

RdRand is pseudo random and has been crunched to find its natural backdoors! For gaming that’s nothing to worry about, for encryption, get a hardware RNG. Low output ones can be diy’d. Open source, higher output ones can be bought.

I saw this paper (or a VERY similar one) at least a year ago, perhaps even 2…

?

1) On arguing reputation, history repeats itself and as long as reputation can be restored trust will be abused. Possible means of restoring trust: shifting blame, flat-out denial, obfuscated impossibility “proofs”, lack of a provably trustworthy competitor to switch to, etc …

2) Given the masks, it’s straightforward to recover the logic in terms of gates. Given the logic gates and the IO pads, the processor can be emulated by giving it instructions. Some instructions require supervisor privilege, so what you do is this: you emulate the state machine recovered from the mask files and run 2 programs:

A)

starting in supervisor privilege level from boot

run instruction that requires supervisor privilege level

B)

starting in supervisor privilege level from boot

lower privilege level << ADDED

run same instruction that requires supervisor privilege level

by comparing the 2 evolutions:

from the emulated voltages on all lines you will have drastically narrowed down the candidate lines that contain the supervisor state bit(s). Before the final instruction is executed, save the state of the processor, and then for each line of the narrowed-down list: toggle that line alone, and let the processor continue

similarly you can find and identify the nodes that correspond to other state variables in the finite state machine..

The hard part is not the identification, but considering and comparing all the bits whose conductors pass close by the conductor of this privilege bit to select which activation signal would be best to use…
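The two-program comparison described above can be sketched in a few lines of Python. Everything here is a toy under stated assumptions: the net names (`n1`, `n7`, …), the snapshots, and the stand-in emulator `toy_run_from` are invented for illustration. A real attempt would need an actual gate-level emulator recovered from the masks, which this sketch does not provide.

```python
def candidate_nets(snapshot_a, snapshot_b):
    """Nets whose value differs between run A (privileged) and run B (lowered)."""
    return sorted(net for net in snapshot_a if snapshot_a[net] != snapshot_b[net])


def find_privilege_nets(snapshot_b, candidates, run_from):
    """Flip one candidate net at a time in run B's saved state and resume.

    `run_from(state)` must return True if the privileged instruction succeeds.
    """
    hits = []
    for net in candidates:
        state = dict(snapshot_b)  # fresh copy so each flip is tested alone
        state[net] ^= 1           # toggle that line alone
        if run_from(state):
            hits.append(net)
    return hits


def toy_run_from(state):
    """Stand-in emulator: in this toy, net 'n42' is the supervisor bit."""
    return state["n42"] == 1


# Saved states just before the privileged instruction (hypothetical values).
snapshot_a = {"n1": 0, "n7": 1, "n42": 1, "n99": 0}  # still in supervisor mode
snapshot_b = {"n1": 0, "n7": 0, "n42": 0, "n99": 0}  # privilege was lowered

candidates = candidate_nets(snapshot_a, snapshot_b)
privilege_nets = find_privilege_nets(snapshot_b, candidates, toy_run_from)
```

The diff step shrinks the search from every net to the few that differ, and the flip-and-resume step separates the real privilege bit from nets that merely changed as a side effect (`n7` in this toy).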

"This kind of thing could not happen at a place like Intel, as they own their own fabs and don’t use places like TSMC or UMC."

Thanks for restoring our trust already :)

Only if you redefine what a backdoor is. And the ME is mostly software.

Intel could use it as a backdoor, it would effectively kill them if they did though. Could other entities do that? Well theoretically, the US government could force Intel to do it but likely wouldn’t. Killing an important innovator, manufacturer, sponsor of research, exporter of goods and job provider isn’t a good idea.

Others then? Intel uses a multi-level security model with encryption and verification in hardware (and probably software) with a tightly controlled key infrastructure. Over that they add a security-by-obscurity level (which is proper if not used alone) in that the security features aren’t discussed. What is known of the security design is by reverse engineering, which hasn’t detected any weaknesses BTW.

Why would the NSA need to “kill” Intel to get at the IME? For all we know, Intel’s high-ups and the Men In Black are all in the same sinister club together. The rich and the powerful often have the same interests and goals, or at least have many ways in which they can come to an arrangement.

More than that, what’s the legitimate use for the IME? Why does it need to be so powerful? Nobody could ask for a better backdoor. And if they’ve gone to the trouble of including it, somebody probably has asked.

It would only “kill” Intel if people found out about it, and had undeniable proof. The public could be easily made to accept it, with some lubricating bullshit about terrorism. And even if they didn’t, what are they going to do, buy PC CPUs that don’t have backdoors? Who from?

Currently, by its nature, computer hardware is something that only billion-dollar companies can do, and generally speaking giant corporations and ordinary people have their interests aligned in different directions.

Intel and AMD both have what could be termed “backdoor” CPUs

https://libreboot.org/faq.html#amd

https://libreboot.org/faq.html#intel

But the real purpose of these processors is to basically lie. Under the hood neither CPU actually runs the x86/x64 instruction set, it is too inefficient; the CPU is there to tell you what you were sold is what is inside the actual package. Let’s say that a fab only makes 32-core CPUs with a 90% failure rate; they can then sell these failed parts as perfectly functioning 8-core processors. To do this they need some device that (1) the user cannot modify and (2) can be patched later.

There is a very good CCC talk from 2015 called “32c3 7171 en de When hardware must just work” (a search engine is your friend) that indirectly explains why these secret non-usable CPUs exist. Their core function is to generate profit. Can they be used by spy agencies? On paper yes; in reality, for US-based companies, *ponder*.

No, the “management engine” isn’t a normally-accessible CPU. Its specs are secret (though AMD’s is supposed to be an ARM, I think I heard), so nobody writes code for it apart from Intel / AMD. Nobody can tell what it’s doing, and it has access to all hardware, so could easily be programmed to, say, accept incoming commands through the network, and return dumps of any chunk of memory you like. It’s utterly a backdoor, it’s a backdoor with a big red carpet and complimentary tea or coffee.

That’s nothing to do with them selling failed 8-cores as 4-cores, etc. That’s been going on since overclocking was first invented, selling failed 166MHz chips as 133MHz chips. Except the yields got too good, they had too many of the expensive 166s, so they marked them down and sold them as mid-range 133s. People noticed this and started trying higher clocks.

But selling failed 8-cores as 4-cores doesn’t require a full CPU with full hardware access. You can do it just by lasering off a couple of links, or a couple of bits of PROM, or whatever. The “management engine” is nothing to do with that. Two separate issues.

Your other point about modern CPUs emulating x86 instructions is relevant to neither of those, so it’s a third! It also goes back years, to the NexGen Nx586, which I think AMD bought up, way back when. Was a thing in Pentiums for a few years. I had heard that, since they’d hit speed limits but had more and more transistors available, they’d gone back to native execution. When clock speed is your limit, CISC is better. Normally it’s CISC architecture that limits clock speed in the first place, but now it’s physical limits.

Maybe they’ve switched back since then, I dunno.

I never said that it was a “normally-accessible CPU”, it is only accessible to Intel, AMD and because both are US companies any secret FISA court orders.

PROM does not fit the bill: it is too easily hacked, and if you make the PROM complex enough to validate security certificates, it eventually starts to look more like a hidden CPU. And laser cutting tracks would be similar to fixing a PCB by cutting tracks; it is better than nothing, but functionality similar to an FPGA, which could mean higher yields, would be a better solution. And when you have spent $3 million to get the first chip off the line, you want more low-cost flexibility in getting the actual product to ship, versus dumping it and spending another $3 million on a v2 of the chip.

I’m not saying that they are good, I’d like them gone as well, but I can see why they exist. In Privacy vs Profit, both companies choose profit.

Another reason to keep things simple ™ so that trickery can be detected. Once you are up above a few million gates, good luck.

Yeah, I kind of favor using as simple a circuit as possible to get the job done.

If you only need an 8-bit uC to do something then go with it.

Usually bad things happen when you attempt to divide by zero…

Cool hack.

Wonder how common this backdoor scheme is. Is it really everywhere!?

“Black holes are where God is dividing by zero”

Only without calculus.

I think it is nowhere, it is a proof of concept

Wow, hardware attacks are so much more impressive!

I argued this point when they were talking about voting machines. It would be so simple to put these types of exploits into the voting machines, ones that could be triggered without direct access to the machine, for example by sending a signal into the power line of a building with voting machines in it. At that point the sky is the limit. And considering that a person involved with writing exploits into the machines testified as such in front of Congress, it is likely these things exist in many things we are not aware of.

It’s insane you even HAVE voting machines, never mind ones that are pretty likely to be corrupt. With hand counting of paper ballot slips, if someone tampers with the results they can be found out and arrested. Each batch can be counted by more than one person to check for it.

The old fashioned way gets Britain results within a few hours.

It’s depressing that somebody can testify to Congress that “yep, we might’ve rigged the election a little bit”, and everyone carries on as usual. Modern politics in some rich countries is unbelievably corrupt.

Yep, as I recall the person testifying said they could swing any election by 1 to 2 points without anyone knowing. That’s a comforting thought. Didn’t Al Franken win on a “recount”? It’s funny, every time they do a recount the count becomes just a little bit closer. Unless you’re Detroit, then you run the ballots through 2 or 3 times. /s

https://www.youtube.com/watch?v=VJsMLPGg4U4

Not sure if you could test for this sort of thing with formal verification tools, but this open-source verification tool might be applicable for finding these types of undesirable states if you could properly specify or describe the glitch.

This is an issue with open source silicon. Since anyone with a chip fab capable of the required process can make copies of the chips, any of them could add extra stuff and the buyers will be none the wiser as long as the chips work as designed.

This is no different from using pre-compiled binaries of open source software. Would you trust the Debian project? What about smaller, potentially less tightly run software repositories, like Ubuntu’s PPAs? Any of it could be compromised somehow…

Always compile from source, on hardware made from relays you wound yourself, from copper you refined and mined yourself, from land you own, and make sure nobody’s watching you when you sign the deed. Then just do it on paper anyway, it’s too risky.

“You might think that such functions would not be included by a reputable chip manufacturer, and you’d be right.”

That is unsubstantiated and logically flawed, they are only reputable because they have not been busted doing it, if they are doing it, and there is no validation method available for the current generation of billion plus transistor chips to prove the point either way. All it would take is a mod to the instruction pipe-line area to recognise a long instruction-data string that acted as a key to activate an undocumented feature, a key that was long enough and improbable enough (not likely to be output from a sane compiler) to avoid being accidentally triggered or even found by brute force methods.
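How improbable a long key is can be put into rough numbers. The Python sketch below assumes, unrealistically, that the instruction stream looks like uniformly random independent bits, and it uses a simple union bound. The function name `accidental_trigger_bound` and the example figures are hypothetical.

```python
from fractions import Fraction


def accidental_trigger_bound(key_bits, windows_examined):
    """Upper bound on the chance that random data ever matches the key.

    Assumes each examined window matches with probability 2**-key_bits.
    The union bound over all windows gives windows * 2**-key_bits.
    """
    per_window = Fraction(1, 2 ** key_bits)
    return min(Fraction(1), per_window * windows_examined)


# A short 8-bit key checked once, versus a 128-bit key checked 10**18 times.
short_key = accidental_trigger_bound(8, 1)
long_key = float(accidental_trigger_bound(128, 10 ** 18))
```

Under these assumptions the 128-bit key stays far below any realistic chance of a stray match even after 10^18 windows. Real code is not random, so a key built from instruction sequences no sane compiler emits would be rarer still, which is the commenter’s point.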

True, but the weirder a sequence you need to unlock your backdoor, the more likely the circuitry is to show up to anyone investigating. Unfortunately it’s still probably impossible: you’d need some sort of atomic force microscope to even see the transistors, and then a giganormous supercomputer to try to decipher what’s actually going on, to extrapolate higher function from those transistors. And the software to do so, which I’m pretty sure doesn’t exist. And the money, and the motivation. And that’s for each chip.

This paper is just a suggestion for a subtle kind of backdoor. You don’t need it, since more complex backdoors are unlikely ever to be detected, since nobody’s looking for them. And besides all that, all x86 chips have an on-purpose backdoor straight from the factory.

If anything does come from this new idea, it’s blackhats who benefit. Having a new thing to look out for, which we’re helpfully told is almost invisible, and besides that nobody’s even looking, isn’t going to help security much. At least with software vulnerabilities they can be patched quickly. The only response to this can be “oh, shit.”

[ “You might think that such functions would not be included by a reputable chip manufacturer, and you’d be right.”

That is unsubstantiated and logically flawed, they are only reputable because they have not been busted doing it, if they are doing it, and there is no validation method available for the current generation of billion plus transistor chips to prove the point either way. ]

So now we are saying everyone is a crook, even if we can’t prove it? An honest mistake in silicon (aka BUG) must actually be interpreted as malicious now? Should we bring every IC manufacturer who posts errata sheets about their products to criminal court?

Do we now consider that everyone is a criminal because they just haven’t been caught? So you are a criminal, in your own home, and you only get to stay there because you haven’t been caught doing crimes yet… but of course… you are doing crimes… right? Everyone does! Is that what this is all leading to? Sheesh.

Also, maybe we should take off the conspiracy hats. We have governments that can barely agree on healthcare, taxation and spending policies, and we assume they are organized enough to make sure everything has a proper backdoor? Ummm… I think more proof is needed before we assume that level of cooperation.

There are more intelligent options to deal with this very real paradox, it is telling that you are incapable of listing any of them, even the ones already discussed on HAD.