A well-known property of wall warts like power bricks and USB chargers is that they always consume some amount of power even when there’s no connected device drawing power from them. This feels rather wasteful when you have a gaggle of USB chargers constantly plugged in, especially on a nation-sized scale. This is where a new USB-C wall charger by Belkin, the BoostCharger Pro, is interesting, as it claims ‘zero standby power’, which sounds pretty boastful and rather suspect. Fortunately, [Denki Otaku] saw fit to put one to the test and even tear one down to inspect the work of Belkin’s engineers.

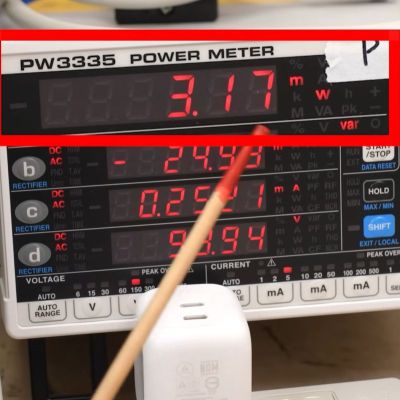

Naturally, no laws of physics were harmed in the construction of the device, as ‘zero standby power’ translated from marketing speak simply means ‘very low standby power usage’: about 3 milliwatts, with 0.3 mA drawn at the applied 100 VAC.

Fascinatingly, plugging in an e-marker-equipped USB-C cable with no device on the other end caused this standby usage to increase to about 30 mW, clearly disabling the ‘zero standby’ feature. With that detail noted, it was time to tear down the charger, revealing its four PCBs.
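To put the two standby figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes the charger sits idle around the clock for a full year, and that the quoted 100 VAC and 0.3 mA are RMS readings taken at the same time as the 3 mW measurement. Neither assumption comes from the test itself, and the function names are purely illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def annual_energy_wh(standby_mw: float) -> float:
    """Watt-hours used in one year at a constant standby draw (in mW)."""
    return standby_mw / 1000 * HOURS_PER_YEAR


def apparent_power_mva(volts_rms: float, milliamps_rms: float) -> float:
    """Apparent power in millivolt-amperes from RMS voltage and RMS current."""
    return volts_rms * milliamps_rms


# Figures quoted in the article.
zero_standby_wh = annual_energy_wh(3)    # 'zero standby' mode, about 3 mW
with_cable_wh = annual_energy_wh(30)     # e-marker cable attached, about 30 mW

# Assumption: 100 VAC and 0.3 mA are simultaneous RMS readings.
apparent_mva = apparent_power_mva(100, 0.3)
implied_power_factor = 3 / apparent_mva  # real power / apparent power

print(f"Zero standby:  {zero_standby_wh:.2f} Wh per year")
print(f"E-marker cable: {with_cable_wh:.2f} Wh per year")
print(f"Apparent power: {apparent_mva:.1f} mVA, "
      f"implied power factor: {implied_power_factor:.2f}")
```

Under those assumptions, the gap between 100 V × 0.3 mA and the quoted 3 mW would be explained by a low power factor: most of the measured current would be reactive rather than doing real work. Either way, both standby modes add up to well under a kilowatt-hour per charger per year.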
