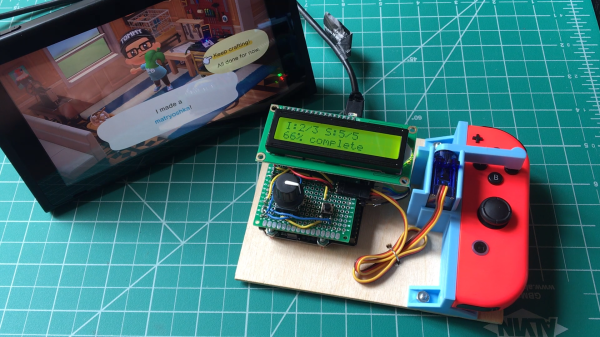

Press button, wait, press button again, repeat. There must be a better way! If that kind of interaction drives you nuts, you’ll probably appreciate [Tommy]’s buttonpusher, which has only one job: automate away some of the more boring parts of Nintendo’s Animal Crossing. On one hand, the job the device does is very simple: press a button on the Nintendo Joy-Con in a preprogrammed pattern. There’s no feedback loop; it just dumbly presses and waits. On the other hand, there are still quite a few interesting bits to this build.

For one thing, [Tommy] discovered that a little 9g RC servo can reliably exert enough force to press the button on the Joy-Con, given the right adapter. He had assumed the servo would be too weak to do the job without a greater mechanical advantage, but a simple hammer-style actuator that attaches to the servo horn easily does the job. Well, it does as long as the servo and Joy-Con are held rigidly; his first version left a little too much wiggle between the parts, and button presses didn’t quite register. With a 3D-printed fixture to rigidly mount both the servo and the Joy-Con, things were fine.
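To make the "dumbly presses and waits" idea concrete, here is a minimal sketch of open-loop pattern playback. It is not [Tommy]’s code: the function name, the angles, and the timings are all made up for illustration. The only thing it assumes about the servo is a writable `angle` attribute, which is how CircuitPython’s `adafruit_motor.servo.Servo` objects are driven. A stand-in `FakeServo` class lets it run on a desktop without hardware.

```python
import time


def run_pattern(servo, pattern, rest_angle=0, press_angle=30, sleep=time.sleep):
    """Play back a fixed list of (hold_seconds, wait_seconds) button presses.

    There is no feedback: the servo swings to press_angle, holds, returns to
    rest_angle, and waits, regardless of what the game is doing.
    The angle values are placeholders, not measurements from the real build.
    """
    for hold_s, wait_s in pattern:
        servo.angle = press_angle  # swing the hammer onto the button
        sleep(hold_s)
        servo.angle = rest_angle   # lift off the button
        sleep(wait_s)


class FakeServo:
    """Hypothetical stand-in that records every angle it is told to move to."""

    def __init__(self):
        self.history = []
        self._angle = None

    @property
    def angle(self):
        return self._angle

    @angle.setter
    def angle(self, value):
        self._angle = value
        self.history.append(value)


# Two presses, with the sleeps replaced by a no-op so the demo finishes instantly.
demo = FakeServo()
run_pattern(demo, [(0.1, 0.5), (0.1, 0.5)], sleep=lambda seconds: None)
print(demo.history)
```

On real hardware, the fake would be swapped for an actual servo object and the default `time.sleep` left in place. The press angle would need tuning against the fixture, since the build only works when the servo and Joy-Con are held rigidly.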

In the process of making buttonpusher, which uses CircuitPython, [Tommy] created a tool to automate away another pesky task he was running into: circuitpython_tools automatically watches for code changes, converts the .py files into (smaller) precompiled .mpy bytecode files, then deploys them to the board. This saved [Tommy] a lot of time and hassle during development, but it was only necessary because he quickly ran out of memory on his Metro M0 Express board, and couldn’t fit his code in any other way.
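The watch, compile, and deploy loop described above can be sketched in a few lines of desktop Python. This is a rough illustration of the idea, not how circuitpython_tools is actually implemented. The function names and directory layout are hypothetical, change detection is done by simple polling of modification times, and it assumes the `mpy-cross` compiler is on the `PATH` and that the board shows up as a mounted drive.

```python
import shutil
import subprocess
import time
from pathlib import Path


def changed_sources(src_dir, seen):
    """Return .py files whose modification time differs from the one in `seen`.

    `seen` maps each path to its last observed mtime and is updated in place.
    """
    changed = []
    for path in sorted(Path(src_dir).rglob("*.py")):
        mtime = path.stat().st_mtime_ns
        if seen.get(path) != mtime:
            seen[path] = mtime
            changed.append(path)
    return changed


def compile_and_deploy(path, src_dir, build_dir, board_dir, mpy_cross="mpy-cross"):
    """Compile one .py file to .mpy and copy the result to the board's drive."""
    rel = path.relative_to(src_dir).with_suffix(".mpy")
    out = Path(build_dir) / rel
    out.parent.mkdir(parents=True, exist_ok=True)
    # Assumes an mpy-cross binary matching the board's CircuitPython version.
    subprocess.run([mpy_cross, "-o", str(out), str(path)], check=True)
    dest = Path(board_dir) / rel
    dest.parent.mkdir(parents=True, exist_ok=True)
    shutil.copy2(out, dest)
    return dest


def watch(src_dir, build_dir, board_dir, interval=1.0):
    """Poll forever, recompiling and redeploying whatever changed."""
    seen = {}
    while True:
        for path in changed_sources(src_dir, seen):
            compile_and_deploy(path, src_dir, build_dir, board_dir)
        time.sleep(interval)
```

Calling something like `watch("src", "build", "/Volumes/CIRCUITPY")` would then keep the board in sync while editing. The mount point shown is just an example and varies by operating system.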

Still, it’s a good example of how one project can sometimes spawn others, and lead to all kinds of lessons learned. You can see buttonpusher automate the crafting process in Animal Crossing in the video, embedded below.
