Consider the complexity of the appendages sitting at the end of your arms. Human hands contain over a quarter of the entire complement of bones in the body, use dozens of muscles both in the hand itself and extending up the forearm, and are capable of an almost endless variety of movements. They are exquisite machines.

And yet when it comes to virtual reality, most simulations treat the hands like inert blobs. That may be partly due to their complexity; doing motion capture from so many joints can be computationally challenging. But this pressure-sensitive hand motion capture rig aims to change that. The product of an undergraduate project by [Leslie], [Hunter], and [Matthew], the rig is meant to provide an economical and effective way to capture gestures for virtual reality simulators, which generally focus on capturing large motions from the whole body.

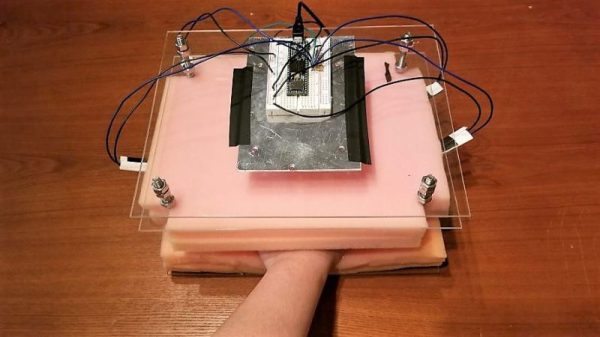

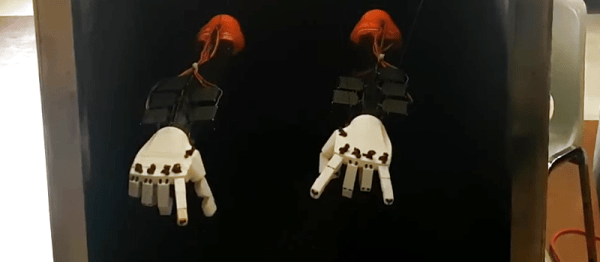

The sensor consists of a sandwich of polyurethane foam with strain gauge sensors embedded within. The user slips his or her hand into the foam and rests the fingers on the sensors. A Teensy and twenty lines of code translate finger motions within the sandwich into five axes of joystick movement, which are then sent to Unreal Engine, where the finger motions are mapped onto a 3D model of a hand to play a VR game of “Rock, Paper, Scissors.”
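To give a feel for how little code the finger-to-joystick step needs, here is a minimal sketch of the mapping in plain C++. It is not the team's firmware: the `Calibration` struct, the `toAxis` and `fingersToAxes` names, and the 0 to 1023 output range are all assumptions made for illustration, as is the idea of calibrating each finger with a "relaxed" and a "pressed" reading.

```cpp
#include <algorithm>
#include <array>

// Hypothetical constants: five fingers, and an assumed 0..1023 axis range.
constexpr int kFingers = 5;
constexpr long kAxisMax = 1023;

// Hypothetical per-finger calibration: raw sensor readings captured with the
// finger relaxed and with the finger fully pressed into the foam.
struct Calibration {
    int relaxed;
    int pressed;
};

// Linearly rescale one raw reading into the axis range, clamping readings
// that fall outside the calibrated span.
int toAxis(int raw, const Calibration& c) {
    if (c.pressed == c.relaxed) {
        return 0;  // degenerate calibration; avoid dividing by zero
    }
    long scaled = static_cast<long>(raw - c.relaxed) * kAxisMax /
                  (c.pressed - c.relaxed);
    return static_cast<int>(std::max(0L, std::min(scaled, kAxisMax)));
}

// Convert all five finger readings into five axis values.
std::array<int, kFingers> fingersToAxes(
    const std::array<int, kFingers>& raw,
    const std::array<Calibration, kFingers>& cal) {
    std::array<int, kFingers> axes{};
    for (int i = 0; i < kFingers; ++i) {
        axes[i] = toAxis(raw[i], cal[i]);
    }
    return axes;
}
```

On the actual hardware, the raw values would presumably come from the microcontroller's analog inputs, and each result would be handed to the board's USB joystick interface once per loop iteration; the game engine then sees nothing more exotic than an ordinary five-axis joystick.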

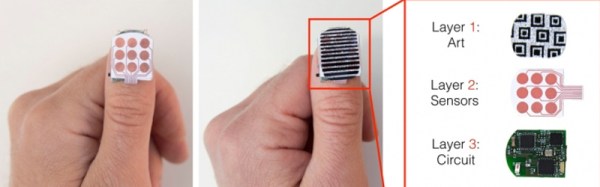

[Leslie] and her colleagues have a ways to go on this; testers complained that the flat hand posture was unnatural, and that the foam made their hands heat up quickly. Maybe something more along the lines of these gesture-capturing gloves would work?