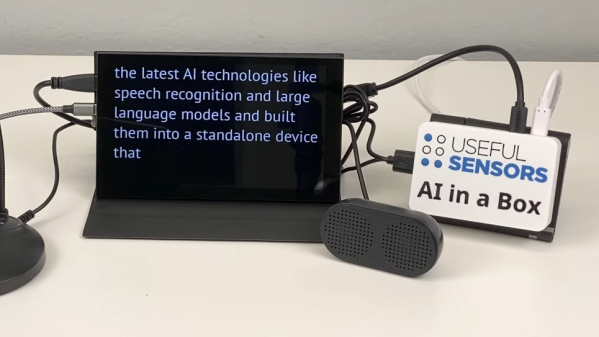

With AI in a Box, [Useful Sensors] aims to embed a variety of complementary AI tools into a small, private, self-contained module that needs no internet connection. It can do live voice recognition and captioning, live translation, and natural language conversational interaction with a local large language model (LLM). Intriguingly, it’s specifically designed with hack-friendly features, such as the ability to act as a voice keyboard by sending live transcribed audio as keystrokes over USB.

Right now it’s wrapping up a pre-order phase, and aims to ship units around the end of January 2024. The project is based around the RockChip 3588S SoC and is open source (GitHub repository), but since it’s still in development, there’s not a whole lot visible in the repository yet. However, a key part of getting good performance is [Useful Sensors]’s own transformers library for the RockChip NPU (neural processing unit).

The ability to perform high quality local voice recognition and run locally-hosted LLMs like LLaMA has gotten a massive boost thanks to recent advances in machine learning, and it looks like this project aims to tie them together in a self-contained package.

Perhaps private digital assistants can become more useful when users have the freedom to modify and integrate them as they see fit. Digital assistants hosted by the big tech companies are often frustrating, and others have observed that this is ultimately because they exist primarily to serve their makers rather than to help users.
