Weekends can be busy for a lot of us, but every so often one comes along that’s gloriously free and full of possibilities. If that’s you, you might consider taking a gander at [Peter Shirley]’s e-book “Ray Tracing in One Weekend”.

This is very much a zero-to-hero kind of class: it starts out by defining the PPM image format, which is easy to create and manipulate in nearly any language. The book uses C++, but as [Peter] points out in the introduction, you don’t have to follow along in that language; there is nothing unique to C++ that you couldn’t implement in your language of choice.
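To show why PPM is such a friendly starting point, here is a minimal Python sketch. It is not the book’s C++ code. The plain-text PPM variant is just a header (the magic number `P3`, the width and height, and the maximum color value) followed by one RGB triple per pixel. The function name `make_gradient_ppm` and the fixed blue value of 0.25 are illustrative assumptions. The function builds a simple red/green gradient test image.

```python
def make_gradient_ppm(width: int, height: int) -> str:
    """Return a plain-text (P3) PPM image containing a red/green gradient."""
    # Header: format magic number, image dimensions, maximum color value.
    lines = ["P3", f"{width} {height}", "255"]
    for j in range(height):          # rows, top to bottom
        for i in range(width):       # columns, left to right
            # Color channels as fractions in [0, 1]; guard against 1-pixel images.
            r = i / (width - 1) if width > 1 else 0.0
            g = j / (height - 1) if height > 1 else 0.0
            b = 0.25                 # arbitrary constant blue (assumption)
            # Scale to 0..255; 255.999 keeps 1.0 mapping to 255 after truncation.
            lines.append(f"{int(255.999 * r)} {int(255.999 * g)} {int(255.999 * b)}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Write a 256x256 image that most image viewers can open directly.
    with open("gradient.ppm", "w") as f:
        f.write(make_gradient_ppm(256, 256))
```

Because the format is plain text, you can debug your first renders just by opening the output in a text editor.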

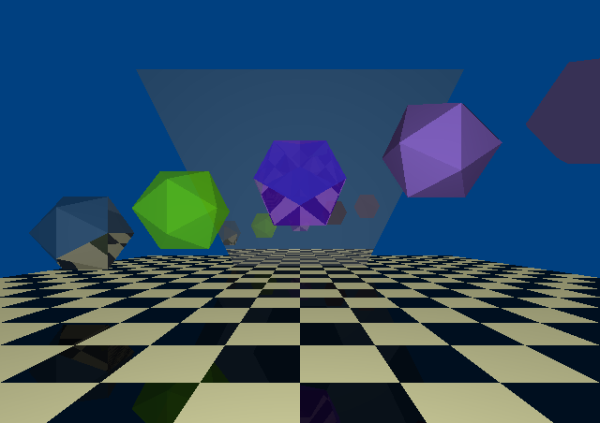

There are many types of ray tracers; technically, what you end up with after the weekend is a path tracer. It won’t replace Blender’s Cycles renderer, but it will produce some nice images and give you a place to build from. [Peter] manages to cram a lot of topics into a weekend, including diffuse materials, metals, dielectrics, refraction, and a camera class with simple lens effects.
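Many of those features rest on a few small pieces of vector geometry. The following is an independent minimal Python sketch, not the book’s code. It uses plain tuples in place of a vector class, and all function names are illustrative assumptions. It shows three pieces: a ray-sphere intersection test, the mirror reflection used for metals, and the Snell’s-law refraction used for dielectrics such as glass.

```python
import math
from typing import Optional, Tuple

Vec = Tuple[float, float, float]  # hypothetical stand-in for a vector class


def add(a: Vec, b: Vec) -> Vec: return (a[0] + b[0], a[1] + b[1], a[2] + b[2])
def sub(a: Vec, b: Vec) -> Vec: return (a[0] - b[0], a[1] - b[1], a[2] - b[2])
def scale(a: Vec, s: float) -> Vec: return (a[0] * s, a[1] * s, a[2] * s)
def dot(a: Vec, b: Vec) -> float: return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def hit_sphere(center: Vec, radius: float, origin: Vec, direction: Vec,
               t_min: float = 1e-3) -> Optional[float]:
    """Return the nearest t > t_min where origin + t*direction hits the sphere."""
    oc = sub(origin, center)
    # Quadratic a*t^2 + 2*half_b*t + c = 0 for points on the sphere surface.
    a = dot(direction, direction)
    half_b = dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = half_b * half_b - a * c
    if disc < 0:
        return None  # the ray misses the sphere entirely
    sqrtd = math.sqrt(disc)
    # Try the nearer root first; t_min skips hits right at the ray origin.
    for t in ((-half_b - sqrtd) / a, (-half_b + sqrtd) / a):
        if t > t_min:
            return t
    return None


def reflect(v: Vec, n: Vec) -> Vec:
    """Mirror reflection (metals): v - 2(v.n)n, with n a unit normal."""
    return sub(v, scale(n, 2.0 * dot(v, n)))


def refract(uv: Vec, n: Vec, eta_ratio: float) -> Vec:
    """Snell's-law refraction of unit vector uv through unit normal n.

    eta_ratio is the incident index divided by the transmitted index.
    """
    cos_theta = min(dot(scale(uv, -1.0), n), 1.0)
    # Component of the refracted ray perpendicular to the normal...
    r_perp = scale(add(uv, scale(n, cos_theta)), eta_ratio)
    # ...and the component parallel to it, chosen so the result is unit length.
    r_par = scale(n, -math.sqrt(abs(1.0 - dot(r_perp, r_perp))))
    return add(r_perp, r_par)
```

A full path tracer loops over pixels, fires rays through a camera, and recursively scatters them off whatever `hit_sphere` finds. These helpers only cover the geometry of a single bounce.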

If you find yourself with slightly more time, [Peter] has you covered: he has also released a follow-up, “Ray Tracing: The Next Week.” If you have a lot more time, check out his third book, “Ray Tracing: The Rest of Your Life.”

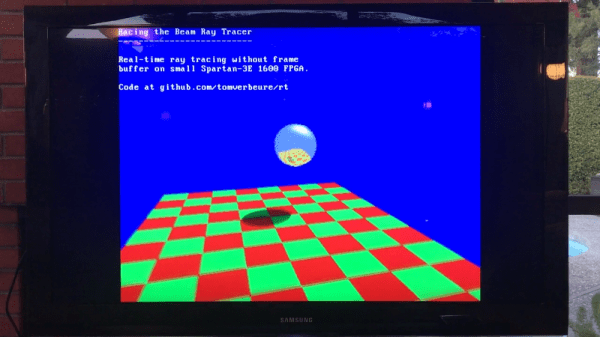

This weekend e-book shows that ray tracing doesn’t have to be the darkest of occult sciences, and it doesn’t need oodles of hardware, either. Even an Arduino can do it.