Finding that his recently purchased LED Christmas lights defaulted to an annoying blinking pattern that took a ridiculous seven button presses to disable each time they were powered up, [Matthew Millman] decided to build a new power supply that keeps things nice and simple. In his words, the goal was to enable “all lights on, no blinking or patterns of any sort”.

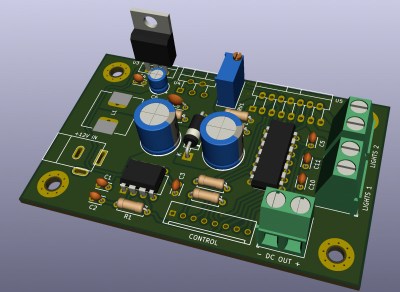

Connecting the existing power supply to his oscilloscope, [Matthew] found the stock “steady on” setting was a 72 V peak-to-peak square wave at about 500 Hz. To recreate this, he essentially needed to find a 36 VDC power supply and swap the polarity back and forth at the same frequency. In the end, the closest thing he could find in the parts bin was an HP printer power supply that put out 30 volts, so the lights aren’t quite as bright as they were before, but at least they aren’t blinking.
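
For anyone wanting to check the arithmetic, here is a rough Python sketch of the numbers involved. It assumes an ideal polarity swap with no losses in the switching stage, and the function names are made up for illustration rather than taken from [Matthew]'s write-up.

```python
# Back-of-the-envelope numbers for recreating the "steady on" waveform.
# Assumption: an ideal polarity-swapping stage with no voltage drop.

def supply_for_square_wave(v_peak_to_peak):
    """DC supply needed so that flipping its polarity gives the requested swing."""
    return v_peak_to_peak / 2.0


def swing_from_supply(v_supply):
    """Peak-to-peak swing seen by the load when a DC supply is flipped back and forth."""
    return 2.0 * v_supply


def half_period_ms(freq_hz):
    """Time spent in each polarity, in milliseconds."""
    return 1000.0 / (2.0 * freq_hz)


if __name__ == "__main__":
    print(supply_for_square_wave(72.0))  # supply implied by the scope reading
    print(swing_from_supply(30.0))       # swing available from the 30 V printer supply
    print(half_period_ms(500.0))         # how long each polarity lasts at 500 Hz
```

Under those assumptions the 30 V supply delivers a 60 V swing rather than 72 V, which lines up with the lights being a bit dimmer than stock.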

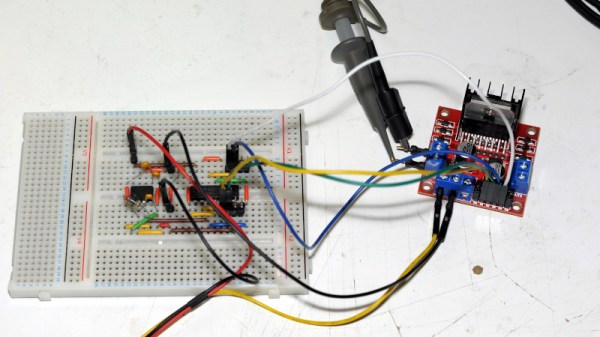

To turn that DC output into a pair of AC square waves, the power supply is connected to a common L298 H-bridge module. You might expect a microcontroller to show up at this point, but [Matthew] went old school and created his two alternating 500 Hz square waves with a 555 timer and a 74HC74D dual flip-flop.
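
To get a feel for how a 555 and a flip-flop can stand in for a microcontroller here, the following Python sketch models one plausible arrangement. It is not [Matthew]'s actual schematic: it assumes the 555 runs as an astable oscillator at roughly twice the target frequency, that one half of the 74HC74 is wired as a divide-by-two toggle (/Q fed back to D), and that Q and /Q drive the two inputs of one bridge channel. The resistor and capacitor values are hypothetical, picked only to land near 1 kHz.

```python
# Sketch of a 555 + flip-flop square wave generator driving an H-bridge.
# Assumptions (not from the original build): 555 in astable mode near 1 kHz,
# a D flip-flop wired as a toggle, and an ideal, lossless bridge.

def astable_555_hz(r1_ohms, r2_ohms, c_farads):
    """Textbook approximation for the output frequency of a 555 astable."""
    return 1.44 / ((r1_ohms + 2.0 * r2_ohms) * c_farads)


def divide_by_two(clock_levels):
    """Toggle on each rising clock edge and return (Q, /Q) for every sample."""
    q = 0
    previous = 0
    outputs = []
    for level in clock_levels:
        if previous == 0 and level == 1:  # rising edge detected
            q ^= 1
        previous = level
        outputs.append((q, 1 - q))
    return outputs


def bridge_output(q, q_bar, supply_volts):
    """Voltage across the load for an ideal H-bridge driven by Q and /Q."""
    return supply_volts * (q - q_bar)


if __name__ == "__main__":
    # Hypothetical component values giving a clock of roughly 1 kHz.
    print(astable_555_hz(1_000, 6_800, 100e-9))

    clock = [1, 0, 1, 0, 1, 0, 1, 0]  # two samples per clock cycle
    load = [bridge_output(q, qb, 30.0) for q, qb in divide_by_two(clock)]
    print(load)  # polarity flips once per full clock cycle
```

The appeal of the toggle stage is that its output is a 50% duty cycle square wave at half the clock frequency no matter how lopsided the 555's own output is, and Q and /Q are complementary by construction, so the two bridge inputs are never asked to be high at the same time.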

Unfortunately, he didn’t have the time to get a custom PCB made before Santa’s big night, though as he points out, legitimate L298s are backordered well into next year anyway, so having the board in hand wouldn’t have helped much. The end result is that the circuit has to live on a breadboard for the current holiday season, but hopefully around this time next year we’ll get a chance to see the final product.