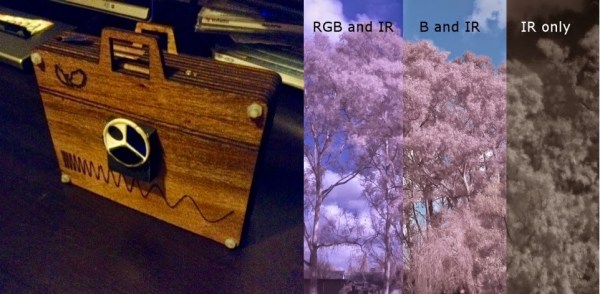

For [Peter Le Roux]’s first “real” electronics project, he decided to build a camera based on the venerable Raspberry Pi platform. But he didn’t want just a regular camera; he wanted one that could shoot in near-IR wavelengths…

It’s a well-known fact that you can remove the IR-blocking filter from most cameras to turn them into quasi-IR cameras. Heck, that hack has been around nearly as long as we have! The problem is that even once IR light reaches the camera’s sensor, visible light still gets through as well unless you add a filter to block it. There are several filters that can do the job, so [Peter] decided to use them all, mounted on an adjustable wheel that lets him flip between them.

He based the case design on the PiBow enclosure (see our full Pi Case Roundup) and had it laser cut from wood. Stick around after the break for a nice explanation of the light spectrum and the various filters [Peter] uses.

Continue reading “Camera Mod Lets This Raspberry Pi Shoot In Different Spectrums”

This project is