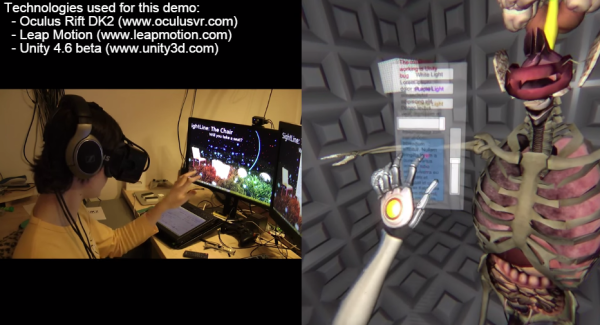

It isn’t uncommon to see a robot hand controlled with a glove to mimic a user’s motion. [All Parts Combined] has a different method. Using a Leap Motion controller, he can record hand motions with no glove and then play them back to the robot hand at will. You can see the project in the video below.

The project seems straightforward enough, but apparently, the Leap documentation isn’t the best. Since he worked it out, though, you might find the code useful.

An ESP8266 runs everything, although you could probably get by with less. The Leap provides more data than the hand has servos, so it took a bit of algorithm development to boil that data down to something the servos can use.
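To give a feel for that kind of data reduction, here is a minimal Python sketch. It is not the project's code, and every name in it is hypothetical. It assumes each finger arrives as two 3D direction vectors (one for the bone nearest the palm, one for the fingertip bone), that a 90 degree bend counts as fully curled, and that each finger has one servo with a 0 to 180 degree range.

```python
import math

def angle_between(u, v):
    """Angle in radians between two 3D vectors (0.0 if either has zero length)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    if norm == 0:
        return 0.0
    # Clamp to guard against rounding pushing the cosine just outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / norm)))

def finger_to_servo(proximal_dir, distal_dir,
                    max_bend=math.pi / 2, servo_min=0, servo_max=180):
    """Collapse one finger's bone directions into a single servo angle.

    max_bend, servo_min and servo_max are assumed values, not measured ones.
    """
    bend = angle_between(proximal_dir, distal_dir)
    curl = min(bend / max_bend, 1.0)  # 0.0 = straight, 1.0 = fully curled
    return round(servo_min + curl * (servo_max - servo_min))

def hand_to_servos(fingers):
    """Map a list of (proximal_dir, distal_dir) pairs to servo angles."""
    return [finger_to_servo(p, d) for p, d in fingers]
```

Calling `hand_to_servos` with five such pairs would yield five angles, one per finger. A real build would likely need per-finger calibration and some smoothing on top of this.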

We picked up a few tips about building flexible fingers using heated vinyl tubing. Never know when that’s going to come in handy — no pun intended. The cardboard construction isn’t going to be pretty, but a glove cover works well. You could probably 3D print something, too.

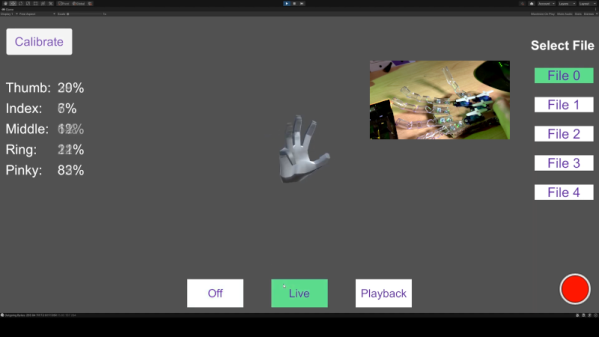

The Unity app will drive the hand live or can play back one of the five recorded routines. You can see how the record and playback work in the video.
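Record and playback of this sort can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Unity app's actual logic: the `RoutineRecorder` class, its five slots, and the `send` callback that would push angles to the hand are all hypothetical names.

```python
import time

class RoutineRecorder:
    """Stores timestamped servo-angle frames in a fixed number of slots."""

    def __init__(self, slots=5):
        # One list of (timestamp, angles) frames per routine slot.
        self.routines = [[] for _ in range(slots)]

    def record(self, slot, timestamp, angles):
        """Append one frame of servo angles to the given slot."""
        self.routines[slot].append((timestamp, list(angles)))

    def playback(self, slot, send, sleep=time.sleep):
        """Replay a slot, waiting between frames to preserve the original timing."""
        previous = None
        for timestamp, angles in self.routines[slot]:
            if previous is not None:
                sleep(timestamp - previous)
            send(angles)
            previous = timestamp
```

Passing `sleep` as a parameter keeps the timing testable. In live mode, the same `send` callback could simply be called on every incoming frame without recording.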

This reminded us of another robot hand project, this one 3D printed. We’ve seen more traditional robot arms moving with a Leap before, too.