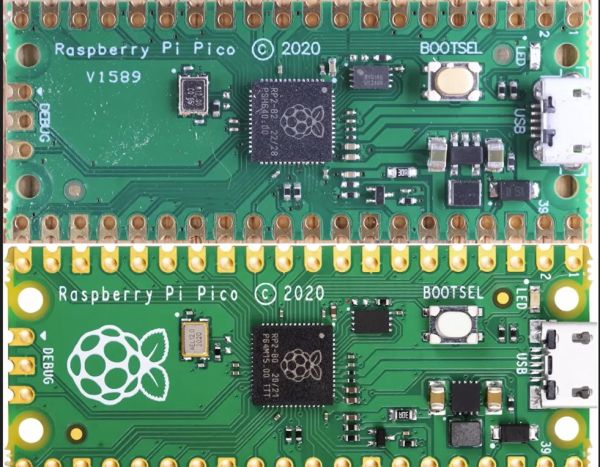

Although [Thomas] really likes the Raspberry Pi Pico 2 and its RP2350 MCU, he absolutely, totally, really doesn't like the micro-USB connector on it. Hence he jumped at the opportunity to buy a Pico 2 clone from AliExpress that pairs the same MCU with a USB-C connector. After receiving the new board, he set about comparing the two to see whether the clone was worth it after all. The accompanying video goes into even more detail on why you should avoid this particular clone board.

In the video, the respective components of both boards are analyzed and compared to see how they stack up. The worst issues with the clone Pico 2 are a USB trace impedance of 130 Ω, well above the 90 Ω differential impedance that USB specifies, and a cut in the ground plane below those traces. Neither will do signal integrity any favors.
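To put a rough number on that mismatch, here is a minimal sketch using the standard reflection coefficient formula Γ = (Z_L − Z_0)/(Z_L + Z_0). It assumes an idealized lossless line and treats the clone's 130 Ω figure as a differential impedance compared against USB 2.0's nominal 90 Ω; the function names are illustrative only.

```python
import math


def reflection_coefficient(z_load: float, z_ref: float) -> float:
    """Voltage reflection coefficient at a step from z_ref to z_load (ohms).

    Idealized lossless-line model: Gamma = (Z_L - Z_0) / (Z_L + Z_0).
    """
    return (z_load - z_ref) / (z_load + z_ref)


def return_loss_db(gamma: float) -> float:
    """Return loss in dB; a larger value means less of the signal is reflected."""
    return -20.0 * math.log10(abs(gamma))


# Assumed values: 90 ohm nominal USB 2.0 differential impedance,
# and the 130 ohm trace impedance reported for the clone board.
Z_USB = 90.0
Z_CLONE = 130.0

gamma = reflection_coefficient(Z_CLONE, Z_USB)
print(f"reflection coefficient: {gamma:.3f}")        # 0.182
print(f"reflected power: {gamma ** 2 * 100:.1f} %")   # 3.3 %
print(f"return loss: {return_loss_db(gamma):.1f} dB") # 14.8 dB
```

This idealized figure ignores trace length, the ground plane cut and the signalling rate, so it only indicates the size of the mismatch at that one discontinuity rather than predicting whether a given USB link will fail.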

There is also an issue with the routing of the RP2350's internal buck converter: the VREG_FB pin is left unconnected, contrary to the recommended layout in the RP2350 datasheet. The power supply issues continue with the LN3440 DC-DC converter used on the clone, which is rated for 800 mA rather than the 1 A of the converter on the Pico 2. It also performed rather poorly during load tests, with one board dying at an 800 mA load.

Continue reading “Comparing A Clone Raspberry Pi Pico 2 With An Original One”