We’re used to seeing technologies move with the times, and it’s likely that Hackaday readers are the group who spend the most time doing that and are most aware of it. Yet there’s one change we’ll all recognise that has quietly slipped away for most of us, almost without a word: I speak, of course, of 32-bit computing. For most of us that means 32-bit computing on x86 machines, and since the 64-bit x86 instruction set we all now use has been around for nearly a quarter century, its 32-bit ancestor is now ancient history.

In the world of software, that means we’re now in an era of operating systems and browsers dropping 32-bit support, so keeping a 32-bit machine up to date will become increasingly challenging. That sounds like something just painful and difficult enough to subject to a Daily Drivers piece, so just how practical is it to use a 32-bit machine for my daily work in 2026?

2005 Just Gave Me A Computer

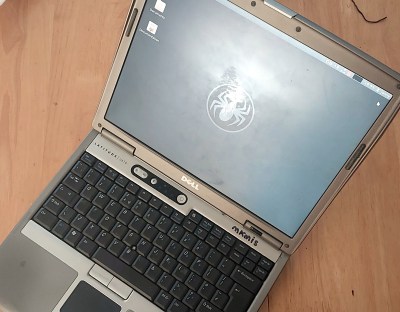

On my desk I have a Dell Latitude D610. It was made in about 2005, in the days when Dells were solidly made, and with its 1.6GHz Pentium M and 2GB of memory it represents roughly the final throw of the dice for a 32-bit Intel laptop. Just over a year later it would have been replaced by a machine from the Intel Core 2 series, with the 64-bit instructions Intel had grudgingly adopted from AMD, but at the time it was a respectably useful machine.

It came into my possession about eight years ago when I used it to test the Revbank bar tab software for my hackerspace, and for the past six years it’s languished unloved in my box there. It’s got an ancient Ubuntu distro on it, so my first task is to pick a 32-bit replacement from 2026. That’s now a dwindling selection, so it’s time to start digging through some minimalist distros. With the supply of those based on mainstream distros drying up as they drop 32-bit support, it’s time to look into more esoteric offerings. This fits well with the ethos of this series: we’re all about the unusual here.

Cutting out the mainstream-based distros certainly narrows the field, and out of the promising contenders in the minimalist field, I went for SliTaz. It’s built around BusyBox, uses the Openbox desktop, and runs from RAM. I was looking for good application support in the repos, and this distro has the things I need. Download it, write it to a USB stick, and let’s see what it can do. I know one thing: I wouldn’t have been able to download that ISO in five seconds with the internet connection I had in 2005. Continue reading “Jenny’s Daily Drivers: Going 32-Bit With SliTaz In 2026”