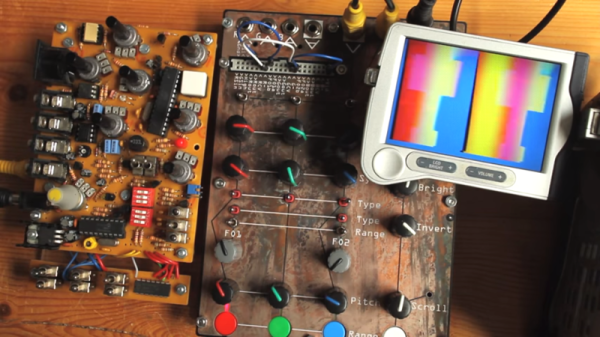

Guitar pedals are designed to take in a sound signal, do fun stuff to it, and then spit it out to your amplifier where it hopefully impresses other people. However, [Liam Taylor] decided to see what would happen if you fed video through a guitar pedal instead.

The device under test is a Boss ME-50 multi-effects unit. It’s capable of serving up a wide range of effects, from delay to chorus to reverb, along with compression, distortion, and a smattering of others. [Liam] wired the composite video output of an old 2000s-era Sony camcorder to a 3.5 mm audio jack and plugged it straight into the ME-50’s auxiliary input (notably, not the device’s main guitar input).

The multi-effects pedal isn’t meant to handle an analog video signal, but it will pass one through and do weird things to it regardless. Using the ME-50’s volume pedal puts odd lines across the picture, while a wah effect makes everything a little wobbly. [Liam] then steps through a whole range of other effects, like ring modulation, octave, and reverb, each of which mangles the visuals in its own way. Particularly fun are some of the periodic effects, which create predictable, repeating variations in the signal. True to its name, the distortion effect does an especially good job of messing things up overall.

It’s a fun experiment, and it reminds us of some of the fantastic analog video synths of years past. Video after the break.
