Many people find being around insects uncomfortable. This is understandable, and only likely to get worse as technology gives these multi-legged critters augmented bodies to roam around with. [tech_support], for one, welcomes our new arthropod overlords, and has even built them a sweet new ride to get around in.

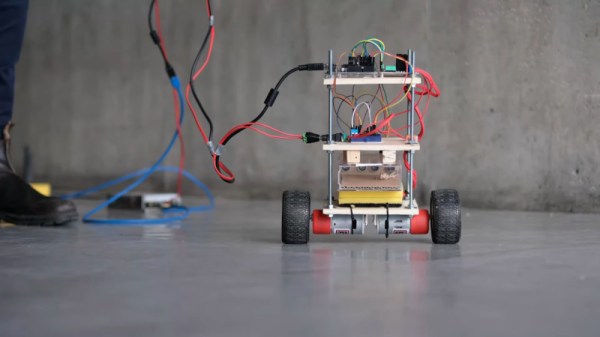

The build has the usual hallmarks of a self-balancing bot, with a couple of interesting twists. There are twin brushed motors for drive, and an Arduino Uno running the show. Instead of the more pedestrian IMUs usually seen, however, this rig employs the Bosch BNO055 Absolute Orientation Sensor. This combines a magnetometer, gyroscope, and accelerometer in a single package, and handles all the complicated sensor fusion maths onboard, so it can output simpler and more readily usable orientation data.
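To give a feel for why fused orientation data simplifies things, here is a minimal sketch of a balance loop that takes a pitch angle and returns a motor command. It is not the team's firmware: the write-up doesn't describe their control law, so the PID structure, the `BalancePid` name, the gains, and the sign convention (positive pitch means leaning forward, positive command means drive forward) are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class BalancePid:
    """PID loop mapping pitch error (degrees) to a motor command in [-1, 1].

    Hypothetical example: the gains below are placeholders, not values
    taken from the build.
    """
    kp: float = 0.08
    ki: float = 0.0
    kd: float = 0.004
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, pitch_deg: float, target_deg: float, dt: float) -> float:
        # Error is how far the bot leans away from the desired pitch.
        error = pitch_deg - target_deg
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        command = (self.kp * error
                   + self.ki * self.integral
                   + self.kd * derivative)
        # Clamp to the normalised range a motor driver would accept.
        return max(-1.0, min(1.0, command))
```

With a sensor that reports pitch directly, the loop body reduces to reading one angle and calling `update()` at a fixed rate; a raw IMU would need a filter in front of it to produce that angle first.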

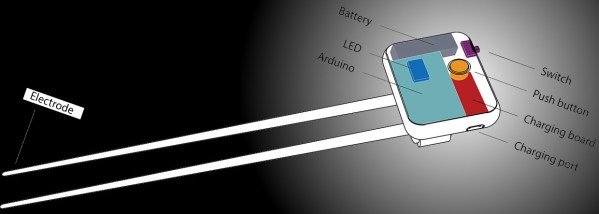

The real party piece is even more interesting, however. Rather than radio control or a line-following algorithm, this self-balancer gets its very own insect pilot. The insect is placed in a small chamber, with ultrasonic sensors used to determine its position. The insect can then steer the bot by moving around the chamber. The team has even developed several versions of the code to dial in the sensor system for different types of insect.
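As a rough illustration of how a pilot's position could become a drive command, the sketch below converts an ultrasonic echo time to a distance and maps an offset from the chamber centre to forward and turn values. The write-up doesn't say how many sensors are used or how the team maps position to motion, so the function names, the centre-relative coordinates, and the deadzone are assumptions rather than the project's actual scheme.

```python
SPEED_OF_SOUND_M_S = 343.0  # dry air at roughly 20 C


def echo_to_distance_m(echo_s: float) -> float:
    """Convert a round-trip echo time (seconds) to a one-way distance (metres)."""
    return echo_s * SPEED_OF_SOUND_M_S / 2.0


def position_to_command(x_m: float, y_m: float, half_width_m: float,
                        deadzone: float = 0.2) -> tuple[float, float]:
    """Map an offset from the chamber centre to (forward, turn) in [-1, 1].

    Hypothetical mapping: y is the front-back axis, x is left-right.
    Offsets inside the deadzone are ignored so a resting insect
    doesn't make the bot creep.
    """
    def axis(offset_m: float) -> float:
        n = max(-1.0, min(1.0, offset_m / half_width_m))
        return 0.0 if abs(n) < deadzone else n

    return axis(y_m), axis(x_m)
```

A per-insect tuning step might then amount to adjusting `deadzone` and `half_width_m` to suit the size and activity level of the pilot, though that is a guess at what "dialing in" involves here.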

It’s not the first time we’ve seen insects augmented with robotic hardware, and we doubt it will be the last. If you’re working on a mad science project of your own, drop us a line. Video after the break.
