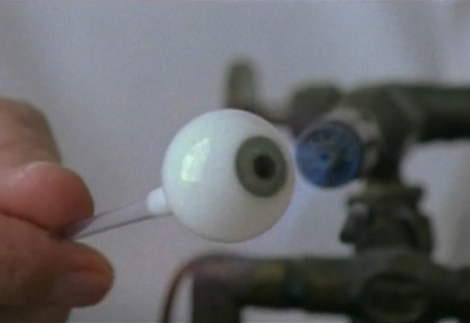

Here’s a story of an ocularist who makes prosthetic eyes from glass. This is obviously a necessary and important service, but we were surprised to learn that it seems to be something of a dying art. [Mr. Haas] lives in the UK but notes that most glass eye makers have been German, and that they tend to pass the trade down to their children. With that father-to-son (or daughter) transfer of knowledge becoming less common these days, we wonder just how many people still know how to do this.

But don’t despair: there will still be a source for ocular prostheses, as acrylic eyes are quite common. Still, what we see in the video after the break is breathtaking, and we’d hate to see the knowledge and experience lost the way vacuum tube manufacture and even common blacksmithing have been.