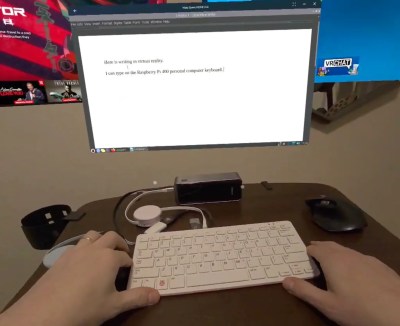

[Chris] had an idea. When playing VR games like Beat Saber, he realized that spectators without headsets were left out of the experience. He wanted to create some environmental lighting that would make everyone in the room feel like part of the action. He’s taken the first steps towards that goal, interfacing SteamVR controllers with addressable LEDs.

Armed with Python, OpenVR, and some help from ChatGPT, [Chris] got to work. He soon had a mapping utility that let him build a virtual representation of where his WLED-controlled LED strips were installed in the real world. Once everything was mapped out, he was able to set things up so that pointing the controller at a given location would light the corresponding LED strips. Wave at the windows, the strips on that wall light up. Wave towards the other wall, the same thing happens.
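To give a feel for how this kind of pointing-to-strip mapping might work, here is a minimal sketch in Python. It is not [Chris]'s code. The room coordinates, segment IDs, angle threshold, and helper names (`pick_strip`, `wled_state`) are all made up for illustration, and the controller position and pointing direction are assumed to have already been pulled out of the tracked pose. The sketch picks whichever mapped strip lies closest to the pointing ray, then builds a body in the shape WLED's JSON API accepts for segment state (`seg`, `id`, `on`, `col`).

```python
import math

# Hypothetical room map: WLED segment id -> (start, end) of each strip, in metres.
STRIPS = {
    0: ((-2.0, 2.4, -3.0), (2.0, 2.4, -3.0)),  # e.g. the window wall
    1: ((3.0, 2.4, -2.0), (3.0, 2.4, 2.0)),    # e.g. the side wall
}

def angle_to_point(origin, direction, point):
    """Angle in radians between the pointing ray and the line from origin to point."""
    to_point = [p - o for p, o in zip(point, origin)]
    dist = math.sqrt(sum(c * c for c in to_point))
    length = math.sqrt(sum(c * c for c in direction))
    if dist == 0 or length == 0:
        return 0.0
    cos = sum(d * t for d, t in zip(direction, to_point)) / (dist * length)
    return math.acos(max(-1.0, min(1.0, cos)))

def pick_strip(origin, direction, strips=STRIPS,
               max_angle=math.radians(30), samples=9):
    """Return the id of the strip nearest the ray, or None if none is within max_angle."""
    best_id, best_angle = None, max_angle
    for seg_id, (start, end) in strips.items():
        # Sample points along the strip and keep the smallest angle to the ray.
        for i in range(samples):
            t = i / (samples - 1)
            point = tuple(s + t * (e - s) for s, e in zip(start, end))
            angle = angle_to_point(origin, direction, point)
            if angle < best_angle:
                best_id, best_angle = seg_id, angle
    return best_id

def wled_state(strips, active_id, on_color=(255, 160, 0)):
    """Build a WLED-style JSON state dict: the active segment on, the others off."""
    return {
        "seg": [
            {"id": seg_id, "on": seg_id == active_id, "col": [list(on_color)]}
            for seg_id in sorted(strips)
        ]
    }

# Controller held at chest height, pointing at the (hypothetical) window wall.
state = wled_state(STRIPS, pick_strip((0.0, 1.2, 0.0), (0.0, 0.0, -1.0)))
```

In a real setup, a dict like `state` would be sent as JSON in an HTTP POST to the WLED controller's `/json/state` endpoint. The angle threshold is a design choice: it keeps the lights from jumping to a strip when the controller is pointed at the floor or nowhere in particular.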

Right now, the project is just a proof of concept. [Chris] has enabled basic interactivity between the controllers and the lights; he just hasn’t fully built it out or gamified it yet. The big question is obvious, though: can you use this setup while actually playing a game?

“I just found the OpenVR function/object that allows it to act as an overlay, meaning it can function while other games are working,” [Chris] told me. “My longer term goals would be trying to interface more with a game directly such as Beat Saber, and the light in the room would correspond with the game environment.”
