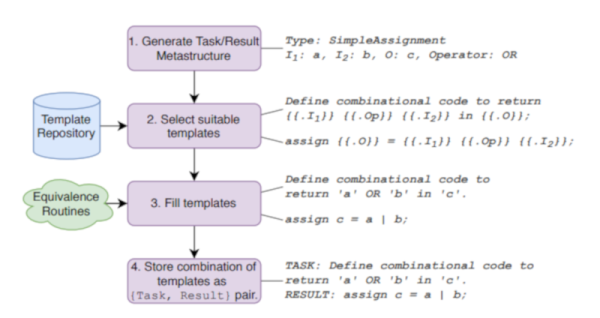

We were always envious of Star Trek’s computers. No programming needed: just tell the computer what you want and it does it. Of course, HAL 9000 had the same interface, and that didn’t work out so well. Some researchers at NYU have taken GPT-2, a natural language machine learning system, and taught it to generate Verilog code for use in FPGA systems. Ironically, they called it DAVE (Deriving Automatically Verilog from English). It sounds great, but we have to wonder whether it is more than a parlor trick. You can try it yourself if you like.

For example, DAVE can take input like “Given inputs a and b, take the nor of these and return the result in c.” Fine. A more complex example from the paper isn’t quite so easy to puzzle out:

> Write a 6-bit register ‘ar’ with input defined as ‘gv’ modulo ‘lj’, enable ‘q’, synchronous reset ‘r’ defined as ‘yxo’ greater than or equal to ‘m’, and clock ‘p’. A vault door has three active-low secret switch pressed sensors ‘et’, ‘lz’, ‘l’. Write combinatorial logic for a active-high lock ‘s’ which opens when all of the switches are pressed. Write a 6-bit register ‘w’ with input ‘se’ and ‘md’, enable ‘mmx’, synchronous reset ‘nc’ defined as ‘tfs’ greater than ‘w’, and clock ‘xx’.
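To untangle what that prompt actually asks for, here is one possible reading of it as a small cycle-level Python model. It is a sketch, not DAVE’s output or the paper’s reference answer. The function names are hypothetical, and several details are our assumptions because the prompt leaves them open: registers reset to 0, reset takes priority over enable, “input ‘se’ and ‘md’” means a bitwise AND, all signals are unsigned, and ‘lj’ is never zero.

```python
# Hypothetical behavioral model of the three-part prompt above.
# ASSUMPTIONS (not stated in the prompt): synchronous reset clears a register
# to 0 and takes priority over enable; 'se' and 'md' are combined with a
# bitwise AND; all signals are unsigned integers; 'lj' is never zero.
# Each *_next function returns a register's value after one rising clock edge
# ('p' for ar, 'xx' for w).

MASK6 = 0x3F  # keeps results to the 6-bit register width


def vault_lock(et: int, lz: int, l: int) -> int:
    """Combinational active-high lock 's'.

    The sensors are active-low, so a pressed switch reads 0; the lock
    opens (returns 1) only when all three read 0.
    """
    return int(et == 0 and lz == 0 and l == 0)


def ar_next(ar: int, gv: int, lj: int, q: int, yxo: int, m: int) -> int:
    """6-bit register 'ar': input gv % lj, enable q, reset r = (yxo >= m)."""
    if yxo >= m:  # synchronous reset r (assumed to win over enable)
        return 0
    if q:  # enable: load the new input, truncated to 6 bits
        return (gv % lj) & MASK6
    return ar  # enable low: hold the current value


def w_next(w: int, se: int, md: int, mmx: int, tfs: int) -> int:
    """6-bit register 'w': input se & md (assumed), enable mmx, reset nc = (tfs > w).

    Note that the reset condition compares against w's own current value.
    """
    if tfs > w:  # synchronous reset nc
        return 0
    if mmx:  # enable: load the new input, truncated to 6 bits
        return (se & md) & MASK6
    return w  # enable low: hold the current value


if __name__ == "__main__":
    print(vault_lock(0, 0, 0))         # all switches pressed: prints 1
    print(ar_next(5, 17, 5, 1, 0, 1))  # enabled load of 17 % 5: prints 2
```

Writing it out this way makes the gaps visible: the prompt never says what the registers reset to or whether reset beats enable, so any generated design has to settle those questions one way or another.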

Continue reading “I’m Sorry Dave, You Shouldn’t Write Verilog”