It is perhaps humanity’s most defining trait that we are always striving to build things better, stronger, faster, or bigger than that which came before. Taller skyscrapers, longer bridges, and computers with more processors all advance thanks to this relentless persistence.

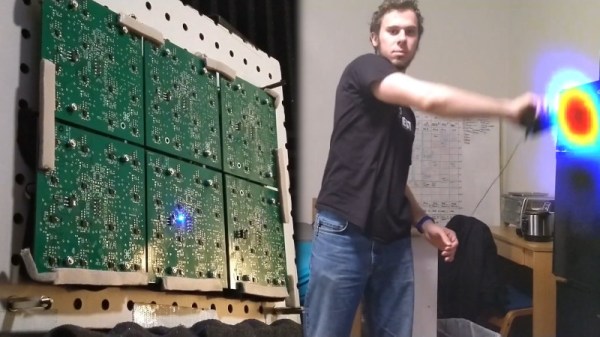

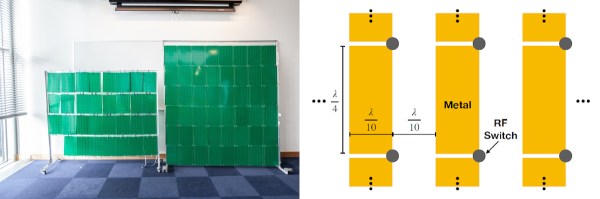

In the world of radio, we might assume that a better signal simply means adding more power, but performance can also improve by adding more antennas. Not only do more antennas increase gain, but the resulting beam can also be steered electronically, and [MAKA] demonstrates how to do this with a software-defined radio (SDR) phased array.

The project comes to us in two parts. In the first part, two ADALM-Pluto SDR modules are used, with one set to transmit and the other to receive. The transmitting SDR has two channels, one of which has the phase angle of the transmitted radio wave fixed while the other is swept from -180° to 180°. These two waves interfere with each other throughout the sweep, constructively at some phase offsets and destructively at others, so one particular offset delivers a much stronger signal to the receiver. This information is all presented to the user via a GUI.
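To get a feel for why the sweep produces a peak, here is a minimal sketch of the idea in plain Python. It is not [MAKA]'s code and does not talk to a Pluto at all: it simply models two equal-amplitude waves arriving at a distant receiver. The function names, the half-wavelength element spacing, and the sign convention for the receiver angle are all assumptions made for illustration.

```python
import cmath
import math


def received_amplitude(phase_deg, rx_angle_deg, spacing_wavelengths=0.5):
    """Magnitude of two unit-amplitude waves summed at a distant receiver.

    phase_deg is the phase applied to the swept channel; the other channel
    is the fixed reference. Spacing of 0.5 wavelengths is an assumption.
    """
    # Phase difference caused by the extra path length to the receiver.
    geometric = (2 * math.pi * spacing_wavelengths
                 * math.sin(math.radians(rx_angle_deg)))
    applied = math.radians(phase_deg)
    return abs(1 + cmath.exp(1j * (geometric + applied)))


def sweep(rx_angle_deg, step_deg=1):
    """Sweep the second channel from -180 to 180 degrees.

    Returns the phase offset that gives the strongest signal, and that peak.
    """
    phases = range(-180, 181, step_deg)
    best = max(phases, key=lambda p: received_amplitude(p, rx_angle_deg))
    return best, received_amplitude(best, rx_angle_deg)


if __name__ == "__main__":
    best_phase, peak = sweep(rx_angle_deg=30)
    print(f"strongest signal at {best_phase} degrees, amplitude {peak:.3f}")
```

The peak lands where the applied phase cancels the geometric one, so the two waves add in step. Half a cycle away from that point they cancel almost completely, which is the dip you would expect to see in a plot of the sweep.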

The second part works a bit like the first, but in reverse. By using the two antennas as receivers instead of transmitters, the phased array can calculate the precise angle of arrival of a particular radio wave from the phase difference between the two received signals, allowing the user to pinpoint the direction it is being transmitted from. These principles form the basis of things like phased array radar, and if you’d like more visual representations of how these systems work, take a look at this post from a few years ago.
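The receive side can be sketched the same way. The example below is an illustrative model rather than the project's implementation: it fabricates complex baseband samples for two antennas, measures the phase difference between them, and converts that to an angle. The helper names, the half-wavelength spacing, and the convention that a positive angle makes the second antenna lead in phase are assumptions.

```python
import cmath
import math


def simulate_samples(angle_deg, spacing_wavelengths=0.5, n=64):
    """Synthetic complex samples at two antennas for a plane wave."""
    delta = (2 * math.pi * spacing_wavelengths
             * math.sin(math.radians(angle_deg)))
    # An arbitrary tone on the first antenna; the second sees the same
    # tone shifted by the geometry-dependent phase delta.
    rx0 = [cmath.exp(1j * 0.3 * k) for k in range(n)]
    rx1 = [s * cmath.exp(1j * delta) for s in rx0]
    return rx0, rx1


def angle_of_arrival(rx0, rx1, spacing_wavelengths=0.5):
    """Estimate the arrival angle in degrees from the inter-antenna phase."""
    # Summing the conjugate product averages the phase difference
    # over all samples.
    corr = sum(b * a.conjugate() for a, b in zip(rx0, rx1))
    delta = cmath.phase(corr)
    # Invert delta = 2*pi*d*sin(theta), clamping against rounding error.
    s = delta / (2 * math.pi * spacing_wavelengths)
    s = max(-1.0, min(1.0, s))
    return math.degrees(math.asin(s))


if __name__ == "__main__":
    rx0, rx1 = simulate_samples(angle_deg=20)
    print(f"estimated angle: {angle_of_arrival(rx0, rx1):.2f} degrees")
```

Half-wavelength spacing is a common choice because it keeps the phase difference within ±180° for every direction in front of the array, so each measured phase maps to a single angle. Wider spacing would make several directions indistinguishable.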
