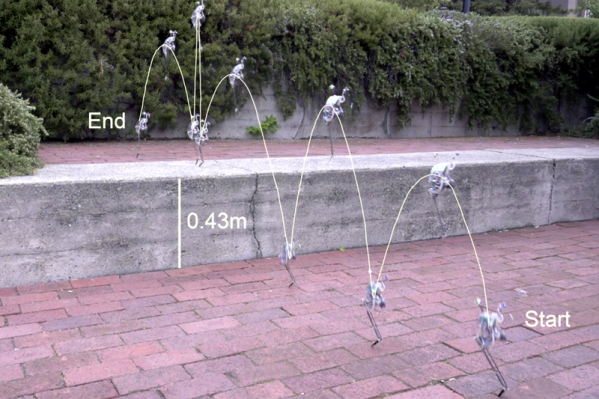

At first, we thought this robot was like a rabbit, until we realized rabbits have a 300% bonus in the leg department. SALTO, a robot from [Justin Yim], [Eric Wang], and [Ronald Fearing], has only one leg but gets around quite well, hopping from place to place. If you can't picture it, the video below will make it very obvious.

According to the paper describing SALTO, existing hopping robots require external sensors and are often tethered. SALTO, by contrast, is self-contained. The robot weighs a tenth of a kilogram and takes its name from the word saltatorial (adapted for leaping), which itself comes from the Latin *saltare*, meaning to jump or leap.