[Notch], the guy behind Minecraft, is currently working on a new game called 0x10c. The game includes an in-game 16-bit computer called the DCPU that hearkens back to 1980s microcomputers and their really weird hardware architectures. [Benedek] thought it would be a great idea to turn his ThinkPad into a DCPU, so he wrote a bootable DCPU emulator for x86 that is fully compliant with the current DCPU spec.

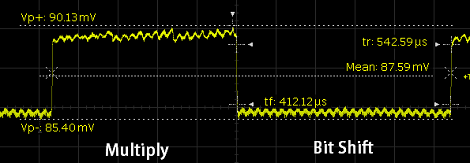

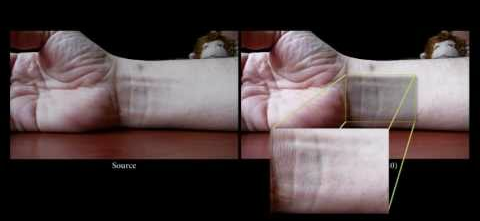

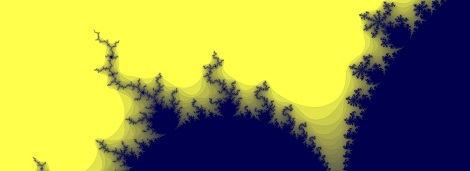

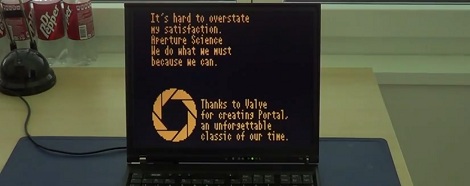

This bootable DCPU emulator comes from the fruitful workshop of [Benedek], the brains behind drawing fractals on the DCPU, emulating bit-flipping radiation, and even putting the Portal end credits inside [Notch]’s 0x10c computer.

[Benedek] wrote this new emulator in x86 assembly, so it can be booted without an OS from a USB flash drive on any old laptop. Running on bare metal allows for direct hardware communication for everything implemented for the DCPU so far.
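
For readers wondering what the core of a DCPU emulator has to do, here is a minimal sketch of the instruction-decode step. It is written in Python for readability and is not [Benedek]’s code, which is x86 assembly. The function name `decode` is hypothetical, and the bit layout (`aaaaaabbbbbooooo`, with a 5-bit opcode in the low bits) is assumed from version 1.7 of the DCPU-16 spec; other spec revisions split the word differently.

```python
def decode(word):
    """Split a 16-bit DCPU instruction word into (opcode, b, a).

    Assumed layout (DCPU-16 spec 1.7, LSB-0): aaaaaabbbbbooooo
    """
    word &= 0xFFFF                 # keep only the low 16 bits
    opcode = word & 0x1F           # lowest 5 bits: the operation
    b = (word >> 5) & 0x1F         # next 5 bits: destination operand
    a = (word >> 10) & 0x3F        # top 6 bits: source operand
    return opcode, b, a


# 0x7c01 is the first word of "SET A, 0x30" in the spec's sample program.
opcode, b, a = decode(0x7C01)
print(hex(opcode), hex(b), hex(a))
```

Under that assumed layout, opcode `0x01` is SET, `b = 0x00` selects register A, and `a = 0x1f` means the literal lives in the next word. A full emulator wraps this in a fetch-execute loop and adds the hardware devices on top.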

If you’d like to run your own bare-metal DCPU, [Benedek] made all the files available. Since the entire emulator is only 1800 lines of x86 assembly, it’s possible to load this off a floppy disk, an ancient technology we’ll be seeing in [Notch]’s new game.

Oh. One more thing. When we were introduced to 0x10c, we said we’d be holding a contest for the best hardware implementation of the DCPU. We’re still waiting on some of the hardware specs to be released (hard drives and the MIDI-based serial interface), so we’ll probably hold that contest once there is a playable alpha release. [Benedek]’s bootable emulator is a great start, though.