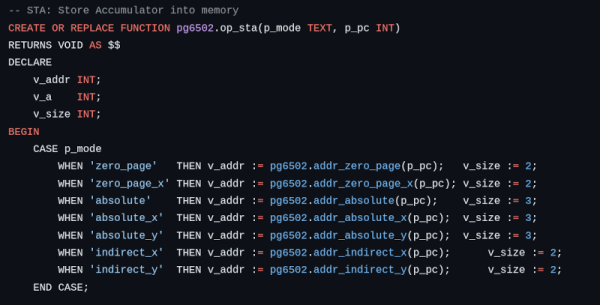

Emulating a 6502 shouldn’t be that hard on a modern computer. Maybe that’s why [lasect] decided to make it a bit harder. The PG_6502 emulator uses PostgreSQL. All the CPU resources are database tables, and all opcodes are stored procedures. Huh.

The database is pretty simple. The pg6502.cpu table has a single row that holds the registers. The pg6502.mem table has 65,536 rows, one per byte of the 64K address space. There’s also a pg6502.opcode_table that stores information about each instruction. For example, opcode 0xA9 is LDA with immediate addressing and occupies two bytes: the opcode itself and its operand.
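To get a feel for that layout without installing anything, here is a rough sketch that uses Python’s built-in sqlite3 module as a stand-in for PostgreSQL. The three table names follow the post (minus the pg6502 schema prefix), but every column name and the initial register values are our own guesses, not the project’s actual schema.

```python
import sqlite3

# SQLite stands in for PostgreSQL here; column names are assumptions.
db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE cpu (a INTEGER, x INTEGER, y INTEGER,
                      sp INTEGER, pc INTEGER, p INTEGER);
    CREATE TABLE mem (addr INTEGER PRIMARY KEY, val INTEGER NOT NULL);
    CREATE TABLE opcode_table (opcode INTEGER PRIMARY KEY, mnemonic TEXT,
                               mode TEXT, bytes INTEGER);
""")

# A single row holds every register (placeholder initial values).
db.execute("INSERT INTO cpu VALUES (0, 0, 0, 0, 0, 0)")

# 65,536 rows, one per byte of the address space.
db.executemany("INSERT INTO mem VALUES (?, 0)",
               ((addr,) for addr in range(0x10000)))

# Opcode 0xA9: LDA, immediate addressing, two bytes long.
db.execute("INSERT INTO opcode_table VALUES (?, ?, ?, ?)",
           (0xA9, "LDA", "immediate", 2))
```

Reading a byte then becomes an ordinary query, such as `SELECT val FROM mem WHERE addr = ?`.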

The pg6502.op_lda procedure grabs that information and updates the tables appropriately. For the immediate form, it loads the byte after the opcode, increments the program counter, sets the accumulator, and updates the zero and negative flags.
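Those steps are easy to follow in a few lines of Python. This is only a sketch of what an immediate LDA does on any 6502, not a translation of the project’s stored procedure: the `lda_immediate` name is made up, a dict and a bytearray stand in for the cpu and mem tables, and we assume the fetch step has already moved the program counter past the opcode byte.

```python
FLAG_Z = 0x02  # zero flag, bit 1 of the status register
FLAG_N = 0x80  # negative flag, bit 7 of the status register

def lda_immediate(cpu, mem):
    """Hypothetical helper: assumes cpu['pc'] already points at the operand."""
    value = mem[cpu["pc"]]                 # load the next byte
    cpu["pc"] = (cpu["pc"] + 1) & 0xFFFF   # increment the program counter
    cpu["a"] = value                       # set the accumulator
    # Update the flags: clear Z and N, then set whichever applies.
    p = cpu["p"] & ~(FLAG_Z | FLAG_N) & 0xFF
    if value == 0:
        p |= FLAG_Z
    if value & 0x80:
        p |= FLAG_N
    cpu["p"] = p

# Program at 0x0600: LDA #$80
mem = bytearray(0x10000)
mem[0x0600:0x0602] = bytes([0xA9, 0x80])
cpu = {"a": 0, "pc": 0x0601, "p": 0x00}
lda_immediate(cpu, mem)
```

In the database version, each of those assignments would presumably be an UPDATE against the one-row register table.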

Honestly, we’ve wondered why more people don’t use databases instead of the file system for structured data, but for us, this may be a bit much. Still, it is undoubtedly unique, and if you can read SQL, you have to admit the logic is quite clear.

We can’t throw stones. We’ve been known to do horrible emulators in spreadsheets, which is arguably an even worse idea. We aren’t the only ones.