You may not have noticed, but so-called “artificial intelligence” is slightly controversial in the arts world. Illustrators, graphic artists, visual effects (VFX) professionals: anybody who pushes pixels around is the sort of person you’d expect to hate and fear the machines that were trained on stolen work to replace them. So, when we heard in a recent video that [Niko] of Corridor Digital had released an AI VFX tool, we were interested. What does it look like when the artist is the one coding the AI?

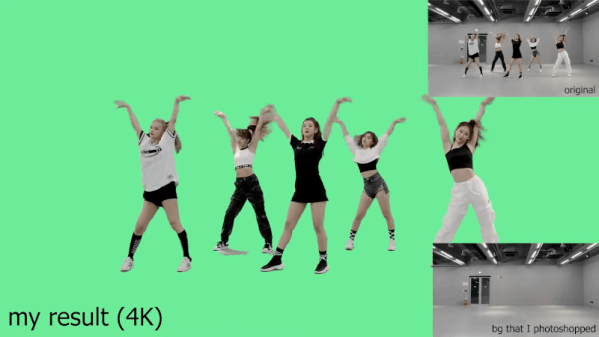

It looks amazing, both visually and conceptually. Conceptually, because it takes one of the most annoying parts of the VFX pipeline, cleaning up chroma key footage, and automates it so the artists in front of the screen can get to the fun parts of the job. That’s exactly what a tool should do: not do the job for them, but enable them to enjoy doing it, or do it better. Visually, because, as you can see in the embedded video, it works very, very well.
