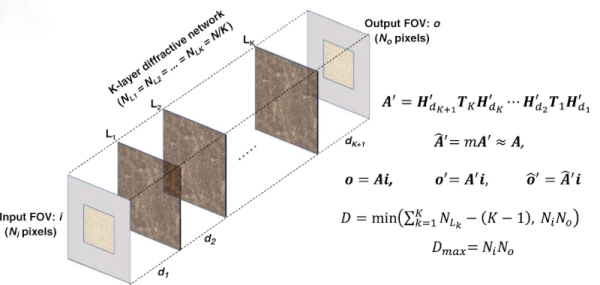

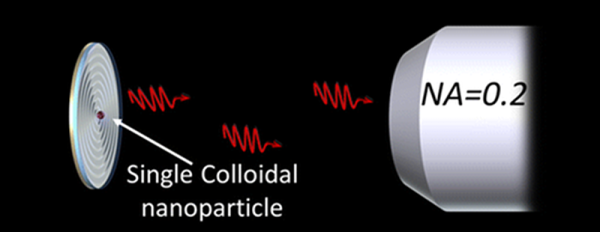

We always hear that future computers will use optical technology. But what would that look like for a general-purpose computer? German researchers explain it in a recent scientific paper. Although the DOC-II used optical processing, it still relied on some conventional electronics. The question is, how can you construct a general-purpose computer that uses only optical technology?

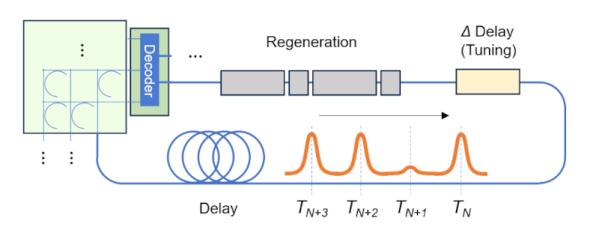

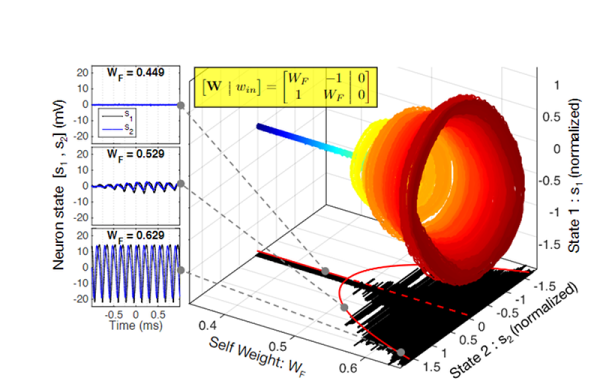

The paper outlines “Miller’s criteria” for practical optical logic gates. In particular, any optical scheme must provide outputs suitable for feeding another gate’s inputs and must also support fan-out of one output to multiple inputs. It is also desirable that each stage does not propagate signal degradation and that it isolates its outputs from its inputs. The final two criteria note that practical systems shouldn’t depend on loss for information representation, since loss isn’t reliable across paths, and, similarly, that the gates should not require high-precision adjustment to work correctly.

The paper also identifies many misconceptions about new computing devices. For example, the authors assert that while today’s general-purpose desktop-class CPUs contain billions of devices, use a data path at least 32 bits wide, and contain RAM, this isn’t necessarily true for CPUs built on a different technology. If that seems hard to believe, they make their case throughout the paper. We can’t remember the last scientific paper we read that literally posed the question, “Will it run Doom?” But this paper actually proposes it as a canonical question.
