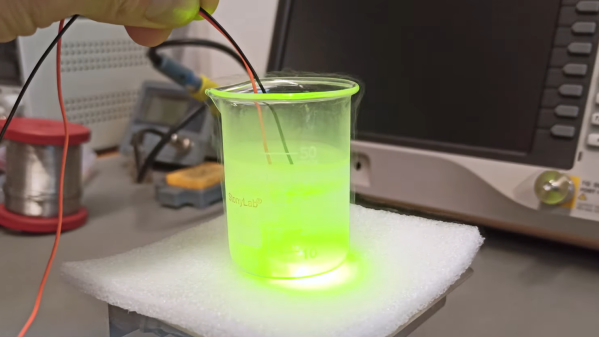

Single-photon detection is the backbone of cutting-edge applications like LiDAR, medical imaging, and secure optical communication. Miss one photon, and critical information could be lost forever. That’s where FPGA-based instrumentation comes in, delivering picosecond-level precision with zero dead time. If you are intrigued, consider sitting in on the 1-hour webinar that [Dr. Jason Ball], engineer at Liquid Instruments, will host on April 15th. You can read the announcement here.

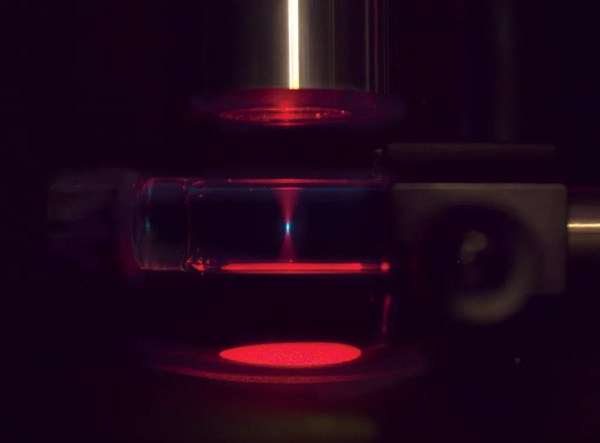

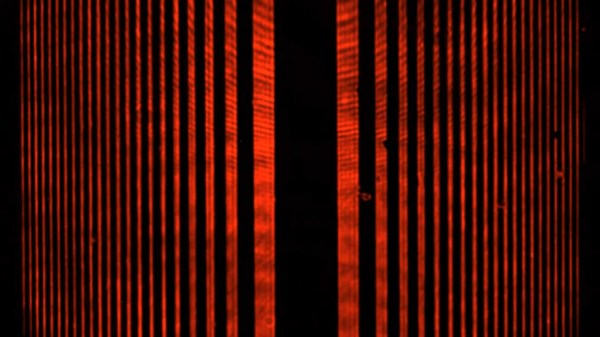

Before you sign up and move on, here’s a quick look at what’s involved. The power lies in the hardware’s flexibility and speed. It can timestamp every photon event with a staggering 10 ps resolution. That’s the time it takes light to travel about 3 mm. Unlike traditional photon counters that choke on high event rates, this FPGA-based setup is reconfigurable, tracking up to four events in parallel without missing a beat. From Hanbury Brown-Twiss experiments to decoding pulse-position modulated (PPM) data, it’s an all-in-one toolkit for photon wranglers. [Jason] will go deeper into the subject and do a few live experiments.
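To get a feel for those numbers, here is a minimal Python sketch. It is not the instrument's API or the method shown in the webinar. It first converts a time interval into the distance light covers in vacuum, then decodes PPM symbols from a list of photon timestamps. The function names, the frame layout (frames starting at t = 0, one detected photon per frame), and the slot width are all assumptions made for illustration.

```python
# Speed of light in vacuum, expressed in millimeters per picosecond.
C_MM_PER_PS = 0.299792458


def light_travel_mm(duration_ps):
    """Distance (mm) light travels in vacuum during duration_ps picoseconds."""
    return C_MM_PER_PS * duration_ps


def decode_ppm(timestamps_ps, slot_ps, slots_per_frame):
    """Map each photon timestamp to a PPM symbol (its slot index in the frame).

    Assumptions (hypothetical, not taken from the article):
      - timestamps are integer picoseconds, with frames aligned to t = 0
      - every frame holds slots_per_frame slots, each slot_ps wide
      - each timestamp is the single detected photon of its frame
    """
    frame_ps = slot_ps * slots_per_frame
    symbols = []
    for t in timestamps_ps:
        offset = t % frame_ps              # position inside the current frame
        symbols.append(offset // slot_ps)  # slot index is the symbol value
    return symbols


if __name__ == "__main__":
    print(f"10 ps of light travel: {light_travel_mm(10):.2f} mm")
    # Four frames of 4 slots, each slot 1 ns (1000 ps) wide.
    arrivals = [500, 5200, 11900, 12010]
    print(decode_ppm(arrivals, slot_ps=1000, slots_per_frame=4))
```

With 1 ns slots, a 10 ps timestamp is a hundred times finer than the slot width, which is why slot boundaries can be resolved comfortably in this toy example. A real decoder would also have to handle empty frames, dark counts, and clock recovery.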

Single photons can also be measured with photomultipliers. If exploring the possibilities of FPGAs is more your thing, consider reading this article.