![The future of healthy indoor plants, courtesy of AI. (Credit: [Liam])](https://hackaday.com/wp-content/uploads/2025/04/plantmom_test_setup_with_plant.jpg?w=400)

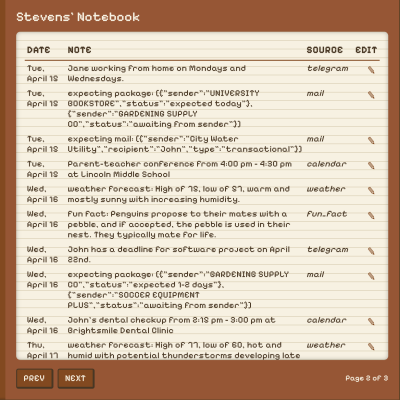

Since LLMs don’t yet come with physical appendages by default, some hardware had to be plugged together to measure parameters like light, temperature and soil moisture. Add a grow light and a water pump, and all that remained was to tell the LLM, using an extensive prompt containing Python code, what it should do (keep the plant alive) and which Python methods are available. After that, it was up to Google’s Gemma 3 to handle the rest.

To say that this resulted in a dramatic failure, along with what reads like an emotional breakdown on the part of the LLM, would be an understatement. The LLM insisted on turning the grow light on when it should be off and had the most erratic watering responses imaginable, based on completely incorrect interpretations of the ADC data that flipped dry and wet. After this episode the poor chili plant’s soil was thoroughly saturated and is still trying to dry out, while the ongoing LLM experiment, with an empty water tank, has the grow light blasting more often than a weed farm.
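To illustrate how easily dry and wet can get flipped, here is a minimal sketch of turning a raw ADC reading into a moisture percentage. It assumes a capacitive soil probe whose raw value goes up as the soil dries out, which is common but not confirmed for this build. The calibration constants and the function name `moisture_percent` are made up for illustration and are not taken from Liam's code.

```python
# Hypothetical calibration values: measure your own probe in dry air and in water.
ADC_DRY = 52000  # assumed raw reading with the probe in dry air
ADC_WET = 24000  # assumed raw reading with the probe submerged in water


def moisture_percent(raw: int, dry: int = ADC_DRY, wet: int = ADC_WET) -> float:
    """Map a raw ADC reading onto 0 (bone dry) .. 100 (soaked), clamped."""
    if dry == wet:
        raise ValueError("dry and wet calibration points must differ")
    # The sign of (dry - wet) takes care of the sensor's direction, so the
    # same formula works whether the raw value rises or falls with moisture.
    fraction = (dry - raw) / (dry - wet)
    return max(0.0, min(1.0, fraction)) * 100.0
```

Pinning the direction down once in a calibrated helper like this means nothing downstream has to guess whether a big number means thirsty or drowning.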

So far it seems that the humble state machine’s job is still safe from being taken over by ‘AI’, and not even brown-thumbed folk can kill plants this efficiently.
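For comparison, the kind of humble state machine in question might look something like the sketch below. This is not the project's code: the class name `PlantController`, the moisture thresholds and the light schedule are all assumptions picked for illustration. The pump uses two thresholds (hysteresis) so it doesn't chatter around a single set point, and the grow light simply follows the clock.

```python
from enum import Enum, auto


class Pump(Enum):
    IDLE = auto()
    WATERING = auto()


class PlantController:
    """Two-state watering controller with hysteresis, plus a clock-driven light."""

    def __init__(self, dry_below=30.0, wet_above=60.0,
                 light_on_hour=7, light_off_hour=21):
        # All defaults are hypothetical; tune them for the actual plant.
        self.dry_below = dry_below
        self.wet_above = wet_above
        self.light_on_hour = light_on_hour
        self.light_off_hour = light_off_hour
        self.state = Pump.IDLE

    def step(self, moisture_pct, hour):
        """Advance one tick and return (pump_on, light_on)."""
        if self.state is Pump.IDLE and moisture_pct < self.dry_below:
            self.state = Pump.WATERING  # soil too dry: start watering
        elif self.state is Pump.WATERING and moisture_pct >= self.wet_above:
            self.state = Pump.IDLE      # soil wet enough: stop watering
        light_on = self.light_on_hour <= hour < self.light_off_hour
        return self.state is Pump.WATERING, light_on
```

Calling `step()` once per sensor reading is all it takes. A real build would probably also want a maximum pump run time and an empty-tank check, neither of which is covered here.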