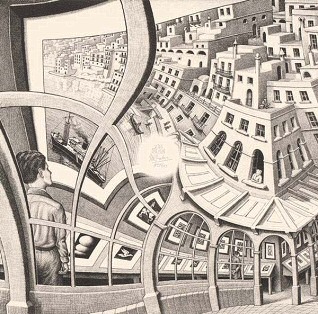

Self-similar images are rather common, which are images in which the same image is repeated on a smaller scale somewhere within the image that one is looking at, something which is also referred to as the Droste effect. Yet in [MC Escher]’s 1956 Prentententoonstelling (‘picture gallery’) drawing, this self-similar image is somehow also the foreground image, from where it just keeps looping around in an endless dance. How this effect is accomplished and what the mathematical transformations behind it are and how they work is explained in a recent video by [3Blue1Brown].

Self-similar images are rather common, which are images in which the same image is repeated on a smaller scale somewhere within the image that one is looking at, something which is also referred to as the Droste effect. Yet in [MC Escher]’s 1956 Prentententoonstelling (‘picture gallery’) drawing, this self-similar image is somehow also the foreground image, from where it just keeps looping around in an endless dance. How this effect is accomplished and what the mathematical transformations behind it are and how they work is explained in a recent video by [3Blue1Brown].

The video uses previous work by [B. de Smit] and [H. W. Lenstra Jr] whose 2003 paper detailed the underlying transformations, as well as the mystery of the center of the work.

Although [MC Escher] created a transformation grid with square rectangles into which a non-transformed image could be copied verbatim, he left the center as a void with just his signature in it, leaving many to guess how one might be able to fill in this area with something that made sense. In the work by [Smit] et al. it was postulated that by treating the work as having been drawn on an elliptic curve over a field of complex numbers this might be possible.

While the transformation is simple enough at first, with just four rectangles at different zoom levels to make up the corners, the trick is to connect these rectangles. Using the demonstrated complex method this can be automated, with the central void now filled in and creating its own Droste effect. This once again demonstrates the beautifully complex mathematics in [Escher]’s works, despite him never having had any formal mathematical education.

Continue reading “The Complex Transformations Underlying MC Escher’s Works”