There are a lot of single-board computers on the market these days, so you can be forgiven if you missed the LuckFox Lyra. Its main claim to fame seems to be that it shares the Pi Pico’s 51 mm x 21 mm footprint while being powerful enough to run a full Linux system, or at least, it was. Now its claim to fame is as a device you can interact with using no peripherals at all, accessing the terminal via Morse code. That’s thanks to [Gabriel Broussard Korr] and his Morstdin project, which should run on just about anything POSIX-compliant, by dint of being a clever sh script at heart.

Of course, with most POSIX-compliant systems, you’ll need to alter the script to account for some kind of peripheral to do the Morse I/O. Not so on the LuckFox Lyra, which has a built-in LED and a single usable button. It actually has two buttons, but one of them is RESET and you can’t use that for anything but its intended purpose. The BOOT button, on the other hand, becomes user input after the system has started. One button, one LED? It’s almost like LuckFox designed this SBC for Morse! Admittedly we’d prefer an audible output, but adding a buzzer would detract from the purity of this implementation.
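To give a feel for what driving a single LED in Morse involves, here is a minimal sketch in Python. It is not taken from Morstdin, which is an sh script; the function name `led_schedule` and the `(led_on, units)` output format are our own assumptions. It only applies the standard International Morse timing rules: a dot is one unit, a dash is three, with gaps of one, three, and seven units between elements, letters, and words.

```python
# Illustrative sketch only: not Morstdin's code. The names below are
# hypothetical; the table and timing follow standard International Morse.
MORSE = {
    "a": ".-", "b": "-...", "c": "-.-.", "d": "-..", "e": ".",
    "f": "..-.", "g": "--.", "h": "....", "i": "..", "j": ".---",
    "k": "-.-", "l": ".-..", "m": "--", "n": "-.", "o": "---",
    "p": ".--.", "q": "--.-", "r": ".-.", "s": "...", "t": "-",
    "u": "..-", "v": "...-", "w": ".--", "x": "-..-", "y": "-.--",
    "z": "--..",
    "0": "-----", "1": ".----", "2": "..---", "3": "...--", "4": "....-",
    "5": ".....", "6": "-....", "7": "--...", "8": "---..", "9": "----.",
}


def led_schedule(text):
    """Return a list of (led_on, units) pairs for blinking `text`.

    Dot = 1 unit on, dash = 3 units on, 1 unit off between elements,
    3 units off between letters, 7 units off between words.
    Characters with no table entry are silently skipped.
    """
    schedule = []
    for w_index, word in enumerate(text.lower().split()):
        if w_index > 0:
            schedule.append((False, 7))  # gap between words
        codes = [MORSE[c] for c in word if c in MORSE]
        for l_index, code in enumerate(codes):
            if l_index > 0:
                schedule.append((False, 3))  # gap between letters
            for e_index, element in enumerate(code):
                if e_index > 0:
                    schedule.append((False, 1))  # gap between dots/dashes
                schedule.append((True, 1 if element == "." else 3))
    return schedule
```

Multiplying each `units` value by a chosen unit length (say, 100 ms) would give the actual on and off durations; how the LED itself gets toggled depends on the board and is left out here.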

He’s had to extend the code, of course, since Samuel Morse did not expect all of the special characters you’re likely to encounter on the terminal. The resultant Programmer’s Morse, or PMorse, is a straightforward extension, but [Gabriel] didn’t stop there: he’s also added a set of commands he describes as “vim-like” to make using this headless device easier by doing things like deleting whole words or flashing the line you’re working on so you can make sure you haven’t made any errors.
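To see why an extension is needed at all, the sketch below checks a shell command for characters that standard Morse has no code for. It does not reproduce any of PMorse's actual assignments, which we have not listed here. The function name `uncovered` is hypothetical, and the punctuation set is our reading of the characters covered by the ITU's International Morse recommendation, so treat it as an assumption rather than a reference.

```python
import string

# Assumption: punctuation with a code in International Morse (ITU).
# Letters and digits are covered too; Morse does not distinguish case.
ITU_PUNCTUATION = set(".,:?'-/()\"=+@")
COVERED = set(string.ascii_lowercase) | set(string.digits) | ITU_PUNCTUATION


def uncovered(command):
    """Return the sorted, distinct characters in `command` that have no
    standard Morse code. Whitespace is ignored, since Morse expresses
    it as a timing gap rather than a symbol."""
    missing = {c for c in command.lower() if not c.isspace() and c not in COVERED}
    return sorted(missing)


if __name__ == "__main__":
    # Pipes and redirections are everyday shell syntax with no Morse code.
    print(uncovered("ls -la | grep foo > out.txt"))
```

Even a routine pipeline trips over `|` and `>`, and things only get worse once `$`, `~`, `{`, or `*` show up, which is the gap an extended code has to fill.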

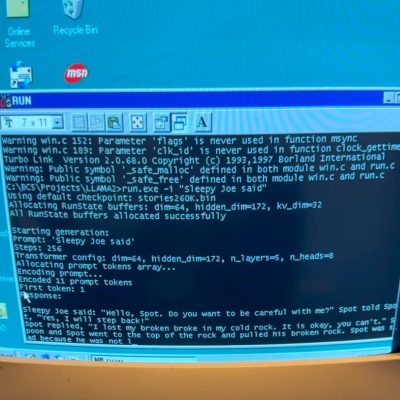

If that wasn’t enough, he’s also put an LLM on it. Because in the Year of Our Lord 2026, you apparently cannot escape the frakkin’ toasters by jumping your rag-tag fleet into the 128 MB of RAM on this tiny SBC. Still, his inclusion of llama.cpp does add one thing to the project: it can now claim to be the world’s smallest stand-alone chatbot. It’s also the only one that speaks Morse. That’s got to be worth some bragging rights.

[Gabriel] may have a thing for physically tiny Linux devices: his last project, which we featured, was about using Linux on old smartphones with Termux.

Thanks to [Gabriel] for the tip!

Header image credit: LuckFox.