For those who haven’t been following along, [BPS.space] aka [Joe] is on a journey to launch a home-built rocket past the line that officially marks the start of outer space. But one does not simply launch a rocket to outer space on the first try. The process is long and involves not only building a series of rockets, but designing and building propellant mixtures, solving aerodynamic problems, gaining several model rocket certifications along the way, and a whole host of other steps. He’s also documenting the entire process on video, which involves some custom camera work like this rocket selfie camera which will take an image of his rockets at apogee.

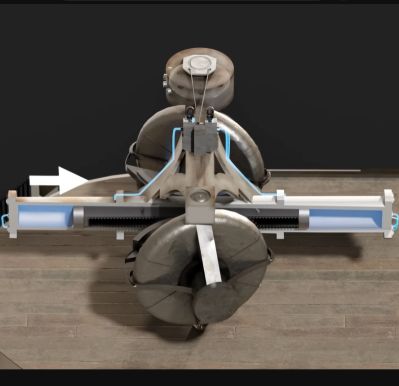

As with most things in high-power rocketry, extremely tiny problems have a way of causing catastrophic failure, so every detail needs to be considered and planned for in the final design. For a camera that needs to jettison itself from the rocket at a precise moment after experiencing incredible forces, this is a complicated problem to solve. The initial design involved building a sled for a small deconstructed GoPro which uses springs and a servo to launch itself out of the rocket. The major problem with that design is that even the smallest torque on the sled will cause the camera to point in a random direction by the time it’s far enough from the rocket to take a picture. [Joe] tried a number of design iterations but could not get these torques to vanish.

One of the design limitations with this camera is that it won’t have any sort of parachute or tether to the rocket, so it will hit the ground at its terminal velocity. To keep that velocity down and improve the footage’s chances of surviving, the mass has to stay low. Eventually he settled on a semi-active control system by mounting a brass weight on a small motor, giving the camera module enough stability to stay pointed at the rocket long enough to take the video. Even though it hasn’t flown yet, admitting his first design wasn’t working and compromising on this solution, which adds a bit of mass, seems to be a good design change. We’ve been following along with his entire process so be sure to check out his actual rocket motor builds and teardowns as well.
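
To see why mass matters for the impact speed, here is a minimal sketch using the standard drag-equation estimate of terminal velocity, v = sqrt(2mg / (ρ·Cd·A)). The masses, drag coefficient, and frontal area below are hypothetical placeholders for illustration, not measurements from [Joe]’s camera module, and the function name is our own.

```python
import math

def terminal_velocity(mass_kg, drag_coefficient, frontal_area_m2,
                      air_density=1.225, g=9.81):
    """Estimate terminal velocity (m/s) from the drag equation.

    At terminal velocity, weight equals drag:
        m * g = 0.5 * rho * v^2 * Cd * A
    Defaults assume sea-level air density and standard gravity.
    """
    return math.sqrt(
        2 * mass_kg * g / (air_density * drag_coefficient * frontal_area_m2)
    )

# Hypothetical numbers, chosen only to show the trend.
CD = 1.0        # assumed drag coefficient for a tumbling, blunt module
AREA = 0.002    # assumed frontal area in square meters

light = terminal_velocity(0.10, CD, AREA)   # assumed 100 g module
heavy = terminal_velocity(0.20, CD, AREA)   # same module with mass doubled

print(f"light module: {light:.1f} m/s")
print(f"heavy module: {heavy:.1f} m/s")
print(f"ratio: {heavy / light:.3f}")
```

Under this model, terminal velocity scales with the square root of mass, so doubling the mass raises the impact speed by roughly 41 percent rather than doubling it. That helps explain why a small brass weight can be an acceptable trade for stability.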
