In the early days of aviation, pilots or their navigators used a plethora of tools to solve common navigation and piloting problems. There was definitely a need for some kind of computing aid that could replace slide rules, tables, and tedious dead-reckoning computations. This would become even more important during World War II, when there was a massive push to quickly train young men to be pilots.

Today, we’d whip up some sort of computer device, but in the 1930s, computers weren’t something you could cram into a plane, even if you had one. For example, the Mark 1 Fire Control Computer of World War II was 3,000 pounds of gears and motors.

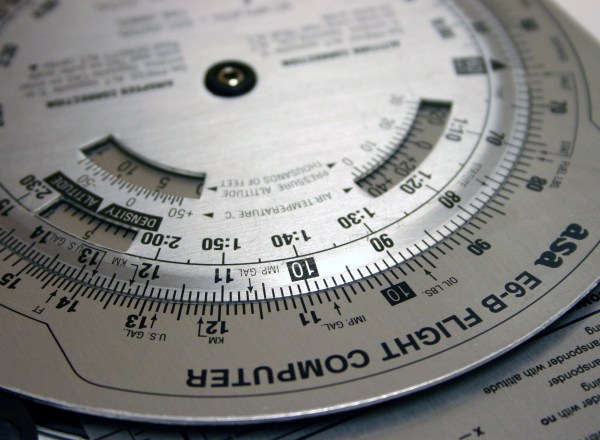

A flight computer is made to answer flight questions like “How many pounds of fuel do I need for another hour of flying time?” or “How do I adjust my course if I have a particular crosswind?”
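To make those two questions concrete, here is a rough Python sketch of the arithmetic involved. It is not how the slide rule itself works internally; it is just the standard wind-triangle trigonometry and a fuel multiplication. The function names, parameters, and the convention that wind direction is where the wind blows *from* are all assumptions made for illustration. Speeds can be in any unit as long as they match.

```python
import math

def wind_correction(true_airspeed, course_deg, wind_from_deg, wind_speed):
    """Return (heading_deg, ground_speed) needed to hold a course over the ground.

    Hypothetical helper: wind_from_deg is the direction the wind blows FROM.
    """
    # Angle between the wind's origin direction and the desired course.
    rel = math.radians(wind_from_deg - course_deg)
    # Crosswind component over airspeed is the sine of the correction angle.
    ratio = wind_speed * math.sin(rel) / true_airspeed
    if abs(ratio) > 1:
        raise ValueError("wind too strong to hold this course")
    wca = math.asin(ratio)
    # Turn into the wind by the correction angle.
    heading = (course_deg + math.degrees(wca)) % 360
    # Airspeed along the course, minus the headwind component.
    ground_speed = true_airspeed * math.cos(wca) - wind_speed * math.cos(rel)
    return heading, ground_speed

def fuel_required(burn_rate_per_hour, hours):
    """Fuel needed for a given flying time at a constant burn rate."""
    return burn_rate_per_hour * hours

if __name__ == "__main__":
    heading, gs = wind_correction(100, 0, 90, 20)
    print(f"heading {heading:.1f} deg, ground speed {gs:.1f}")
    print(f"fuel for 1.5 h at 60 lb/h: {fuel_required(60, 1.5)} lb")
```

With a 100-knot airspeed, a course due north, and a 20-knot wind from the east, this gives a heading of roughly 11.5 degrees and a ground speed of about 98 knots.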

History

There was a rash of flight computers starting in the 1920s that were essentially specialized slide rules. The most popular one appeared in the late 1930s. Philip Dalton’s circular slide rule was cheap to produce and easy to use. As you’ll see, it is more than just an ordinary slide rule. Keep in mind, these were not computers in the sense we think of today. They were simple slide rules that easily did specialized math useful to pilots.

Dalton actually developed a number of computers. The popular Model B appeared in 1933, and there were refinements leading to additional models. The Mark VII was very popular. Even Fred Noonan, Amelia Earhart’s navigator, used a Mark VII.