Although Windows CE doesn’t use the NT kernel, it’s similarly designed to run on a wide variety of system architectures. Since the Nintendo 64 uses a MIPS CPU, it should in principle be able to run either kernel. You might assume that the N64’s rather limited specs are a bit of a problem, but fortunately Windows CE is designed to run on a digital potato, and requires only 4 MB of RAM. Since that just so happens to be what the N64 has under the hood, [Throaty Mumbo] was optimistic about getting Windows CE running on the 1990s game console.

The idea for this project came when [Throaty] was tinkering with an IBM WorkPad z50 laptop, which uses almost the same CPU as the N64 and also runs Windows CE. Although said laptop is probably a far more practical platform to run Windows on, that didn’t mean the N64 wouldn’t be a fun challenge.

Since CE was intended to be customized by companies for their own embedded hardware, you can use an official SDK, such as Microsoft Windows CE 2.11 Platform Builder. Making Windows CE 2.11 run on an N64 thus involves creating a board-specific configuration and compiling it against said SDK.

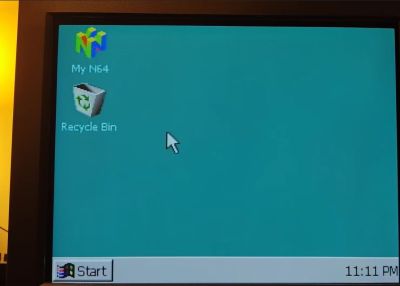

If you want to give it a shot yourself, the entire project is available on GitHub, which is also where you’ll find most of the technical details. When using a flash cart such as the EverDrive, you can also put applications on the SD card and run them from within the Windows GUI. You’ll still be limited by the N64 hardware, but otherwise the experience is very smooth, as the video below demonstrates.