Most of the time, you’ll know where your cats are: asleep on the bed about 23.5 hours a day, and eating or pooping the rest of the time. But some cats are more active than others, so there are commercial options for those who want to keep tabs on their pet. Unfortunately, [Sahas Chitlange] didn’t like any of them, so he designed and built his own open source version: FindMyCat.io.

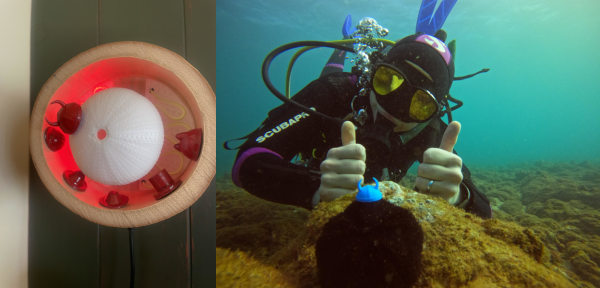

The system is in two parts: a module that fits onto a cat collar, and a home station that, well, stays at home. It offers several tracking modes. In home mode, the home station signals the collar every 10 seconds, and the collar stays in deep sleep most of the time. If the collar stops receiving the home station’s signal, it switches to ping mode, where it waits for a request from the FindMyCat app over its LTE-M connection and then reports its location.
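The article doesn’t describe the firmware logic for the home/ping switch, but the behavior amounts to a simple watchdog. Here is a minimal sketch of that idea, not the project’s actual code: the 10-second beacon period comes from the article, while the class and method names and the “three missed beacons” threshold are hypothetical.

```python
from enum import Enum, auto

# From the article: the home station signals the collar every 10 seconds.
BEACON_PERIOD_S = 10.0
# Hypothetical: how many missed beacons trigger ping mode is an assumption.
MISSED_BEACONS_BEFORE_PING = 3


class CollarMode(Enum):
    HOME = auto()  # home station beacons are arriving
    PING = auto()  # away from home: wait for requests over LTE-M


class CollarModeTracker:
    """Hypothetical watchdog choosing between home mode and ping mode."""

    def __init__(self, now_s: float) -> None:
        self.mode = CollarMode.HOME
        self.last_beacon_s = now_s

    def on_beacon(self, now_s: float) -> None:
        # Any beacon from the home station puts the collar back in home mode.
        self.last_beacon_s = now_s
        self.mode = CollarMode.HOME

    def on_wake(self, now_s: float) -> CollarMode:
        # Called each time the collar wakes from deep sleep; if too long has
        # passed since the last beacon, assume the cat has left home.
        timeout_s = BEACON_PERIOD_S * MISSED_BEACONS_BEFORE_PING
        if now_s - self.last_beacon_s > timeout_s:
            self.mode = CollarMode.PING
        return self.mode


tracker = CollarModeTracker(now_s=0.0)
tracker.on_beacon(10.0)
print(tracker.on_wake(20.0))  # CollarMode.HOME (10 s since last beacon)
print(tracker.on_wake(45.0))  # CollarMode.PING (35 s > 30 s timeout)
```

Keying the decision on elapsed time since the last beacon, rather than on any single missed beacon, keeps one dropped radio packet from kicking the collar into the more power-hungry LTE-M mode.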


Finally, the app can put the collar into Lost Kitteh mode, where the collar sends its location to the app either every seven minutes or every thirty seconds. The collar also supports direction finding, using the ultra-wideband (UWB) hardware in recent iPhones to show you which way the tracked cat is and how far away it is.
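To make the gap between the two Lost Kitteh intervals concrete, the sketch below counts how many location reports each setting produces in an hour. The seven-minute and thirty-second intervals are from the article; the “slow”/“fast” labels and the assumption that a first fix goes out immediately at t = 0 are hypothetical.

```python
# The two Lost Kitteh reporting intervals given in the article. The labels
# "slow" and "fast" are hypothetical, as is sending a first fix at t = 0.
LOST_MODE_INTERVALS_S = {"slow": 7 * 60, "fast": 30}


def report_times(interval_s: int, duration_s: int) -> list[int]:
    """Seconds after entering lost mode at which a location fix is sent."""
    if interval_s <= 0:
        raise ValueError("interval must be positive")
    return list(range(0, duration_s, interval_s))


def reports_per_hour(interval_s: int) -> int:
    """Number of location reports sent during the first hour of lost mode."""
    return len(report_times(interval_s, 3600))


for name, interval in LOST_MODE_INTERVALS_S.items():
    print(f"{name}: every {interval} s -> {reports_per_hour(interval)} reports/hour")
# slow: every 420 s -> 9 reports/hour
# fast: every 30 s -> 120 reports/hour
```

Each report means waking the GPS and the LTE-M modem, so the faster setting trades battery life for a much finer-grained trail.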

The collar is built around a Nordic Semiconductor nRF9160, a System in Package (SiP) that does most of the heavy lifting, since it includes GPS, an LTE-M modem, and an ARM processor. One interesting choice here: rather than laying out his own antennas on the PCB, [Sahas] uses an Ignion NN03-310, an off-the-shelf antenna that is already qualified for LTE-M use. That means this system can be connected to almost any LTE-M network without getting yelled at for using unqualified hardware and making the local cell towers explode.

The collar also includes a DWM3001CDK ultra-wideband (UWB) module for the locator feature. The companion app uses this and Apple’s UWB support to show which direction the cat is in and how far away it is. The app isn’t in the Apple App Store yet, so you’ll need an Apple Developer account to use it. We’d love to hear from anyone who takes it for a test drive with their own pet.
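How does UWB get a distance at all? The general principle is time-of-flight ranging: time a radio exchange precisely and multiply by the speed of light. The sketch below shows single-sided two-way ranging as an illustration of that principle only; on the iPhone side the ranging is presumably handled by Apple’s UWB frameworks rather than app code, and the function name and the 200 µs reply delay are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def ss_twr_distance_m(t_round_s: float, t_reply_s: float) -> float:
    """Single-sided two-way ranging (illustrative, not the project's code).

    t_round_s: time from sending a poll to receiving the response,
               measured on the initiator's clock.
    t_reply_s: turnaround delay inside the responder, on its own clock.
    """
    if t_reply_s > t_round_s:
        raise ValueError("reply delay cannot exceed round-trip time")
    # The signal crosses the gap twice, so halve what is left after
    # subtracting the responder's processing delay.
    time_of_flight_s = (t_round_s - t_reply_s) / 2.0
    return SPEED_OF_LIGHT_M_PER_S * time_of_flight_s


# Example: a 3 m separation adds about 20 ns of round-trip flight time
# on top of a made-up 200 microsecond reply delay.
t_reply = 200e-6
t_round = t_reply + 2 * 3.0 / SPEED_OF_LIGHT_M_PER_S
print(f"{ss_twr_distance_m(t_round, t_reply):.2f} m")  # 3.00 m
```

Because a nanosecond of timing error is roughly 30 cm of distance error, real UWB stacks typically use refinements such as double-sided ranging to cancel out clock drift between the two devices.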
