Neural interfaces have made great strides in recent years, but still suffer from poor longevity and resolution. Researchers at the University of Cambridge have developed a biohybrid implant to improve the situation.

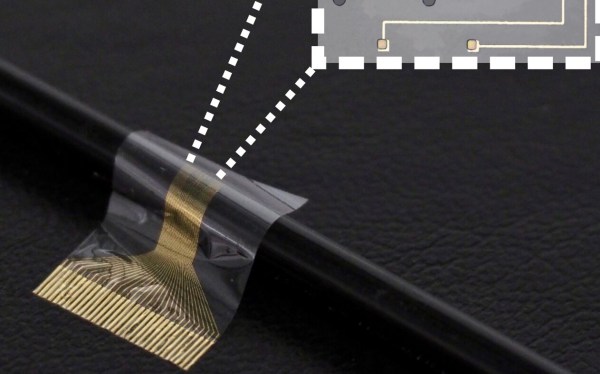

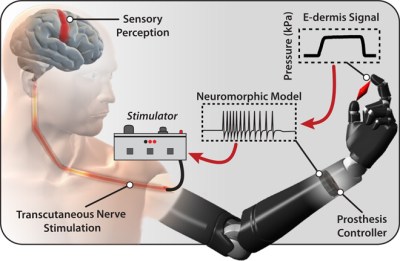

As we’ve seen before, interfacing electronics and biological systems is no simple feat. Bodies tend to reject foreign objects, and transplanted nerves can have difficulty assuming new roles. By combining flexible electronics and induced pluripotent stem cells into a single device, the researchers developed a high-resolution neural interface that can selectively bind to different neuron types. That selectivity may allow for better separation of sensation and motor signals in future prostheses.

As is typically the case with new research, the only patients to benefit so far are rats, and only on the timescale of the study (28 days). That said, this is a promising step forward for regenerative neurology.

We’re no strangers to bioengineering here. Check out how you can heal faster with electronic bandages or build a DIY vibrotactile stimulator for Coordinated Reset Stimulation (CRS).

(via Interesting Engineering)