As Tom Nardi mentioned in this week’s podcast, the Northeast US is pretty apocalyptically socked in with smoke from wildfires in Canada. It’s what we here in Idaho call “August,” so we have plenty of sympathy for what they’re going through out there. People are turning to technology to ease their breathing burden, with reports that Tesla drivers are activating the “Bioweapon Defense Mode” of their car’s HVAC system. We had no idea this mode existed, honestly, and it sounds pretty cool — the cabin air system apparently shuts off outside air intake and runs the fan at full speed to keep the cabin under positive pressure, forcing particulates — or, you know, anthrax — to stay outside. We understand there’s a HEPA filter in the mix too, which probably does a nice job of cleaning up the air in the cabin. It’s a clever idea, and hats off to Tesla for including this mode, although perhaps the name is a little silly. Here’s hoping it’s not one of those subscription services that can get turned off at a moment’s notice, though.

Raspberry Pi 4 Articles

Surf’s Up, A Styrofoam Ball Rides The Waves To Create A Volumetric Display

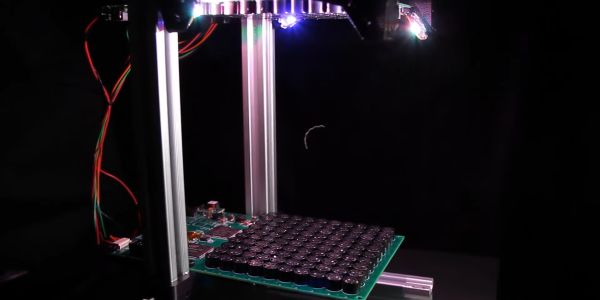

We are big fans of POV displays, particularly ones that move into 3D. To do so, they need to move even faster than their 2D cousins. [danfoisy] built a volumetric display that doesn’t move LEDs or any other digital display through space, or project light onto a moving surface. All that moves here is a bead of styrofoam, and it does so at up to 1 meter per second. Having low mass certainly helps when trying to hit the brakes, but we’re getting ahead of ourselves.
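
To put the 1 meter per second figure in perspective, here is a rough Python sketch of the time budget. It assumes a persistence-of-vision window of about 0.1 s (a commonly quoted ballpark, not a number from the project) and constant speed, ignoring the braking and acceleration at corners; `trace_time_s` and `fits_pov` are hypothetical helpers.

```python
import math

# Assumed persistence-of-vision window (a common ballpark, not a project figure).
POV_WINDOW_S = 0.1

def trace_time_s(path_length_mm: float, speed_m_per_s: float = 1.0) -> float:
    """Time for the bead to travel a path once at constant speed."""
    return (path_length_mm / 1000.0) / speed_m_per_s

def fits_pov(path_length_mm: float, speed_m_per_s: float = 1.0) -> bool:
    """True if the whole path can be redrawn within the assumed POV window."""
    return trace_time_s(path_length_mm, speed_m_per_s) <= POV_WINDOW_S

circle_mm = math.pi * 20.0   # 20 mm diameter circle, about 63 mm of path
cube_mm = 12 * 50.0          # 12 edges of a 50 mm wireframe cube (a lower bound:
                             # one continuous stroke must retrace some edges)
print(f"circle: {trace_time_s(circle_mm):.3f} s, fits: {fits_pov(circle_mm)}")
print(f"cube:   {trace_time_s(cube_mm):.3f} s, fits: {fits_pov(cube_mm)}")
```

Under those assumptions a small circle can be redrawn inside the window while a 50 mm wireframe cube cannot, which is why every extra bit of speed matters.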

[danfoisy] and his son built an acoustic levitator kit from [PhysicsGirl], which inspired the youngster’s science fair project on sound. See the video by [PhysicsGirl] for an explanation of levitation in a standing wave. [danfoisy] then happened upon a paper in the journal Nature about a volumetric display that expanded this one-dimensional standing wave into three dimensions. The paper described using a phased array of ultrasonic transducers, each driven by a 40 kHz waveform.
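
The length scale of that standing wave follows directly from the 40 kHz drive frequency. A minimal sketch, assuming sound travels at about 343 m/s (air near room temperature):

```python
# Assumption: speed of sound in air near room temperature.
SPEED_OF_SOUND_M_S = 343.0
# Drive frequency of the transducers, as described in the paper.
DRIVE_FREQ_HZ = 40_000.0

def wavelength_mm(freq_hz: float = DRIVE_FREQ_HZ, c: float = SPEED_OF_SOUND_M_S) -> float:
    """Acoustic wavelength in millimetres."""
    return c / freq_hz * 1000.0

def node_spacing_mm(freq_hz: float = DRIVE_FREQ_HZ, c: float = SPEED_OF_SOUND_M_S) -> float:
    """Distance between adjacent pressure nodes: half a wavelength."""
    return wavelength_mm(freq_hz, c) / 2.0

print(f"wavelength:   {wavelength_mm():.2f} mm")
print(f"node spacing: {node_spacing_mm():.2f} mm")
```

That gives a wavelength of roughly 8.6 mm, so adjacent pressure nodes, where small beads tend to settle in a simple standing wave, sit a little over 4 mm apart.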

After reading the paper and determining how to recreate the experiment, [danfoisy] built a 2D simulation and then another in 3D to validate the approach. We are impressed with the level of physics and programming on display, and that the same code carried through to the build.
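
For readers who want to try something similar, here is a minimal 2D sketch of the focusing idea behind a phased array; it is not [danfoisy]’s code. It treats each transducer as an ideal point source with 1/r spreading, assumes a 10-element line at a 10 mm pitch, and picks per-element phases so that every wave arrives at the target in phase. A working levitation trap needs an additional phase pattern on top of plain focusing, which this sketch leaves out.

```python
import cmath
import math

C = 343.0                  # speed of sound in m/s (assumed)
F = 40_000.0               # drive frequency in Hz
K = 2 * math.pi * F / C    # wavenumber in rad/m

def line_array(n: int = 10, pitch: float = 0.01) -> list[tuple[float, float]]:
    """Positions (m) of n transducers on y = 0, centred on x = 0.
    The 10 mm pitch is an assumption, not a figure from the build."""
    offset = (n - 1) * pitch / 2
    return [(i * pitch - offset, 0.0) for i in range(n)]

def focus_phases(sources, focus) -> list[float]:
    """Phase for each emitter so every wave arrives at `focus` in phase."""
    return [K * math.dist(s, focus) for s in sources]

def pressure(point, sources, phases) -> complex:
    """Complex pressure at `point`: a sum of ideal point sources with 1/r
    spreading (a simplification; real transducers are directional)."""
    total = 0j
    for s, phi in zip(sources, phases):
        r = math.dist(s, point)
        total += cmath.exp(1j * (phi - K * r)) / r
    return total

if __name__ == "__main__":
    src = line_array()
    target = (0.02, 0.08)    # 20 mm to the side, 80 mm above the array
    ph = focus_phases(src, target)
    # Crude 2D "simulation": |p| along a horizontal line through the target.
    for x_mm in range(-40, 41, 10):
        p = pressure((x_mm / 1000, target[1]), src, ph)
        print(f"x = {x_mm:+4d} mm  |p| = {abs(p):7.1f}")
```

The printed magnitudes should peak near x = +20 mm; moving the target and recomputing the phases is, in essence, how a phased array steers its focus.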

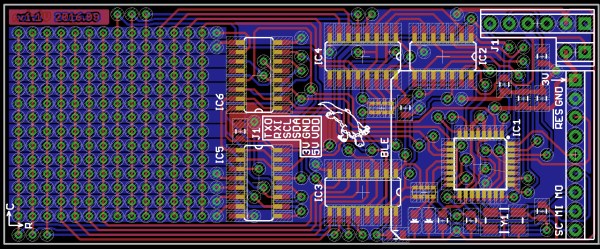

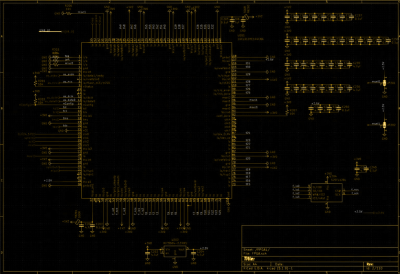

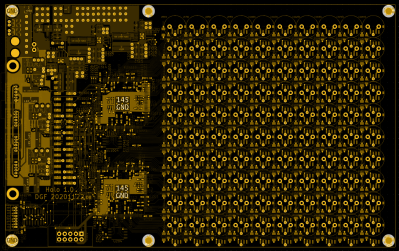

[danfoisy] didn’t stop with the simulations, designing and building control boards for each 100 x 100 mm, 10 x 10 grid of transducers. Each grid is driven by two Intel Cyclone FPGAs, and all of them are fed 3D shapes by a Raspberry Pi Zero W. The display volume is 100 mm x 100 mm x 145 mm, and the foam ball’s position can nominally be set to within 0.01 mm, though there is currently considerable distortion in the positioning.
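
A quick back-of-the-envelope check shows why fine positioning puts demands on the drive electronics. In the simplest model of two opposed sources forming a standing wave, shifting their relative phase by Δφ moves every node by Δφ/(2k), where k = 2π/λ. The sketch below (our simplified model, not a description of the actual firmware) converts a 0.01 mm step into a phase step and an equivalent timing step at 40 kHz.

```python
import math

SPEED_OF_SOUND_M_S = 343.0   # assumed, air near room temperature
DRIVE_FREQ_HZ = 40_000.0
WAVELENGTH_M = SPEED_OF_SOUND_M_S / DRIVE_FREQ_HZ

def phase_for_shift(dx_m: float) -> float:
    """Relative phase change (rad) between two opposed sources that moves the
    standing-wave nodes by dx_m. Nodes satisfy k*x - dphi/2 = pi/2 + n*pi,
    so dx = dphi / (2k) and dphi = 2k * dx."""
    k = 2 * math.pi / WAVELENGTH_M
    return 2 * k * dx_m

def delay_for_phase(dphi_rad: float) -> float:
    """Time delay (s) equivalent to a phase step at the drive frequency."""
    return dphi_rad / (2 * math.pi * DRIVE_FREQ_HZ)

dphi = phase_for_shift(0.01e-3)   # a 0.01 mm step
dt = delay_for_phase(dphi)
print(f"phase step: {math.degrees(dphi):.2f} deg")
print(f"delay step: {dt * 1e9:.1f} ns, i.e. a clock of about {1 / dt / 1e6:.1f} MHz")
```

The result works out to about 0.84° of phase, or roughly 58 ns of delay (equivalently 2·Δx/c), which hints at why clocked logic such as an FPGA is a natural fit for generating the transducer signals.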

Check out the video after the break to see the process of simulating, designing, and testing the display. There are a number of tips along the way, including how to test for the polarity of the transducers and the use of a Python script to place the grids of transducers and drivers in KiCad.
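
Placing a hundred transducers by hand is tedious, which is where scripting KiCad pays off. A minimal sketch, not [danfoisy]’s script: the reference designators (`T1` to `T100`), the 10 mm pitch, and the board origin are hypothetical, and `apply_to_board` targets the `pcbnew` API of KiCad 7 and later (`VECTOR2I` positions), to be run from KiCad’s scripting console.

```python
def grid_positions(rows: int = 10, cols: int = 10, pitch_mm: float = 10.0,
                   origin_mm: tuple[float, float] = (50.0, 50.0),
                   prefix: str = "T") -> dict[str, tuple[float, float]]:
    """Map hypothetical reference designators to (x, y) centres in mm,
    numbered row by row starting at prefix + "1"."""
    ox, oy = origin_mm
    positions = {}
    for r in range(rows):
        for c in range(cols):
            ref = f"{prefix}{r * cols + c + 1}"
            positions[ref] = (ox + c * pitch_mm, oy + r * pitch_mm)
    return positions

def apply_to_board(positions: dict[str, tuple[float, float]]) -> None:
    """Move footprints on the open board (KiCad 7+ scripting console)."""
    import pcbnew  # only available inside KiCad's Python environment
    board = pcbnew.GetBoard()
    for ref, (x, y) in positions.items():
        fp = board.FindFootprintByReference(ref)
        if fp is None:
            print(f"skipping {ref}: not on the board")
            continue
        fp.SetPosition(pcbnew.VECTOR2I(pcbnew.FromMM(x), pcbnew.FromMM(y)))
    pcbnew.Refresh()
```

Driver footprints could be laid out the same way by calling `grid_positions` again with their own prefix and an offset origin, then passing both dictionaries to `apply_to_board`.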


Merlin Pi Camera Is A Photographic Wizard

[Mister M] was quite excited to mess around with the new high-quality Raspberry Pi camera and build a project around it. Unfortunately, lockdown forced him to rummage through the old tech he had on hand rather than hunt down a fresh, eye-catching enclosure out in the wild. We spent many hours playing with one of these Merlin toys whenever six AA batteries could be spared to feed the matrix of hungry 1970s LEDs, so we would argue that [Mister M] should explore his personal stores more often.

Before we forget — it’s cool; this one was already broken. The Merlin Pi camera’s wizardry works on two levels — [Mister M] can take still pictures and record video through the GUI he built for the touchscreen, or go retro and use the little push buttons nestled in the Merlin control panel. [Mister M] worked a Dropbox uploader into the GUI, so he doesn’t have to worry about filling up the SD card with backyard bird movies in the middle of filming them.
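
The upload-then-delete idea can be sketched in a few lines of Python. This is an illustration, not [Mister M]’s code: `offload_media` and `dropbox_uploader` are hypothetical names, the uploader uses the official Dropbox SDK’s `files_upload` call, and the sketch assumes captures land in the folder only once they are finished, so a file still being recorded is never picked up.

```python
from pathlib import Path
from typing import Callable

def offload_media(folder: str, upload: Callable[[Path], None],
                  exts: tuple[str, ...] = (".jpg", ".h264", ".mp4")) -> list[str]:
    """Upload each capture in `folder`, deleting the local copy only after
    `upload` returns without raising. Returns the names that were offloaded."""
    offloaded = []
    for path in sorted(Path(folder).iterdir()):
        if not path.is_file() or path.suffix.lower() not in exts:
            continue
        try:
            upload(path)
        except Exception as exc:  # keep the file; it is retried on the next pass
            print(f"upload failed for {path.name}: {exc}")
            continue
        path.unlink()
        offloaded.append(path.name)
    return offloaded

def dropbox_uploader(token: str, remote_dir: str = "/merlin") -> Callable[[Path], None]:
    """Uploader built on the official Dropbox SDK (pip install dropbox)."""
    import dropbox
    from dropbox.files import WriteMode

    dbx = dropbox.Dropbox(token)

    def upload(path: Path) -> None:
        with path.open("rb") as f:
            # files_upload accepts at most 150 MB per call.
            dbx.files_upload(f.read(), f"{remote_dir}/{path.name}",
                             mode=WriteMode("overwrite"))
    return upload
```

Keeping the Dropbox call behind a plain function makes the offload loop easy to test without a network connection. Because `files_upload` is limited to 150 MB per request, long videos would need the SDK’s upload-session calls instead.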

[Mister M] says he accidentally warped the Merlin’s battery cover while trying to soak away the sticker and had to replace it with a piece of acrylic. Although it’s unfortunate, we think it may have been for the better given the huge hole necessitated by the camera lens. Check out the build video after the break.

If you hadn’t heard about this beefy new camera module until now, our own [Jenny List] brought it into focus a couple of months back and more recently had a go at hacking with it herself.


Hackaday Prize Entry: Tongue Vision

Visually impaired people know something the rest of us often overlook: we actually don’t see with our eyes, but with our brains. For his Hackaday Prize entry, [Ray Lynch] is building a tongue vision system that will help blind people see through one of the human brain’s auxiliary ports: the tongue.
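
Whatever hardware sits on the tongue, a camera frame has to be reduced to a coarse grid of stimulation levels. A minimal sketch of that step using plain block averaging, assuming a hypothetical 10 x 10 electrode grid with 8 intensity levels (not details from [Ray Lynch]’s entry):

```python
def to_electrode_levels(image: list[list[int]], grid: tuple[int, int] = (10, 10),
                        levels: int = 8) -> list[list[int]]:
    """Block-average a grayscale image (rows of 0-255 values) down to a grid
    of electrode intensities in the range 0..levels-1."""
    h, w = len(image), len(image[0])
    rows, cols = grid
    if h < rows or w < cols:
        raise ValueError("image is smaller than the electrode grid")
    out = []
    for r in range(rows):
        y0, y1 = r * h // rows, (r + 1) * h // rows
        row = []
        for c in range(cols):
            x0, x1 = c * w // cols, (c + 1) * w // cols
            block = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            mean = sum(block) / len(block)
            # Map the 0-255 average onto discrete stimulation levels.
            row.append(min(levels - 1, int(mean * levels / 256)))
        out.append(row)
    return out

# A 20 x 20 test frame: dark left half, bright right half.
frame = [[0] * 10 + [255] * 10 for _ in range(20)]
print(to_electrode_levels(frame, grid=(2, 2)))  # → [[0, 7], [0, 7]]
```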