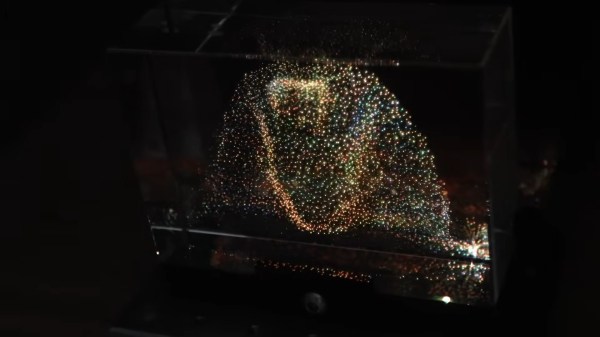

There’s something delightfully sci-fi about any kind of volumetric display. Sure, you know it’s not really a hologram, and Princess Leia isn’t about to pop out and tell you you’re her only hope, but nothing says “this is the future” like an image floating before you in 3D. [Matthew Lim] has put together an interesting one, using persistence-of-vision and linear motion.

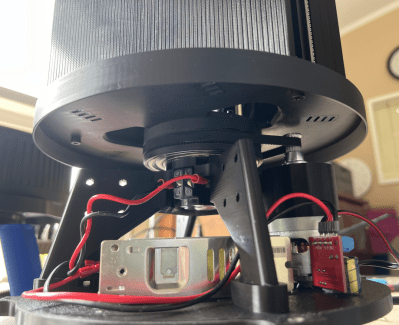

The basic concept is so simple we’re kind of surprised we don’t see it more often. Usually, POV displays use rotary motion: on a fan, a globe, a disk, or even a drone, we’ve seen all sorts of spinning LEDs tricking the brain into thinking there’s an image to be seen. [Matthew], on the other hand, is apparently the kind of guy who sticks to the straight-and-narrow, because his POV display uses linear motion.

An ESP32-equipped LED matrix module is bounced up and down by an ordinary N20 motor that’s equipped with an encoder and driven by a DRV8388. Pairing the encoder with the motor driver keeps the pixels on the LED matrix synced to the up-and-down motion, allowing for volumetric effects. This seems like a great technique, since it eliminates the slip rings you might need with rotary POV displays. It does of course introduce its own challenges, given that inertia is a thing, but we think we can agree the result speaks for itself.
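
To make the syncing idea concrete, here is a minimal sketch in Python of how an encoder reading might be mapped to one of the display's layers. This is our own illustration, not [Matthew]'s firmware: the counts-per-stroke figure and the function name are assumptions, and only the eight-layer count comes from the project.

```python
LAYERS = 8                # Z-layers in the volume (from the project)
COUNTS_PER_STROKE = 400   # hypothetical: encoder counts over one full stroke


def layer_for_count(count: int) -> int:
    """Map an encoder count within the stroke to a layer index 0..LAYERS-1."""
    # Clamp so a little overshoot at either end still lands on a valid layer.
    clamped = max(0, min(COUNTS_PER_STROKE - 1, count))
    # Integer math splits the stroke into LAYERS equal bands.
    return clamped * LAYERS // COUNTS_PER_STROKE
```

On each encoder update, the firmware would look up the frame slice for `layer_for_count(count)` and push it to the matrix, so what is shown depends on where the matrix actually is rather than on elapsed time.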

One interesting design choice is that the display is moved by a simple rack-and-pinion, requiring the motor to reverse 16 times per second. We wonder if a crank wouldn’t be easier on the hardware. Software too, since [Matthew] has to calibrate for backlash in the gear train. In any case, the stroke length of 20 mm creates a cubical display since the matrix is itself 20 mm x 20 mm. (That’s just over 3/4″, or about twice the width of a french fry.) In that 20 mm, he can fit eight layers, so not a great resolution on the Z-axis but enough for us to call it “volumetric” for sure. A faster stroke is possible, but it both reduces the height of the display and increases wear on the components, which are mostly 3D printed, after all.
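
For a feel of the numbers, here is a rough back-of-the-envelope sketch in Python. The stroke, layer count, and reversal rate come from the article; the constant-speed simplification and the backlash model (including the `backlash_counts` value) are our assumptions, not measurements from the build.

```python
STROKE_MM = 20.0            # stroke length (from the article)
LAYERS = 8                  # Z-layers (from the article)
REVERSALS_PER_SECOND = 16   # direction changes per second (from the article)

# Each reversal ends a one-way stroke, so one stroke lasts 1/16 s.
layer_pitch_mm = STROKE_MM / LAYERS              # 2.5 mm between layers
stroke_time_s = 1 / REVERSALS_PER_SECOND         # 0.0625 s per one-way stroke
layer_time_s = stroke_time_s / LAYERS            # ~7.8 ms per layer, if speed were constant
mean_speed_mm_s = STROKE_MM / stroke_time_s      # 320 mm/s average


def corrected_count(raw_count: int, moving_up: bool, backlash_counts: int = 6) -> int:
    """Estimate carriage position from the motor encoder (hypothetical model).

    Assumes the encoder was zeroed while moving up. After a reversal the
    motor turns through the slack before the rack follows, so on the way
    down the carriage sits higher than the raw count suggests.
    """
    return raw_count if moving_up else raw_count + backlash_counts
```

In practice the matrix has to decelerate and turn around at each end, so the real time spent in each layer will not be uniform, which is one more reason to key the layers off the encoder rather than a timer.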

It’s certainly an interesting technique, and the speechless (all subtitles) video is worth watching, at least for the first 10 seconds, so you can see this thing in action.

Thanks to [carl] for the tip. If a cool project persists in your vision, do please let us know.