By now we’ve all seen the cheap headsets that essentially stick a smartphone a few inches away from your face to function as a low-cost alternative to devices like Oculus Rift. Available for as little as a few dollars, these gadgets are hard to beat for experimenting with VR on a budget. But what if you’re more interested in working with augmented reality, where rendered images are superimposed onto your real-world view rather than replacing it?

As it turns out, there are now cheap headsets to do that with your phone as well. [kvtoet] picked one of these gadgets up for $30 USD on AliExpress, and used it as the base for a more capable augmented reality experience than the headset alone can deliver. The project is in the early stages, but so far the combination of this simple headset and some hardware liberated from inexpensive Chinese smartphones looks to hold considerable promise for delivering a sub-$100 USD development platform for anyone looking to jump into this fascinating field.

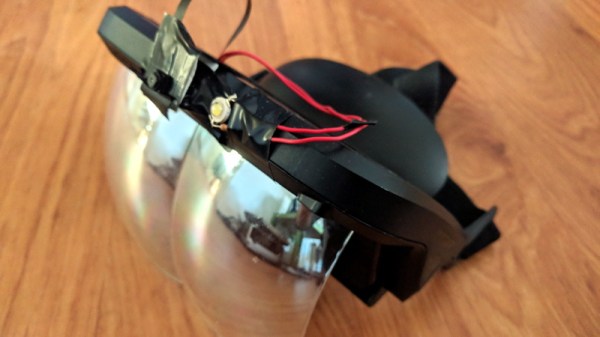

On their own, these cheap augmented reality headsets simply show a reflection of your smartphone’s screen on the inside of the lenses. With specially designed applications, this effect can be used to give the wearer the impression that objects shown on the phone’s screen are actually in their field of vision. It’s a neat effect to be sure, but it doesn’t hold much in the way of practical applications. To turn this into a useful system, the phone needs to be able to see what the wearer is seeing.

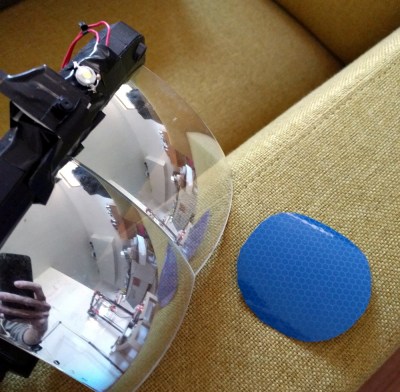

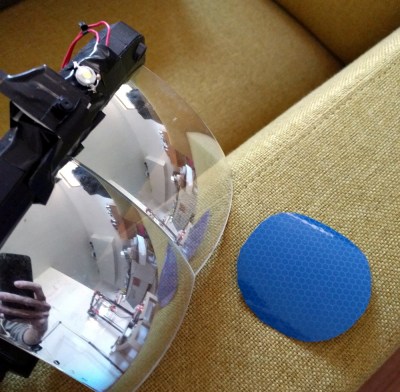

To that end, [kvtoet] relocated a VKWorld S8 smartphone’s camera module onto the front of the headset. Beyond its relatively low cost, this model of phone was selected because it features a long camera ribbon cable. With the camera on the outside of the headset, [kvtoet] wrote an Android application that periodically flashes a bright LED and looks for reflections in the camera’s feed. These reflections are then used to locate objects and markers in the real world.
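
To get a feel for how flash-and-look detection can work, here is a minimal Python sketch. It is not [kvtoet]’s Android code, just one plausible approach: compare a frame captured with the LED on against one captured with it off, and take the weighted centroid of whatever got brighter. The function name `find_reflection`, the `threshold` value, and the representation of frames as lists of grayscale rows are all assumptions made for illustration.

```python
def find_reflection(frame_on, frame_off, threshold=60):
    """Locate the spot that brightens when the LED is lit.

    Both frames are equal-sized grayscale images given as lists of rows
    (values 0-255). Returns the (row, col) centroid of the pixels whose
    brightness rose by more than `threshold`, weighted by how much they
    rose, or None if nothing changed enough.

    This is a hypothetical illustration, not the project's actual code.
    """
    total = 0
    sum_r = 0.0
    sum_c = 0.0
    for r, (row_on, row_off) in enumerate(zip(frame_on, frame_off)):
        for c, (p_on, p_off) in enumerate(zip(row_on, row_off)):
            diff = p_on - p_off
            # Ambient lights appear in both frames and cancel out here;
            # only light returned from the LED survives the subtraction.
            if diff > threshold:
                total += diff
                sum_r += r * diff
                sum_c += c * diff
    if total == 0:
        return None
    return (sum_r / total, sum_c / total)


# Tiny synthetic example: a 5x5 scene with a lamp at (0, 0) that is
# bright in both frames, and a retroreflector at (2, 3) that only
# lights up when the LED is on.
frame_off = [[10] * 5 for _ in range(5)]
frame_off[0][0] = 250
frame_on = [row[:] for row in frame_off]
frame_on[2][3] = 200

print(find_reflection(frame_on, frame_off))
```

Subtracting the LED-off frame is what lets a scheme like this ignore lamps and windows, and a retroreflector helps because it sends most of the LED’s light straight back toward the camera sitting next to it.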

In the video after the break, [kvtoet] demonstrates how this technique is put to use. The phone is able to track a retroreflector lying on the couch quickly and accurately enough that it can be used to adjust the rendering of a virtual object in real time. As the headset is moved around, it gives the impression that the wearer is actually viewing a real object from different angles and distances. With such a simplistic system the effect isn’t perfect, but it’s exciting to think of the possibilities now that this sort of technology is falling into the tinkerer’s budget.
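
The write-up doesn’t say how the tracked reflection is turned into a rendering adjustment, but a simple pinhole camera model gives a rough idea of how distance could be recovered. The sketch below assumes a marker of known physical width and a camera focal length expressed in pixels; `estimate_distance`, `render_scale`, and all the numbers are hypothetical values chosen for illustration.

```python
def estimate_distance(focal_px, marker_width_m, blob_width_px):
    """Pinhole-model distance to a marker of known width.

    focal_px: camera focal length in pixels (assumed known from calibration)
    marker_width_m: real width of the retroreflector in meters
    blob_width_px: apparent width of the reflection in the image, in pixels
    """
    if blob_width_px <= 0:
        raise ValueError("blob width must be positive")
    return focal_px * marker_width_m / blob_width_px


def render_scale(distance_m, reference_distance_m=1.0):
    """Scale factor for a virtual object: apparent size falls off as 1/distance."""
    return reference_distance_m / distance_m


# Hypothetical numbers: an 800 px focal length and a 5 cm wide marker
# that appears 40 px wide in the camera feed.
distance = estimate_distance(800, 0.05, 40)
scale = render_scale(distance)
print(distance, scale)
```

Halve the blob’s apparent width and the estimated distance doubles, so the virtual object would be drawn at half the size, which matches the impression of viewing a real object from farther away.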

If you don’t want to go the DIY route, Leap Motion has been teasing an open source augmented reality headset which has us quite excited. We’re still waiting on the hardware, but that hasn’t stopped hackers from coming up with some fascinating AR applications in the meantime.
