Do you remember ReBoot? If you were into early CGI, the name probably rings a bell: when it premiered in 1994, it was the first fully computer-animated show on TV. Some time ago, a group found a pile of tapes from Mainframe Studios in Canada, the people behind ReBoot, and the computer historians amongst us were very excited… until the tapes turned out to be digital broadcast masters. Exciting for fans of lost media, sure, but not quite the LTO backups of Mainframe’s SGI workstations some of us had hoped would turn up. Still, [Mark Westhaver], [Bryan Baker], and others at the “ReBoot Rewind” project have made great strides, to the point that their latest update video declares “We Saved ReBoot.”

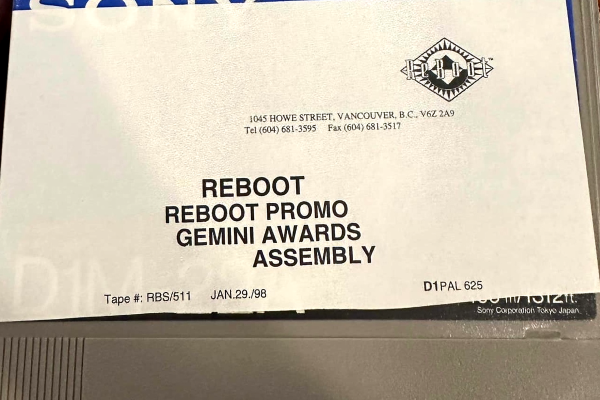

What does it take to revive a 30-year-old television show? Well, as stated, they started with the tapes. These aren’t ordinary VHS tapes: they are Sony D1 cassettes, a format also known by the moniker “4:2:2” that most people who didn’t work in the TV or film industry will never have seen, and the decks that play them are as rare as hen’s teeth these days. Just getting a working deck, and keeping it working, was one of the biggest challenges [Mark] and ReBoot Rewind faced. In the end, it took three somewhat-dodgy machines long past their service lives and a miraculously located spare read/write head to get a stable scanning rate.
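For anyone who hasn’t met the “4:2:2” label before: it describes chroma subsampling, where each pair of horizontally adjacent pixels gets two luma (Y) samples but shares a single blue-difference (Cb) and a single red-difference (Cr) sample. The short Python sketch that follows is a hypothetical helper, not part of the ReBoot Rewind workflow. It assumes 8-bit samples interleaved in the Cb, Y, Cr, Y order used by Rec. 601 component digital video, and splits one scan line into its three planes.

```python
def unpack_422_line(line: bytes) -> tuple[list[int], list[int], list[int]]:
    """Split one 8-bit 4:2:2 scan line stored as Cb, Y0, Cr, Y1, ... into Y, Cb, Cr.

    Hypothetical helper for illustration; assumes Rec. 601 multiplex order.
    """
    if len(line) % 4 != 0:
        # Every pixel pair occupies exactly four bytes: Cb, Y0, Cr, Y1.
        raise ValueError("4:2:2 lines must come in 4-byte groups")
    y = list(line[1::2])   # luma sits at every odd offset: one sample per pixel
    cb = list(line[0::4])  # one blue-difference sample per pixel pair
    cr = list(line[2::4])  # one red-difference sample per pixel pair
    return y, cb, cr


# A 720-pixel line is 1440 bytes: 720 luma samples plus 360 of each chroma.
y, cb, cr = unpack_422_line(bytes(1440))
print(len(y), len(cb), len(cr))  # 720 360 360
```

Halving the horizontal chroma resolution is what lets 4:2:2 average two bytes per pixel instead of three, while keeping full-resolution luma, which carries most of the detail the eye notices.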

The uncompressed digital output of these tapes isn’t something you can just burn to a DVD, either. The 720 × 576 video stream is captured raw, but it still needs minor editing tweaks, and tape errors that have crept in over the years have to be dealt with before the video and audio are encoded into a modern format. The video briefly glosses over [Bryan Baker]’s workflow for doing just that. At least they aren’t stuck with the terrible USB video capture dongles that VHS lovers have to deal with. Even if you don’t care about ReBoot, it isn’t the only show that was archived on D1 tapes, so that workflow might be of interest to media fans.
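To put “uncompressed” in perspective, here is a back-of-the-envelope calculation as a Python sketch. Only the 720 × 576 frame size comes from the video; everything else is an assumption: 8-bit 4:2:2 samples (two bytes per pixel on average), 25 frames per second (the PAL rate that pairs with 576 lines), active picture only with no blanking or audio, and a roughly 22-minute episode.

```python
# Assumptions (not figures from the ReBoot Rewind video):
# 8-bit 4:2:2 sampling, 25 frames/s, active picture only, no audio.
WIDTH, HEIGHT = 720, 576
FPS = 25
BYTES_PER_PIXEL = 2  # 4:2:2 averages one luma byte plus one chroma byte per pixel


def frame_bytes(width: int = WIDTH, height: int = HEIGHT) -> int:
    """Size of one uncompressed frame in bytes."""
    return width * height * BYTES_PER_PIXEL


def data_rate_bytes_per_s(fps: int = FPS) -> int:
    """Sustained data rate of the raw stream in bytes per second."""
    return frame_bytes() * fps


def episode_gb(minutes: float) -> float:
    """Storage for an episode of the given length, in decimal gigabytes."""
    return data_rate_bytes_per_s() * minutes * 60 / 1e9


print(f"{frame_bytes():,} bytes per frame")                # 829,440 bytes per frame
print(f"{data_rate_bytes_per_s() * 8 / 1e6:.1f} Mbit/s")   # 165.9 Mbit/s
print(f"{episode_gb(22):.1f} GB per 22-minute episode")    # 27.4 GB per 22-minute episode
```

Under those assumptions a single episode needs roughly 27 GB, nearly six times the 4.7 GB of a single-layer DVD, before any audio is counted.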

We covered ReBoot Rewind when they were first searching for tape decks, so it’s great to have an update. Alas, the rights holders haven’t yet decided exactly how they’re going to release this fine footage, so if, like this author, you have fond memories of ReBoot, you may have to wait a bit longer for a reWatch.

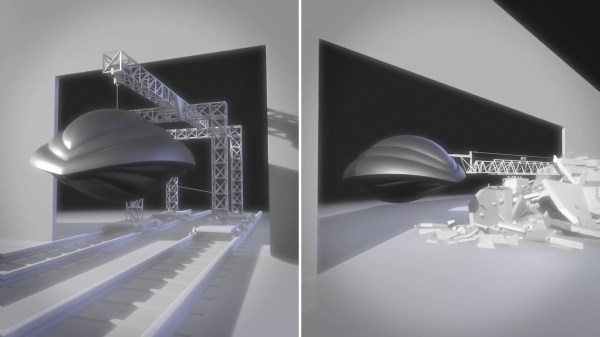

![[Mark] shows off footage from a D1 master on the repaired deck](https://hackaday.com/wp-content/uploads/2025/12/Reboot_saved_feat.png?w=600&h=450)
