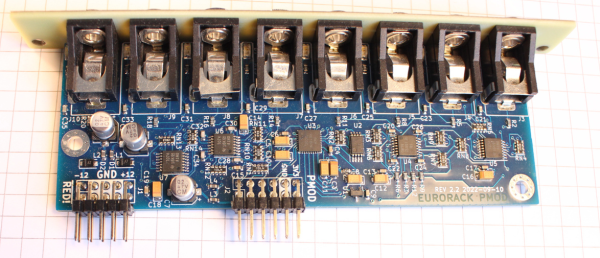

[Sebastian Holzapfel] has designed an audio frontend (eurorack-pmod) for FPGA-based audio applications that fits into a standard Eurorack enclosure. The project, released under the CERN Open Hardware Licence v2, is laid out in KiCad around the AK4619VN four-channel audio codec by Asahi Kasei Microdevices. (And guess what folks, there’s plenty of those in stock!)

Continue reading “An Open Hardware Eurorack Compatible Audio FPGA Front End”

Arduino Does SDI Video With FPGA Help

If you are running video around your home theater, you probably use HDMI. If you are running it in a professional studio, however, you are probably using SDI, Serial Digital Interface. [Chris Brown] looks at SDI and shows a cheap SDI signal generator for an Arduino.

On the face of it, SDI isn’t that hard. In fact, [Chris] calls it “dead simple.” The problem is the bit rate, which can be as high as 1.485 Gbps for the HD-SDI standard. Even for a super fast processor, this is a bit much, so [Chris] turned to the Arduino MKR Vidor 4000. Why? Because it has an FPGA onboard. Alas, the FPGA can’t do more than about 200 MHz, but that’s fast enough to drive an external Semtech GS2962 serializer, which is made to drive SDI signals.
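To see why a roughly 200 MHz FPGA is enough, it helps to work out the word clock on the parallel side of the serializer. The sketch below is only arithmetic: the 1.485 Gbps figure comes from the text, while the 10-bit and 20-bit bus widths are assumptions about how such a serializer is typically fed, and `parallel_clock_hz` is a made-up helper name.

```python
# HD-SDI serial line rate quoted in the article, in bits per second.
HD_SDI_LINE_RATE_BPS = 1_485_000_000

# Rough FPGA speed ceiling quoted in the article, in hertz.
FPGA_LIMIT_HZ = 200_000_000


def parallel_clock_hz(line_rate_bps: float, bus_width_bits: int) -> float:
    """Word clock needed to feed a serializer over a parallel bus."""
    return line_rate_bps / bus_width_bits


# Assumed bus widths; check the serializer datasheet for the real options.
for width in (10, 20):
    clock = parallel_clock_hz(HD_SDI_LINE_RATE_BPS, width)
    verdict = "within" if clock <= FPGA_LIMIT_HZ else "beyond"
    print(f"{width}-bit bus: {clock / 1e6:.2f} MHz ({verdict} the FPGA limit)")
```

Either way the FPGA only has to produce words at tens of megahertz to about 150 MHz, and the external chip handles the gigabit-rate serial stream.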

The resulting project contains the Arduino, the serializer, a custom PCB, and both FPGA and microcontroller code. While the total cost of the project was a little under $200, that’s still better than the $350 to $2000 for a commercial SDI signal generator.

If you want to play along, the files are out on GitHub. We used the Vidor back in 2018 when it first came out. If you need a quick start on FPGAs, there’s always our boot camp.

Fixing An HP 54542C With An FPGA And VGA Display

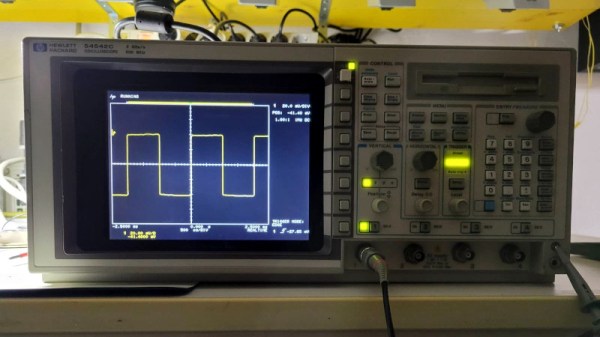

Although the HP 54542C oscilloscope and its siblings are getting on in years, they’re still very useful today. Unfortunately, as some of the first oscilloscopes to switch from a CRT display to an LCD, they are starting to suffer from display degradation. This has led to otherwise perfectly functional examples being discarded or sold for cheap, when all they need is an LCD swap. This is what happened to [Alexander Huemer] with an eBay-bought 54542C.

Although this was supposed to be a fully working unit, upon receiving it, the display just showed a bright white instead of the more oscilloscope-like picture. A short while later [Alexander] was left with a refund, an apology from the seller and an HP 54542C scope with a very dead LCD. This was when he stumbled over a similar repair by [Adil Malik], right here on Hackaday. The fix? Replace the LCD with an FPGA and VGA-input capable LCD.

While this may seem counterintuitive, the problem with LCD replacements is the lack of standardization. Searching for an 8″, 640×480, 60 Hz color LCD with an interface compatible with the one found in this HP scope usually turns up salvaged LCDs from HP scopes, which as [Alexander] discovered can run up to $350 and beyond for second-hand ones. But it turns out that similar 8″ LCDs are found everywhere for use as portable displays; all they need is a VGA input.

Taking [Adil]’s project as the inspiration, [Alexander] used an UPduino v3.1 with an iCE40UP5K FPGA as the core LCD-to-VGA translation component, creating a custom PCB for the voltage level translation and connectors. One cool aspect of the whole system is that it is fully reversible, with all of the original wiring on the scope and new LCD side left intact. One niggle was that the scope’s image was upside-down, but this was fixed by mounting the new LCD upside-down as well.
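For a sense of what the FPGA has to generate on the VGA side, here is a small sketch of the timing arithmetic for 640×480 at about 60 Hz. The porch, sync, and pixel clock numbers are the widely published industry-standard VGA values, used here as an assumption; the write-up does not say which exact timings [Alexander]’s design uses, and the `Axis` class is purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Axis:
    """One scan direction: visible area plus blanking intervals."""
    visible: int
    front_porch: int
    sync: int
    back_porch: int

    @property
    def total(self) -> int:
        return self.visible + self.front_porch + self.sync + self.back_porch


# Assumed: standard 640x480 VGA timing, not taken from the project itself.
HORIZONTAL = Axis(visible=640, front_porch=16, sync=96, back_porch=48)  # pixels
VERTICAL = Axis(visible=480, front_porch=10, sync=2, back_porch=33)     # lines
PIXEL_CLOCK_HZ = 25_175_000


def refresh_rate_hz(h: Axis, v: Axis, pixel_clock_hz: int) -> float:
    """Frames per second for a given pixel clock and total frame size."""
    return pixel_clock_hz / (h.total * v.total)


print(f"frame: {HORIZONTAL.total} x {VERTICAL.total} clocks")
print(f"refresh: {refresh_rate_hz(HORIZONTAL, VERTICAL, PIXEL_CLOCK_HZ):.2f} Hz")
```

A pixel clock of about 25 MHz is modest, which is consistent with a small FPGA being able to handle the job.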

After swapping the original cooling fan with a better one, this old HP 54542C is now [Alexander]’s daily scope.

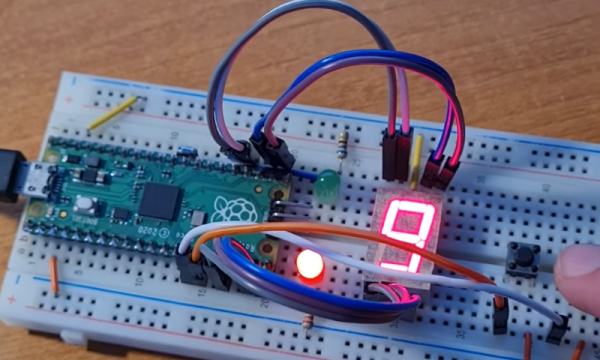

Want To Play With FPGAs? Use Your Pico!

Ever want to play with an FPGA, but don’t have the hardware? Now, if you have one of those ever-abundant Pi Picos, you can start playing with Verilog without getting an FPGA board. The FakePGA project by [tvlad1234], based on the Verilator toolkit, provides you with a way to compile Verilog into C++ for the RP2040. FakePGA even maps RP2040 GPIOs to the digital pins of the simulated design, making it a significant step up from simulating FPGA code on a computer.

[tvlad1234] provides instructions for setting this up on Linux; Windows, though untested, could theoretically run it through WSL. The maximum clock speed is 5 kHz, which is not much but way better than not having any hardware to test with. Everything you’d want is in the GitHub repo: setup instructions, Verilog code requirements, and a few configuration caveats to keep in mind.
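The general idea of running a clocked design in software can be sketched in a few lines. This is not FakePGA’s code and does not use Verilator; it is a hand-written Python stand-in for a “blinky” design, stepped one clock edge at a time, with the output copied to a pin callback after every step. `Blinky`, `run`, and `write_pin` are all hypothetical names, and only the 5 kHz figure comes from the text.

```python
from typing import Callable, List


class Blinky:
    """Stand-in for a tiny clocked design: a divider that toggles an LED."""

    def __init__(self, divider: int) -> None:
        self.divider = divider
        self.counter = 0
        self.led = 0

    def tick(self) -> None:
        """Advance the design by one rising clock edge."""
        self.counter += 1
        if self.counter == self.divider:
            self.counter = 0
            self.led ^= 1


def run(design: Blinky, cycles: int, write_pin: Callable[[int], None]) -> None:
    """Step the design and mirror its output onto a (simulated) GPIO pin."""
    for _ in range(cycles):
        design.tick()
        write_pin(design.led)


SIM_CLOCK_HZ = 5_000  # maximum simulated clock quoted in the article

# One simulated second; a list stands in for the physical pin.
trace: List[int] = []
run(Blinky(divider=SIM_CLOCK_HZ // 2), cycles=SIM_CLOCK_HZ, write_pin=trace.append)
print(f"LED was high for {sum(trace)} of {len(trace)} cycles")
```

On real hardware the callback would drive a GPIO instead of appending to a list, and the loop rate is what caps the achievable clock speed.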

We cover a lot of projects where FPGAs are used to emulate hardware of various kinds, from ISA cards to an entire Game Boy. CPU emulation on FPGAs is basically the norm; it’s just something easy to do with the kind of power that an FPGA provides. Emulation in the opposite direction is unusual, though we’ve seen FPGAs being emulated with FPGAs, so perhaps it was inevitable after all. Of course, if you have neither a Pico nor an FPGA, there are always browser-based emulators.

Emulate Any ISA Card With A Raspberry Pi And An FPGA

One of the reasons the IBM PC platform became the dominant standard for desktop PCs back in the mid-1980s was its open hardware design, based around what would later be called the ISA bus. Any manufacturer could design plug-in cards or even entire computers that were hardware and software compatible with the IBM PC. Although ISA has been obsolete for most purposes since the late 1990s, some ISA cards such as high-quality sound cards have become so popular among retrocomputing enthusiasts that they now fetch hundreds of dollars on eBay.

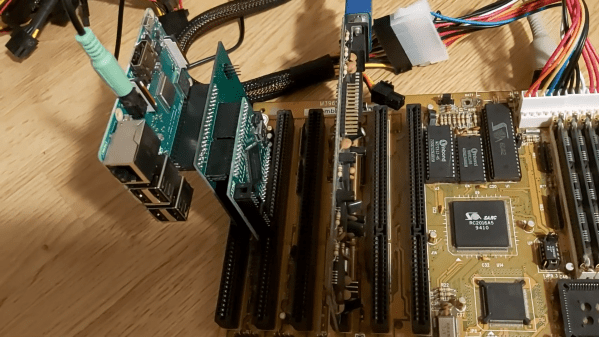

So what can you do if your favorite ISA card is not easily available? One option is to head over to [eigenco]’s GitHub page and check out his FrankenPiFPGA project. It contains a design for a simple ISA plug-in card that hooks up to a Cyclone IV FPGA and a Raspberry Pi. The FPGA connects to the ISA bus and implements its bus architecture, while the Pi communicates with the FPGA through its GPIO ports and emulates any card you want in software. [eigenco]’s current repository contains code for several sound cards as well as a hard drive and a serial mouse. The Pi’s multi-core architecture allows it to run several of these tasks at once while still keeping up the reasonably high data rate required by the ISA bus.
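The split described above, with the FPGA handling bus cycles and software deciding what each I/O port does, can be illustrated with a simple port dispatch table. This is a sketch under assumptions, not [eigenco]’s code: `IoBus` and `AdLibStub` are hypothetical names, the stub only records register writes, and returning 0xFF for unclaimed ports is an assumption about typical ISA behavior. The 0x388/0x389 port pair is the AdLib card’s usual address and data ports.

```python
from typing import Dict, Iterable, Protocol


class IoDevice(Protocol):
    def read(self, port: int) -> int: ...
    def write(self, port: int, value: int) -> None: ...


class AdLibStub:
    """Hypothetical placeholder card: latches a register index, stores data."""
    ADDRESS_PORT = 0x388
    DATA_PORT = 0x389

    def __init__(self) -> None:
        self.selected = 0
        self.registers: Dict[int, int] = {}

    def write(self, port: int, value: int) -> None:
        if port == self.ADDRESS_PORT:
            self.selected = value
        elif port == self.DATA_PORT:
            self.registers[self.selected] = value

    def read(self, port: int) -> int:
        return 0x00  # a real card would return timer status bits here


class IoBus:
    """Routes I/O cycles reported by the bus interface to emulated cards."""

    def __init__(self) -> None:
        self._devices: Dict[int, IoDevice] = {}

    def attach(self, device: IoDevice, ports: Iterable[int]) -> None:
        for port in ports:
            self._devices[port] = device

    def io_write(self, port: int, value: int) -> None:
        device = self._devices.get(port)
        if device is not None:
            device.write(port, value & 0xFF)

    def io_read(self, port: int) -> int:
        device = self._devices.get(port)
        # Assumption: ports nobody claims read back as 0xFF.
        return device.read(port) & 0xFF if device is not None else 0xFF


bus = IoBus()
card = AdLibStub()
bus.attach(card, (AdLibStub.ADDRESS_PORT, AdLibStub.DATA_PORT))
bus.io_write(0x388, 0x20)  # select register 0x20
bus.io_write(0x389, 0x01)  # write a value to it
print(card.registers)
```

Adding another emulated card is then a matter of attaching a second device object to its own port range.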

In the videos embedded below you can see [eigenco] demonstrating the system on a 386 motherboard that only has a VGA card to hook up a monitor. By emulating a hard drive and sound card on the Pi he is able to run a variety of classic DOS games with full sound effects and music. The sound cards currently supported include AdLib, 8-bit SoundBlaster, Gravis Ultrasound and Roland MT-32, but any card that’s documented well enough could be emulated.

This approach could also come in handy to replace other unobtanium hardware, like rare CD-ROM interfaces. Of course, you could take the concept to its logical extreme and simply implement an entire PC in an FPGA.

An Open Toolchain For Sipeed Tang Nano FPGAs

[Sevan Janiyan] shares their research on putting an open FPGA toolchain together. Specifically, this is an open toolchain for the Sipeed Tang Nano FPGAs, which are relatively cheap offerings by Sipeed from China. The official toolchain is proprietary and requires you to apply for a license that has to be renewed every year. There’s a limited educational version you can use more freely, but of course, that’s not necessarily sufficient for comfortable work.

This toolchain relies on the apicula project, an effort to reverse-engineer, reimplement and document the Gowin FPGA bitstream format, as well as the Gowin integration for nextpnr (an open tool for FPGA place-and-route). With a combination of yosys, apicula, nextpnr and openFPGALoader, [Sevan] put together a set of commands you can use to build gateware for your Tang Nano FPGAs, without any proprietary limitations blocking your way. They show a basic blinkie demo, and also a demo that successfully operates a parallel LCD connected to the board.
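To make the four stages concrete, here is a sketch that assembles one command line per tool: synthesis, place-and-route, bitstream packing, and upload. These are not [Sevan]’s exact commands. The tool names come from the text; the flags follow the tools’ commonly documented usage, and the device, family, board, and file names are placeholder assumptions that depend on your board and tool versions, so check each tool’s help output before running anything. `build_commands` is a hypothetical helper that only builds the argument lists.

```python
from typing import List


def build_commands(top: str, device: str, family: str,
                   board: str, constraints: str) -> List[List[str]]:
    """Return one argv list per stage of an assumed yosys-to-upload flow."""
    return [
        # 1. Synthesis: Verilog to a JSON netlist for Gowin parts.
        ["yosys", "-p", f"read_verilog {top}.v; synth_gowin -json {top}.json"],
        # 2. Place and route (newer nextpnr releases may name this binary differently).
        ["nextpnr-gowin", "--json", f"{top}.json", "--write", f"{top}_pnr.json",
         "--device", device, "--cst", constraints],
        # 3. Pack the routed design into a bitstream (gowin_pack ships with apicula).
        ["gowin_pack", "-d", family, "-o", f"{top}.fs", f"{top}_pnr.json"],
        # 4. Upload the bitstream to the board.
        ["openFPGALoader", "-b", board, f"{top}.fs"],
    ]


# All values below are placeholders for illustration only.
commands = build_commands(
    top="blinky",
    device="GW1N-LV1QN48C6/I5",
    family="GW1N-1",
    board="tangnano",
    constraints="tangnano.cst",
)
for argv in commands:
    print(" ".join(argv))
```

Each list could be passed to `subprocess.run(argv, check=True)` once the values have been verified for your setup.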

The availability of open toolchains for FPGAs has always been somewhat of a sore point. Wondering about open FPGA toolchains? This Supercon 2019 talk by Tim [Mithro] Ansell will get you up to speed!

We thank [feinfinger (sneezing)] for sharing this with us!

Hackaday Podcast 168: Math Flattens Spheres, FPGAs Emulate Arcades, And We Can’t Shake Polaroid Pictures

Join Hackaday Editor-in-Chief Elliot Williams and Staff Writer Dan Maloney as they review the top hacks for the week. It was a real retro-fest this time, with a C64 built from (mostly) new parts, an Altoids Altair, and learning FPGAs via classic video games. We also looked at LCD sniffing to capture data from old devices, reimagined the resistor color code, revisited the magic of Polaroid instant cameras, and took a trip down television’s memory lane. But it wasn’t all old stuff — there’s flat-packing a sphere with math, spraying a fine finish on 3D printed parts, a DRM-free label printer, and a look at what’s inside that smartphone in your pocket — including some really weird optics.

Check out the links below if you want to follow along, and as always, tell us what you think about this episode in the comments below!