Some people learning the noble art of electronics find the jump from simpler tools like Fritzing to more complex ones, such as KiCad, a little daunting, especially since it means learning at least two tools. Fritzing is great for visualising your breadboard layout, but what if you want to start from a proper schematic, make a prototype on a breadboard and then design a custom PCB? Well, with the Kicad-breadboard plugin for (you guessed it!) KiCad, you can now do all of this in the same tool.

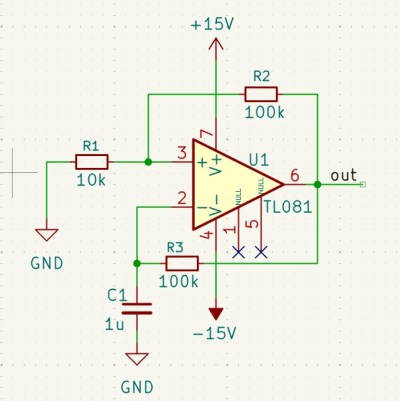

Originally designed to support EE students at the University of Antwerp, the tool presents you with a virtual breadboard of configurable size and style, along with a list of components and tools that can be placed. A few clicks are all it takes to place parts on the virtual breadboard. Adding wires is the next logical step, making the connections that run in the horizontal dimension. Finally, assigning power supplies and probe connections completes the process. It’s a simple enough tool for drawing stuff, but drawing a layout is no use if you can’t verify its correctness. This is where the plugin shines: it can run an electrical rules check (ERC) between the schematic and the breadboard and flag up what you missed. Add to this that you can also perform an ERC at the schematic level, before even thinking about layout, and it’s pretty hard to make an error. Once you have confidence in the layout, you can transfer it directly to a real breadboard, or even a veroboard for more permanence. This will definitely save time correcting errors and help keep the magic smoke safely contained within those mysterious black rectangles.
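
To give a feel for what a schematic-versus-breadboard check involves, here is a minimal Python sketch. It is not the plugin's actual code, and every name in it (`strip_of`, `breadboard_nets`, `compare`, the `"R1.1"` pin labels, the hole coordinates) is a hypothetical choice for illustration. It assumes a common breadboard style where holes `a` to `e` of a row share one strip and `f` to `j` share another, and it treats the power supply as just another two-pin part.

```python
from collections import defaultdict


class DisjointSet:
    """Tiny union-find used to merge electrically connected items."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            # Path halving keeps the trees shallow.
            self.parent[x] = self.parent[self.parent[x]]
            x = self.parent[x]
        return x

    def union(self, a, b):
        self.parent[self.find(a)] = self.find(b)


def strip_of(hole):
    """Map a hole (row, column letter) to the strip it sits on.

    Assumption: columns a-e of a row are one strip, f-j another.
    """
    row, col = hole
    return ("strip", row, "left" if col in "abcde" else "right")


def breadboard_nets(placements, wires):
    """Group component pins into nets implied by strips and jumper wires."""
    ds = DisjointSet()
    for pin, hole in placements.items():
        ds.union(pin, strip_of(hole))
    for a, b in wires:
        ds.union(strip_of(a), strip_of(b))
    groups = defaultdict(set)
    for pin in placements:
        groups[ds.find(pin)].add(pin)
    # A pin connected to nothing else is not a net worth comparing.
    return {frozenset(g) for g in groups.values() if len(g) > 1}


def compare(schematic_nets, placements, wires):
    """Return (missing, extra): nets only in the schematic, nets only on the board."""
    expected = {frozenset(p) for p in schematic_nets.values() if len(p) > 1}
    actual = breadboard_nets(placements, wires)
    missing = sorted(sorted(n) for n in expected - actual)
    extra = sorted(sorted(n) for n in actual - expected)
    return missing, extra


# Hypothetical LED circuit: supply V1, resistor R1, LED D1.
schematic = {
    "VCC": {"V1.+", "R1.1"},
    "MID": {"R1.2", "D1.A"},
    "GND": {"D1.K", "V1.-"},
}
placements = {
    "V1.+": (1, "e"), "R1.1": (1, "a"),
    "R1.2": (5, "a"), "D1.A": (5, "c"),
    "D1.K": (6, "c"), "V1.-": (10, "a"),
}

print(compare(schematic, placements, wires=[]))
print(compare(schematic, placements, wires=[((6, "e"), (10, "b"))]))
```

With no jumper wires, the LED's cathode never reaches the supply's negative terminal, so the first call reports the ground net as missing; adding a single wire from row 6 to row 10 makes the two views agree. A real check would also have to handle power rails, multi-row parts and shorts between nets, which this sketch only catches as a mismatched pair of missing and extra nets.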