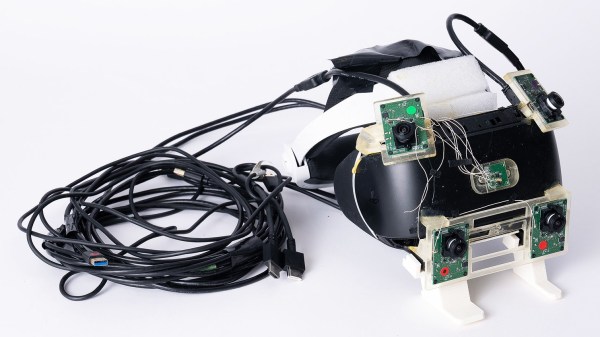

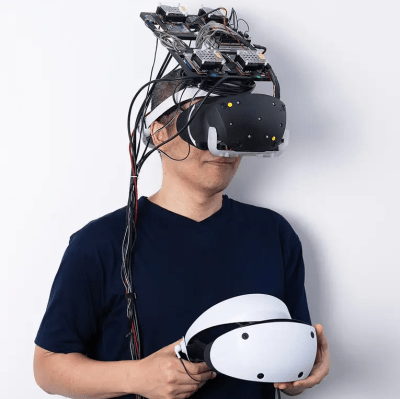

Light fields are a subtle but critical element in making 3D video look “real”, and they have little to do with either resolution or field of view. Meta (formerly Facebook) has shown off a prototype VR headset that delivers light field passthrough video to the user for a more realistic view of their surroundings, and it uses a nifty lens and aperture combination to make it happen.

As we humans move our eyes (or our heads, for that matter) to take in a scene, we see things from slightly different perspectives in the process. These differences are important cues that our brains use to interpret the world. But when a camera captures a scene, it records it as a single flat plane, which differs in a number of important ways from how our eyes work. A big reason stereoscopic 3D video doesn’t look particularly real is that the information it presents lacks these subtleties.
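To make the “slightly different perspectives” idea concrete, here is a minimal sketch of motion parallax under a simple pinhole-camera assumption (an illustration, not Meta’s method). When the viewpoint slides sideways by a baseline b, a point at depth Z shifts on the image plane by f·b/Z, so near objects move much more than distant ones. A single flat capture records only one of these viewpoints. The function names, the unit focal length, and the 65 mm baseline (roughly the separation of human eyes) are all illustrative assumptions.

```python
def pinhole_project(x: float, z: float, f: float = 1.0) -> float:
    """Project a point at lateral offset x and depth z onto an image plane at focal length f."""
    return f * x / z


def parallax_shift(z: float, baseline: float, f: float = 1.0) -> float:
    """Image-plane shift of a point at depth z when the viewpoint moves sideways by `baseline`.

    Moving the camera right by `baseline` is equivalent to moving the point left by it,
    so the shift works out to f * baseline / z (hypothetical helper for illustration).
    """
    return pinhole_project(0.0, z, f) - pinhole_project(-baseline, z, f)


if __name__ == "__main__":
    # Assumed 65 mm eye-like baseline; depths in metres, shift in focal-length units.
    for depth in (0.5, 1.0, 4.0):
        print(f"depth {depth} m -> shift {parallax_shift(depth, baseline=0.065):.4f}")
```

The inverse dependence on depth is exactly the cue that a flat, single-viewpoint recording throws away.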
