Brilliant Labs have been making near-eye display platforms for some time now, and they are one of the few manufacturers making a point of focusing on an open and hacker-friendly approach to their devices. Halo is their newest smart glasses platform, currently in pre-order (299 USD) and boasting some nifty features, including a completely new approach to the display.

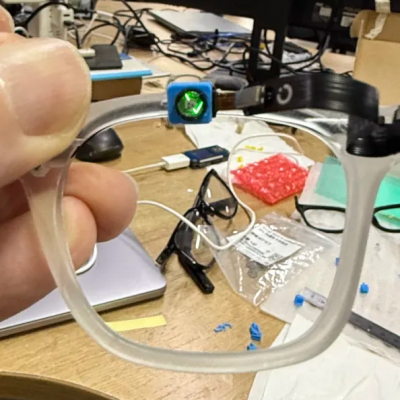

Halo is an evolution of the developer-friendly smart glasses concept, intended to make experimentation (or modification) as accessible as possible. Compared to previous hardware, it adds some sensors and rethinks the display element entirely.

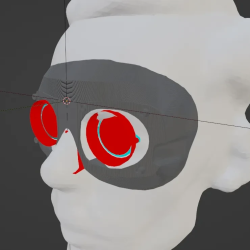

Whereas previous devices used a microdisplay and beam splitter embedded into a thick lens, Halo has a tiny display module set into the eyeglasses frame, which the wearer glances up into. The idea appears to be to provide the user with audio (via bone-conduction speakers in the arms of the glasses) as well as a color “glanceable” display for visual data.

Some of you may remember Brilliant Labs’ Monocle, a transparent, self-contained, and wireless clip-on display designed with experimentation in mind. The next device was Frame, which put things into a “smart glasses” form factor, with added features and abilities.

Halo is still in pre-release, so full SDK and hardware details haven’t been shared yet. But given Brilliant Labs’ history of fantastic documentation for their hardware and software, we’re pretty confident Halo will get the same treatment. Want to know more but don’t wish to wait? Checking out the tutorials and documentation for the earlier devices, Monocle and Frame, should give you a pretty good idea of what to expect.