An interesting finding in fields like computer science is that much of what is advertised as new and innovative was actually pilfered from old research papers submitted to ACM and others. Which is not to say that this is necessarily a bad thing, as many of such ideas were not practical at the time. Case in point the new branch predictor in AMD’s Zen 5 CPU architecture, whose two-block ahead design is based on an idea coined a few decades ago. The details are laid out by [George Cozma] and [Camacho] in a recent article, which follows on a recent interview that [George] did with AMD’s [Mike Clark].

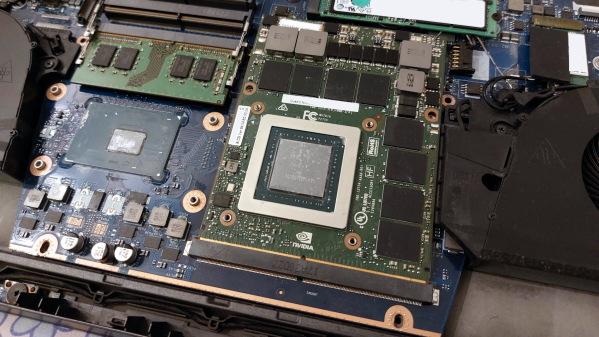

The 1996 ACM paper by [André Seznec] and colleagues titled “Multiple-block ahead branch predictors” is a good start before diving into [George]’s article, as it will help to make sense of many of the details. The reason for improving the branch prediction in CPUs is fairly self-evident, as today’s heavily pipelined, superscalar CPUs rely heavily on branch prediction and speculative execution to get around the glacial speeds of system memory once past the CPU’s speediest caches. While predicting the next instruction block after a branch is commonly done already, this two-block ahead approach as suggested also predicts the next instruction block after the first predicted one.

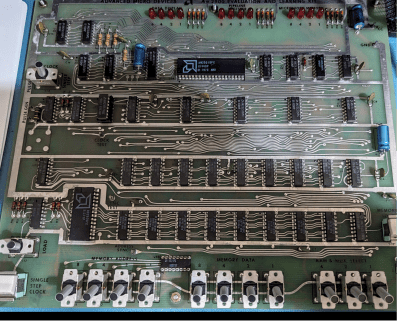

Perhaps unsurprisingly, this multi-block ahead branch predictor by itself isn’t the hard part, but making it all fit in the hardware is. As described in the paper by [Seznec] et al., the relevant components are now dual-ported, allowing for three prediction windows. Theoretically this should result in a significant boost in IPC and could mean that more CPU manufacturers will be looking at adding such multi-block branch prediction to their designs. We will just have to see how Zen 5 works once released into the wild.