Hobbyist electronics and robotics keep getting cheaper and easier to build, and one benefit of that is the possibility of affordable prosthetics. A great example is this transhumeral prosthesis from [Duy], his entry for this year’s Hackaday Prize.

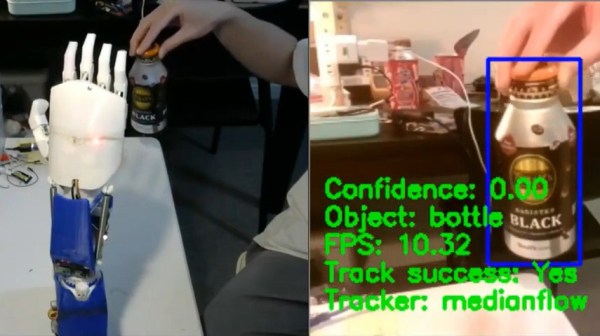

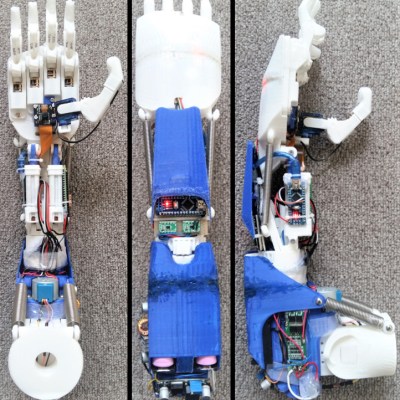

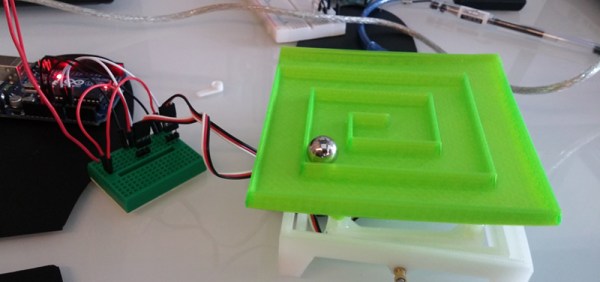

With ten degrees of freedom, including individual fingers, two axes for the thumb, and enough wrist movement for the hand to wave, this is already an impressive robotics build in its own right. The features don’t stop there, however. The entire prosthesis is modular and can be used in different configurations, and it’s all 3D printed for ease of customization and manufacturing. Along with the myoelectric sensor, which is how these prostheses are usually controlled, [Duy] also designed the hand to be controlled with computer vision and a brain-computer interface (BCI).

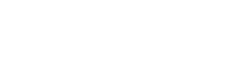

The palm of the hand has a camera embedded in it, and by passing that feed through CV software the hand can recognize and track objects the user moves it close to. This makes them easier to grab, since the gripping pattern each object requires can be programmed into the Raspberry Pi controlling the actuators. The alpha-wave BCI may not offer enough resolution to control each finger’s full range of movement, so this computer assistance can help the prosthesis feel more natural to the user.
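To make the idea of per-object gripping patterns concrete, here is a minimal Python sketch of how a recognized object label might be mapped to a set of finger positions. This is not [Duy]'s code: the finger names, the flexion values, the object labels, the `select_grip` function, and the confidence threshold are all hypothetical, chosen only to illustrate the lookup.

```python
from typing import Dict

# Hypothetical finger names; the real prosthesis's joint layout may differ.
FINGERS = ("thumb", "index", "middle", "ring", "pinky")

# Flexion per finger: 0.0 = fully open, 1.0 = fully curled (assumed scale).
OPEN_HAND: Dict[str, float] = {finger: 0.0 for finger in FINGERS}

# Illustrative grip table keyed by the label a CV model might report.
GRIP_PATTERNS: Dict[str, Dict[str, float]] = {
    # Cylindrical grasp: all fingers wrap around the object.
    "bottle": {"thumb": 0.7, "index": 0.8, "middle": 0.8, "ring": 0.8, "pinky": 0.8},
    # Tripod pinch: thumb, index, and middle hold the object; the rest tuck in.
    "pen": {"thumb": 0.6, "index": 0.6, "middle": 0.5, "ring": 1.0, "pinky": 1.0},
}


def select_grip(label: str, confidence: float,
                min_confidence: float = 0.6) -> Dict[str, float]:
    """Return the grip for a detected object, or an open hand as a safe default.

    The hand stays open when the detection is weak or the object is unknown.
    """
    if confidence < min_confidence:
        return dict(OPEN_HAND)
    return dict(GRIP_PATTERNS.get(label, OPEN_HAND))


if __name__ == "__main__":
    # A confident "pen" detection selects the tripod pinch.
    print(select_grip("pen", 0.9))
```

In a real build the returned values would still have to be translated into actuator commands, and the user's myoelectric or BCI signal would presumably decide when the selected grip actually closes.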

We’ve seen a fair number of creative custom prostheses here, like this one which uses AI to allow the user to play music with it, and this one which gives its user a tattoo machine for an appendage.
