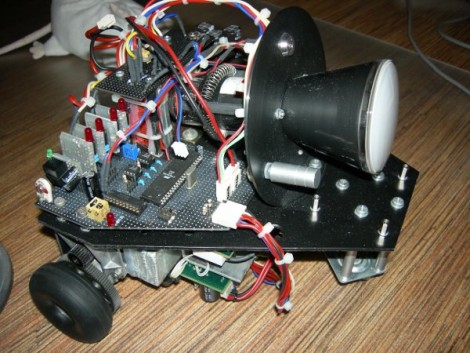

Culture Shock II, a robot by the Lawrence Tech team, first caught our eye because of its unique drive train. On closer inspection we found a very well-built robot with a ton of unique features.

The first thing we noticed about Culture Shock II was its giant 36″ wheels. The wheel assemblies are actually unicycles modified to be driven by the geared motors on the bottom. Such large wheels were chosen to keep the center of gravity well below the axle, which makes the robot very self-stabilizing. The robot also has two casters with a suspension system that act as dampers and stabilizers over shocks and inclines (pictured below).