The Quest 2 wireless VR headset by Oculus was recently released, and improves on the one-and-a-half-year-old Quest mainly in terms of computing power and screen resolution. But Oculus is owned by Facebook, a fact that Facebook is increasingly keen on making very clear. The emerging scene is one that looks familiar: a successful hardware device, and a manufacturer that wants to keep users in a walled garden while fully controlling how the device can be used. Oculus started out very differently, but the writing has been on the wall for a while. Rooting and jailbreaking the Quest 2 seem inevitable, but what will happen then?

Continue reading “As Facebook Tightens Their Grip On VR, Jailbreaking Looks More Likely”

Open Source VR Headset For $200

We’ve seen homemade VR headsets before, but they often depend on special software or aren’t really up to par with commercial products. Not so with Relativty, an open source project from [Max Coutte] and [Gabriel Combe]. [Max] says it best:

Relativty is not a consumer product. We made Relativty in my bedroom with a soldering iron and a 3D printer and we expect you to do the same: build it yourself.

Unlike some homebrew gear, Relativty has full SteamVR support. It also has experimental support for positional tracking that follows your body based on video input.

Want To Support Hacker-friendly Hardware Design? Follow Valve’s Example

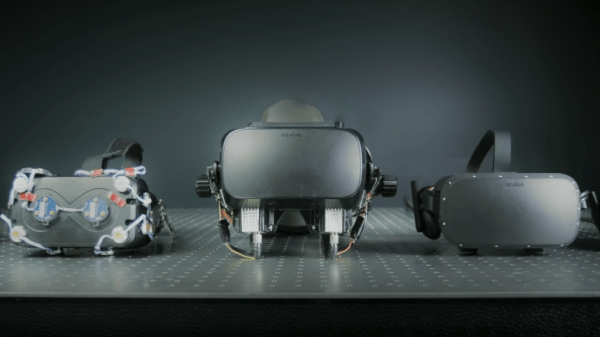

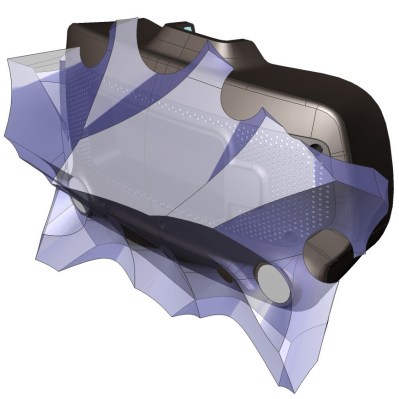

It’s been just over a year since Valve released Index, their flagship VR system, and it’s worth looking back at this GitHub repository as a fine example of how to provide supporting materials to a hacker-friendly hardware design. The image above shows off one of the hacker-friendly design elements: an empty space behind the visor, with a USB port off to the right, that exists for no reason other than to make it easier to mount and plug in whatever one might come up with. There’s more to it than that, however. If one wishes to provide supporting materials for a hardware design, one could certainly do worse than emulate Valve’s example.

The hardware repository contains not just CAD models of mod-friendly hardware pieces (as both high-resolution STEP models and STL files) but also 3D models of the sensor zones, so modders can ensure they avoid occluding any sensors with their creations. Examples are great, and one provided by Valve is the Booster, a hand controller add-on providing extra comfort for people with large hands or long thumbs. The model also doubles as a reference for designing attachments that will not interfere with any of the tracking or touch-sensitive surfaces of the controllers.

Being hacker-friendly doesn’t mean the hardware has no warranty, but it does mean that there is concrete guidance on what does or doesn’t risk voiding it. In the case of the Index hardware, the guidance is simple: “Anything that requires a T5 or smaller is not user serviceable.”

To us, the whole attitude of being hacker-friendly is exemplified by a statement about the headstrap, found about halfway down the page. The words “removing the headstrap is not recommended” are followed immediately by clear directions on how to do exactly that, demonstrating the kind of trust necessary to reduce barriers for add-ons and modifications. That is a great way to help foster experimentation, like this project for 1:1 mapping of physical elements to their VR counterparts, to make awesome spaceship cockpits.

Gaze Inside The Valve Index VR Headset In Detailed Teardown

Want to see exactly what is inside the $500 (headset-only price) Valve Index VR headset that was released last summer? Take a look at this teardown by [Ilja Zegars]. Not only does [Ilja] pull the device apart, but he identifies each IC and takes care to point out some of the more unusual hardware aspects, like the fancy diffuser on the displays and the multilayered lenses (which are much thinner than one might expect).

[Ilja] is no stranger to headset hardware design, and in addition to all the eye candy of high-res photographs, provides some insightful commentary to help make sense of them. The “tracking webs” pulled from the headset are an interesting bit: each is a long run of flexible PCB that connects four tracking sensors on its side of the head-mounted display back to the main PCB. These sensors are basically IR photodiodes, and detect the regular laser sweeps emitted by the base stations of Valve’s lighthouse tracking technology. [Ilja] also gives us a good look at the rod and spring mechanisms seen above that adjust the distance between the two screens.
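To give a feel for how a photodiode turns a laser sweep into tracking data, here is a minimal sketch of the timing idea in its simplest form: a beam sweeps at a constant rate, so the time between a reference moment and the instant the beam hits a sensor maps directly to an angle. The 60 Hz sweep rate, the function name, and the notion of a separate sync reference are all assumptions chosen for illustration. Newer base stations encode timing differently, so treat this as the principle only, not as how the Index does it.

```python
# Simplified sweep-timing model (illustrative assumption, not Valve's protocol).
ROTOR_HZ = 60.0  # assumed sweep rate, one full rotation per 1/60 s


def sweep_angle_deg(seconds_since_sync, rotor_hz=ROTOR_HZ):
    """Convert the delay between a sync reference and a sensor hit to an angle.

    The beam is assumed to rotate at a constant rate, so the fraction of the
    rotation period that has elapsed equals the fraction of 360 degrees swept.
    """
    period = 1.0 / rotor_hz
    if not 0.0 <= seconds_since_sync < period:
        raise ValueError("hit time must fall within one rotation period")
    return 360.0 * seconds_since_sync / period


if __name__ == "__main__":
    # A hit a quarter of the way through the rotation is a quarter turn.
    print(sweep_angle_deg(1.0 / 240.0))
```

With one angle per sweep axis from each sensor, and the known sensor layout on the headset, a solver can work back to the headset's pose. That part is well beyond this sketch.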

Want more? [Ilja] also has a gallery of high-resolution images available for those of you who fancy a closer look. Also, if you missed it, we covered an examination of the Index’s optical design as part of everything you probably didn’t know about field of view in head-mounted displays.

[via Twitter]

See The Science Behind VR Display Design, And What Makes A Problem Important

VR headsets are more and more common, but they aren’t perfect devices. That meant [Douglas Lanman] had a choice of problems to address when he joined Facebook Reality Labs several years ago. Right from the start, he perceived an issue no one seemed to be working on: the fact that the closer an object in VR is to one’s face, the less “real” it seems. There are several reasons for this, but the general way it presents is that the closer a virtual object is to the viewer, the more blurred and out of focus it appears to be. [Douglas] talks all about it and related issues in a great presentation from earlier this year (YouTube video) at the Electronic Imaging Symposium that sums up the state of the art for VR display technology while giving a peek at the kind of hard scientific work that goes into identifying and solving new problems.

[Douglas] chose to address seemingly-minor aspects of how the human eye and brain perceive objects and infer depth, and did so for two reasons: one was that no good solutions existed for it, and the other was that it was important because these cues play a large role in close-range VR interactions. Things within touching or throwing distance are a sweet spot for interactive VR content, and the state of the art wasn’t really delivering what human eyes and brain were expecting to see. This led to years of work on designing and testing varifocal and multi-focal displays which, among other things, were capable of presenting images in a variety of realistic focal planes instead of a single flat one. Not only that, but since the human eye expects things that are not in the correct focal plane to appear blurred (which is itself a depth cue), simulating that accurately was part of things, too.
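One way to see why close-range objects are the hard case is to express focus demand in diopters, which is simply one divided by the distance in meters. The sketch below compares where the eye expects to focus for an object against a display with a single fixed focal plane. The 2 m focal plane and the function names are hypothetical values picked for illustration, not figures from the talk.

```python
def diopters(distance_m):
    """Focus demand in diopters for something at the given distance in meters."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 1.0 / distance_m


def focus_mismatch(object_m, focal_plane_m):
    """Gap, in diopters, between an object's depth and a fixed focal plane."""
    return abs(diopters(object_m) - diopters(focal_plane_m))


if __name__ == "__main__":
    FOCAL_PLANE_M = 2.0  # hypothetical fixed focal plane of a headset
    for distance in (0.25, 0.5, 1.0, 2.0, 10.0):
        print(distance, focus_mismatch(distance, FOCAL_PLANE_M))
```

Because the scale is reciprocal, the mismatch grows quickly as an object approaches the face and stays small for anything far away, which fits the observation that things within touching distance are where a single flat focal plane falls short.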

The entire talk is packed full of interesting details and prototypes. If you have any interest in VR imaging and headset design and have a spare hour, watch it in the video embedded below.

Continue reading “See The Science Behind VR Display Design, And What Makes A Problem Important”

Aggressive Indoor Flying Thanks To SteamVR

With lockdown regulations sweeping the globe, many have found themselves spending altogether too much time inside with not a lot to do. [Peter Hall] is one such individual, with a penchant for flying quadcopters. With the great outdoors all but denied, he instead endeavoured to find a way to make flying inside a more exciting experience. We’d say he’s succeeded.

The setup involves using a SteamVR virtual reality tracker to monitor the position of a quadcopter inside a room. This data is then passed back to the quadcopter at a high rate, giving the autopilot fast, accurate data upon which to execute manoeuvres. PyOpenVR is used to do the motion tracking, and in combination with MAVProxy, sends the information over MAVLink back to the copter’s ArduPilot.
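One step any pipeline like this has to handle is the change of coordinate frame: OpenVR reports poses as a 3x4 matrix in a right-handed frame with +X right, +Y up, and -Z forward, while ArduPilot works in north-east-down. Here is a minimal sketch of that conversion for position only. The function name is ours, and treating the tracking space's forward direction as north is an assumption about how the room is set up, not something taken from [Peter]'s code.

```python
def openvr_pose_to_ned(pose):
    """Extract a north-east-down position from an OpenVR-style 3x4 pose matrix.

    `pose` is indexed pose[row][col]; the last column holds the translation in
    meters. ASSUMPTION: the tracking space's forward direction (-Z) is treated
    as north, so +X maps to east and +Y (up) maps to negative down.
    """
    x, y, z = pose[0][3], pose[1][3], pose[2][3]
    north = -z
    east = x
    down = -y
    return (north, east, down)


if __name__ == "__main__":
    # Identity rotation; tracker 1 m right, 2 m up, 3 m behind the origin.
    example_pose = [
        [1.0, 0.0, 0.0, 1.0],
        [0.0, 1.0, 0.0, 2.0],
        [0.0, 0.0, 1.0, 3.0],
    ]
    print(openvr_pose_to_ned(example_pose))
```

The resulting position would then be packed into a MAVLink message and streamed to the autopilot. VISION_POSITION_ESTIMATE from the common message set is one candidate, though the write-up doesn't say which message this project uses.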

While such a setup could be used to simply stop the copter crashing into things, [Peter] doesn’t like to do things by half measures. Instead, he took full advantage of the capabilities of the system, enabling the copter to fly aggressively in an incredibly small space.

It’s an impressive setup, and one that we’re sure could have further applications for those exploring the use of drones indoors. We’ve seen MAVLink used for nefarious purposes, too. Video after the break.

Continue reading “Aggressive Indoor Flying Thanks To SteamVR”

DIYing A VR Headset For Cheap

VR has been developing rapidly over the past decade, but headsets and associated equipment remain expensive. Without a killer app, the technology has yet to become ubiquitous in homes around the world. Wanting to experiment without a huge investment, [jamesvdberg] whipped up a low-cost headset for under $100 USD.

The build relies on Google-Cardboard-style optics, which are typically designed to work with a smartphone as the display. Instead, an 800×480 display intended for use with the Raspberry Pi is installed, hooked up over HDMI. An MPU6050 IMU is then installed to monitor the headset’s movements, hooked up to an Arduino Micro that passes this information to the attached PC. The rest of the build simply consists of cable management and power supply to all the hardware. It’s important to get this right, so that one doesn’t get tangled up by the umbilical when playing.
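For a sense of what turning raw MPU6050 readings into head orientation can involve, here is a minimal sketch of a complementary filter, a common way to blend the gyro's fast but drifting rate data with the accelerometer's noisy but drift-free gravity reading. This is our illustration, not [jamesvdberg]'s code: the function names, the axis convention, and the 0.98 blend factor are assumptions, and the scale factor is the one for the sensor's default ±250°/s gyro range.

```python
import math

GYRO_LSB_PER_DPS = 131.0  # MPU6050 raw counts per deg/s at the default range


def accel_pitch_roll(ax, ay, az):
    """Pitch and roll in degrees from the direction of gravity.

    Only the ratio of the axes matters, so raw counts work as well as g.
    The axis convention here is an assumption about how the IMU is mounted.
    """
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll


def complementary_step(angle_deg, gyro_raw, accel_angle_deg, dt, alpha=0.98):
    """Advance one angle estimate by dt seconds.

    Integrates the gyro rate for short-term accuracy, then pulls the result
    slightly toward the accelerometer angle to cancel long-term drift.
    """
    rate_dps = gyro_raw / GYRO_LSB_PER_DPS
    return alpha * (angle_deg + rate_dps * dt) + (1.0 - alpha) * accel_angle_deg


if __name__ == "__main__":
    pitch, roll = accel_pitch_roll(0, 16384, 16384)  # tilted 45 degrees in roll
    estimate = 0.0
    for _ in range(200):  # 2 s of stationary samples at 100 Hz
        estimate = complementary_step(estimate, 0, roll, 0.01)
    print(roll, estimate)
```

Gravity says nothing about rotation around the vertical axis, so yaw from a setup like this comes from gyro integration alone and will drift over time.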

While it won’t outperform a commercial unit, the device nevertheless offers stereoscopic VR at a low cost. For a very cheap and accessible VR experience that’s compatible with the PC, it’s hard to beat. Others have done similar work too. Video after the break.