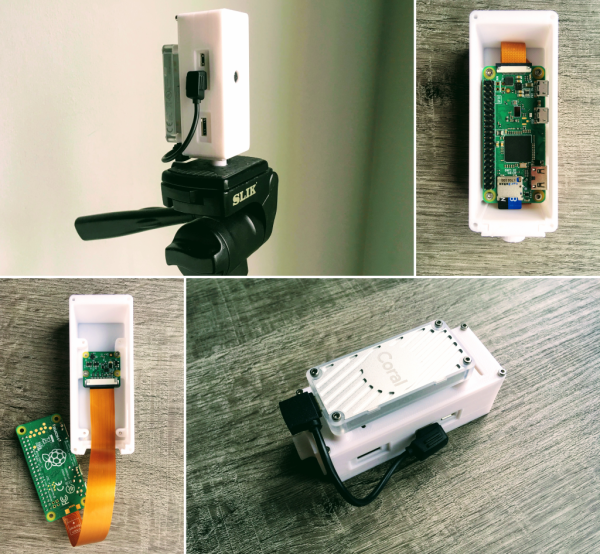

As machine learning and artificial intelligence become more widespread, so does the number of platforms available to anyone looking to experiment with the technology. Much like the single-board computer revolution of the last ten years, we’re now seeing a similar boom in hardware for machine learning. One example is Google Coral, a set of hardware specifically designed to take advantage of this new technology. It lacks support for certain hardware, though, so [Ricardo] set out to get one working with a Raspberry Pi Zero in this smart camera build based around Google Coral.

The project uses a Google Coral USB Accelerator, which houses an Edge TPU, as the basis for the machine learning. A complete image for the Pi Zero is available that sets up most of the system right away, including headless operation, and comes with a host of machine learning software such as OpenCV and pytesseract. By pairing a camera with the Edge TPU and the Raspberry Pi, [Ricardo] demonstrates its machine learning capabilities through several example projects, such as an automatic license plate detector and a mode that recognizes whether a face mask is being worn and how correctly it is being worn.
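
To give a rough idea of how a license plate detector like this might hand its results to pytesseract, here is a minimal sketch. It is not taken from [Ricardo]’s code: the function names are hypothetical, and it assumes the detection model reports boxes as normalized `(ymin, xmin, ymax, xmax)` values, a common convention that may not match the model used in the project.

```python
import re


def box_to_pixels(box, width, height):
    """Convert a normalized (ymin, xmin, ymax, xmax) box to pixel coordinates.

    The box layout is an assumption; check the output format of your model.
    Values are clamped so the crop never leaves the frame.
    """
    ymin, xmin, ymax, xmax = box
    x0 = max(0, min(width, int(xmin * width)))
    y0 = max(0, min(height, int(ymin * height)))
    x1 = max(0, min(width, int(xmax * width)))
    y1 = max(0, min(height, int(ymax * height)))
    return x0, y0, x1, y1


def clean_plate_text(raw):
    """Keep only uppercase letters and digits from raw OCR output."""
    return re.sub(r"[^A-Z0-9]", "", raw.upper())


def read_plate(frame, box):
    """Crop a detected plate out of a frame and run OCR on the crop.

    `frame` is expected to be a NumPy image array such as OpenCV returns.
    """
    import pytesseract  # imported here so the helpers above work without it

    height, width = frame.shape[:2]
    x0, y0, x1, y1 = box_to_pixels(box, width, height)
    crop = frame[y0:y1, x0:x1]
    # "--psm 7" asks Tesseract to treat the image as a single line of text.
    raw = pytesseract.image_to_string(crop, config="--psm 7")
    return clean_plate_text(raw)
```

In a setup like this, the Edge TPU would only be responsible for finding the plate; the text recognition itself runs on the Pi’s CPU, so cropping tightly before calling Tesseract keeps that step as cheap as possible.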

For those who want to get into machine learning and artificial intelligence, this is a great introductory project, since the cost of entry is so low with this hardware. All of the project code and examples are available on [Ricardo]’s GitHub page too. We could even imagine his license plate recognition software being used to augment this license plate reader, which uses a much more powerful camera.