As difficult as it is for a human to learn ambidexterity, it’s quite easy to program into a humanoid robot. After all, a robot doesn’t need to overcome years of muscle memory. Giving a one-handed robot ambidexterity, however, takes some more creativity. [Kelvin Gonzales Amador] managed to do this with his ambidextrous robot hand, capable of signing in either left- or right-handed American Sign Language (ASL).

The essential ingredient is a separate servo motor for each joint in the hand, which allows each joint to bend equally well backward and forward. Nothing physically marks one side as the palm or the back of the hand. To change between left- and right-handedness, a servo in the wrist simply turns the hand 180 degrees, the fingers flex in the other direction, and the transformation is complete. [Kelvin] demonstrates this in the video below by having the hand sign out the full ASL alphabet in both the right- and left-handed configurations.
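For a feel of how little the handedness swap asks of the software, here is a minimal Python sketch. The `Pose` layout and the `mirror` helper are our own assumptions for illustration, not taken from [Kelvin]'s code, and they treat every joint as a simple signed flex angle where zero means straight.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pose:
    # Hypothetical pose format: wrist rotation plus one signed flex angle per joint.
    wrist_deg: float
    joints_deg: tuple

def mirror(pose):
    """Switch handedness: turn the wrist half a turn and flex each joint the other way."""
    new_wrist = (pose.wrist_deg + 180.0) % 360.0
    flipped = tuple(-angle for angle in pose.joints_deg)
    return Pose(new_wrist, flipped)

right = Pose(0.0, (45.0, 60.0, 30.0))   # made-up angles for three joints
left = mirror(right)
print(left)
```

Mirroring twice lands back on the original pose, which is a handy sanity check for any real implementation.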

The tradeoff of a fully direct drive is that this takes 23 servo motors in the hand itself, plus a much larger servo for the wrist joint. Twenty small servo motors articulate the fingers, and three larger servos control joints within the hand. An Arduino Mega controls the hand with the aid of two PCA9685 PWM drivers. The physical hand itself is made out of 3D-printed PLA and nylon, painted gold for a more striking appearance.
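To get a sense of the bookkeeping involved, here is a rough Python sketch of how 24 servos might be spread across two 16-channel PCA9685 boards, and how an angle turns into a 12-bit PWM count. The servo numbering, the 50 Hz frame rate, and the 1000 to 2000 microsecond pulse range are assumptions typical of hobby servos rather than details from the build, and the wrist servo may well be driven some other way.

```python
CHANNELS_PER_BOARD = 16   # a PCA9685 has 16 PWM outputs
RESOLUTION = 4096         # 12-bit counter per PWM frame
PWM_HZ = 50               # assumed hobby-servo frame rate

def locate(servo_index):
    """Return (board, channel) for a servo numbered from 0 (hypothetical numbering)."""
    return divmod(servo_index, CHANNELS_PER_BOARD)

def angle_to_ticks(angle_deg, min_us=1000, max_us=2000, span_deg=180):
    """Convert an angle to the PWM on-time in counter ticks (assumed pulse range)."""
    angle = max(0, min(span_deg, angle_deg))           # clamp to the servo's travel
    pulse_us = min_us + (max_us - min_us) * angle / span_deg
    period_us = 1_000_000 / PWM_HZ                     # 20,000 us at 50 Hz
    return round(pulse_us * RESOLUTION / period_us)

for index in (0, 15, 16, 23):
    print(index, locate(index), angle_to_ticks(90))
```

With this numbering, servos 0 through 15 sit on the first board and 16 through 23 on the second, leaving eight channels spare.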

This isn’t the first language-signing robot hand we’ve seen, though it does forgo the second hand. To make this perhaps one of the least efficient machine-to-machine communication protocols, you could also equip it with a sign language translation glove.

robot hand: 15 Articles

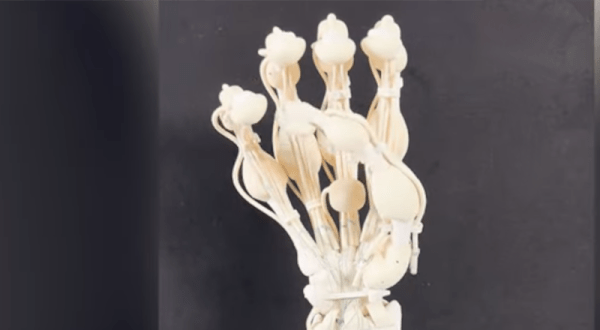

Robot Hand Has Good Bones

What do you get when you mix rigid and elastic polymers with a laser-scanning 3D printing technique? If you are researchers at ETH Zurich, you get robot hands with bones, ligaments, and tendons. In conjunction with a startup company, the process uses both fast-curing and slow-curing plastics, allowing parts with different structural properties to print. Of course, you could always assemble things from multiple kinds of plastics, but this new technique — vision-controlled jetting — allows the hands to print as one part. You can read the full paper from Nature or see the video below.

Wax with a low melting point encases the entire structure, acting as a support. The researchers remove the wax after the plastics cure.

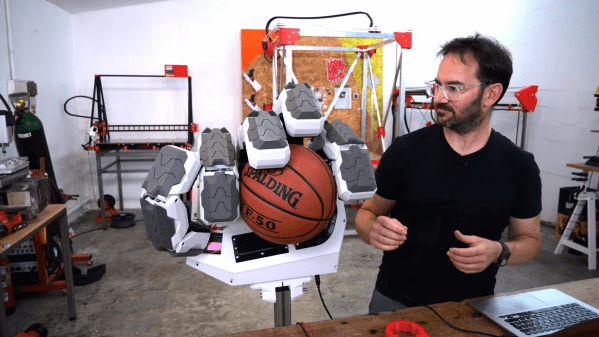

Big 3D Printed Hand Uses Big Servos, Naturally

[Ivan Miranda] isn’t afraid to dream big, and hopes to soon build a 3D printed giant robot he can ride around on. As the first step towards that goal, he’s built a giant printed hand big enough to hold a basketball.

The hand has fingers with several jointed segments, inspired by those wooden hand models sold as home decor at IKEA. The fingers are controlled via a toothed belt system, with two beefy 11 kg servos responsible for flexing each individual finger joint. A third 25 kg servo flexes the finger as a whole. [Ivan] does a good job of hiding the mechanics and wiring inside the structure of the hand itself, making an attractive robot appendage.

As with many such projects, control is where things get actually difficult. It’s one thing to make a robot hand flex its fingers in and out, and another thing to make it move in a useful, coordinated fashion. Regardless, [Ivan] is able to have the hand grip various objects, in part due to the usefulness of the hand’s opposable thumb. Future plans involve adding positional feedback to improve the finesse of the control system.

Building a good robot hand is no mean feat, and it remains one of the challenges behind building capable humanoid robots. Video after the break.

Silicone-Slapping Servos Solve Simon Says

Most modern computer games have a clearly defined end, but many classics like Pac-Man and Duck Hunt can go on indefinitely, limited only by technical constraints such as memory size. One would think that the classic electronic memory game Simon should fall into that category too, but with most humans struggling even to reach level 20 it’s hard to be sure. [Michael Schubart] was determined to find out if there was in fact an end to the latest incarnation of Simon and built a robot to help him in his quest.

The Simon Air, as the newest version is known, uses motion sensors to detect hand movements, enabling no-touch gameplay. [Michael] therefore made a system with servo-actuated silicone hands that slap the motion sensors. The tone sequence generated by the game is detected by light-dependent resistors that sense which of the segments lights up; a Raspberry Pi keeps track of the sequence and replays it by driving the servos.
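The watch-then-replay loop can be sketched in a few lines of Python. Everything here is an assumption for illustration: the sample format (one brightness reading per segment), the threshold, the idea that a higher reading means brighter, and the `slap` callback standing in for whatever drives the servos. None of it is taken from [Michael]'s code.

```python
def lit_segment(readings, threshold=600):
    """Return the index of the brightest sensor if it clears the threshold, else None."""
    best = max(range(len(readings)), key=lambda i: readings[i])
    return best if readings[best] >= threshold else None

def decode_sequence(samples, threshold=600):
    """Collapse a stream of sensor samples into the order in which segments lit up."""
    sequence = []
    previous = None
    for readings in samples:
        current = lit_segment(readings, threshold)
        # Record a segment only on the sample where it first lights up.
        if current is not None and current != previous:
            sequence.append(current)
        previous = current
    return sequence

def replay(sequence, slap):
    """Call slap(segment) once per step; slap is a stand-in for the servo driver."""
    for segment in sequence:
        slap(segment)

dark = [100, 100, 100, 100]
samples = [dark, [900, 120, 100, 110], [880, 100, 100, 100], dark,
           [900, 100, 100, 100], dark, [100, 100, 850, 100], dark]
moves = decode_sequence(samples)
slapped = []
replay(moves, slapped.append)
print(moves)
```

Note that the dark gap between flashes is what lets the decoder tell a repeated segment from one long flash.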

We won’t spoil the ending, but [Michael] did find an answer to his question. An earlier version of the game was already examined with the help of an Arduino, although it apparently wasn’t fast enough to drive the game to its limits. If you think Simon can be improved you can always roll your own, whether from scratch or by hacking an existing toy.

Taking A Stroll Down Uncanny Valley With The Artificial Muscle Robotic Arm

Wikipedia says “The uncanny valley hypothesis predicts that an entity appearing almost human will risk eliciting cold, eerie feelings in viewers.” And yes, we have to admit that as incredible as it is, seeing [Automaton Robotics]’ hand and forearm move in almost human fashion is a bit on the disturbing side. Don’t just take our word for it, let yourself be fascinated and weirded out by the video below the break.

While the creators of the Artificial Muscles Robotic Arm are fairly quiet about how it works, browsing the [Automaton Robotics] YouTube Channel does shed some light on the matter. The arm and hand’s motion is made possible by artificial muscles which themselves are brought to life by water pressurized to 130 PSI (9 bar). The muscles themselves appear to be a watertight fiber weave, but these details are not provided. Bladders inside a flexible steel mesh, like finger traps?

[Automaton Robotics]’ aim is to eventually create a humanoid robot using their artificial muscle technology. The demonstration shown is very impressive, as the hand has the strength to lift a 7 kg (15.4 lb) dumbbell even though some of its strongest artificial muscles have not yet been installed.

A few years ago we ran a piece on Artificial Muscles which mentions pneumatic artificial muscles that contract when air pressure is applied, and it appears that [Automaton Robotics] has employed the same method with water instead. What are your thoughts? Please let us know in the comments below. Also, thanks to [The Kilted Swede] for this great tip! Be sure to send in your own tips, too!

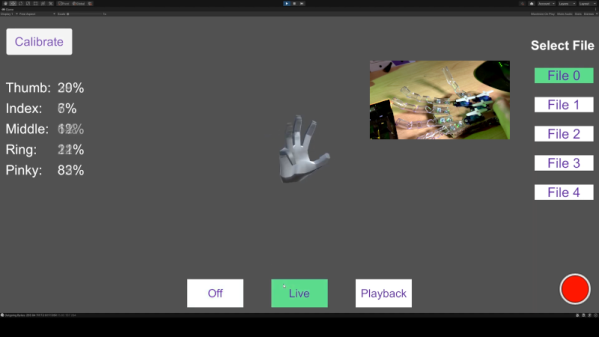

Leap Motion Controls Hands With No Glove

It isn’t uncommon to see a robot hand controlled with a glove to mimic a user’s motion. [All Parts Combined] has a different method. Using a Leap Motion controller, he can record hand motions with no glove and then play them back to the robot hand at will. You can see the project in the video below.

The project seems straightforward enough, but apparently, the Leap documentation isn’t the best. Since he worked it out, though, you might find the code useful.

An ESP8266 runs everything, although you could probably get by with less. The Leap provides more data than the hand has servos, so there was a bit of algorithm development.
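We don't know exactly how the project boils the tracking data down, but one plausible approach is to collapse each finger's joint angles into a single curl value per servo. The Python sketch below assumes a hypothetical input of three bend angles per finger and one servo per finger; the names and the 270 degree full-curl figure are ours, not from the project's code.

```python
FINGERS = ("thumb", "index", "middle", "ring", "pinky")

def finger_to_servo(joint_bends_deg, max_total_deg=270.0):
    """Collapse one finger's joint bend angles into a single servo angle (0 to 180)."""
    total = sum(joint_bends_deg)
    curl = max(0.0, min(1.0, total / max_total_deg))   # 0 = straight, 1 = fully curled
    return round(curl * 180)

def hand_to_servos(hand):
    """Map a dict of per-finger joint angles to one servo angle per finger."""
    return [finger_to_servo(hand[name]) for name in FINGERS]

# Made-up tracking frame: three joint bend angles per finger, in degrees.
frame = {
    "thumb": (10, 20, 15),
    "index": (90, 90, 90),
    "middle": (0, 0, 0),
    "ring": (45, 45, 45),
    "pinky": (30, 30, 30),
}
servo_angles = hand_to_servos(frame)
print(servo_angles)
```

Summing the joints throws away which knuckle is bending, which is fine for a hand whose fingers each curl as a single unit.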

We picked up a few tips about building flexible fingers using heated vinyl tubing. Never know when that’s going to come in handy — no pun intended. The cardboard construction isn’t going to be pretty, but a glove cover works well. You could probably 3D print something, too.

The Unity app will drive the hand live or can play back one of the five recorded routines. You can see how the record and playback work in the video.

This reminded us of another robot hand project, this one 3D printed. We’ve seen more traditional robot arms moving with a Leap before, too.

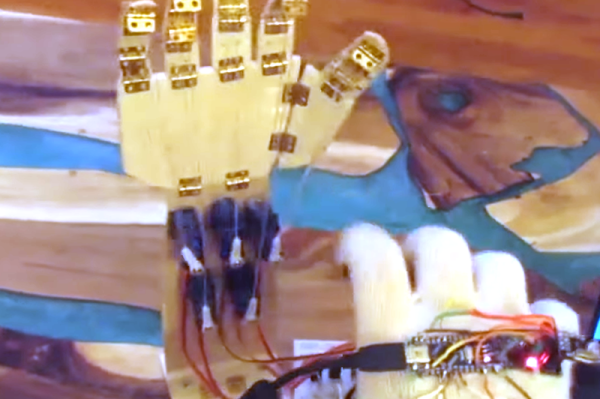

Haptic Glove Controls Robot Hand Wirelessly

[Miller] wanted to practice a bit with some wireless modules and wound up creating a robotic hand he could teleoperate with the help of a haptic glove. It looks highly reproducible, as you can see in the video below the break.

The glove uses an Arduino’s analog-to-digital converter to read some flex sensors. Commercial flex sensors are pretty expensive, so he experimented with some homemade sensors. The ones with tin foil and graphite didn’t work well, but using some bent can metal worked better despite not having good resolution.
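Turning a raw sensor reading into a finger position usually comes down to a two-point calibration. The Python sketch below shows the idea with made-up calibration counts on an assumed 10-bit (0 to 1023) ADC scale; it is not [Miller]'s code, and `adc_to_angle` is a name we chose for illustration.

```python
def adc_to_angle(raw, straight, bent, max_angle=180):
    """Linearly map a raw ADC count to 0..max_angle using two calibration readings."""
    if straight == bent:
        raise ValueError("calibration readings must differ")
    fraction = (raw - straight) / (bent - straight)
    fraction = max(0.0, min(1.0, fraction))   # clamp readings outside the calibrated range
    return round(fraction * max_angle)

# Made-up calibration: 300 counts with the finger straight, 724 fully bent.
half_bent = adc_to_angle(512, straight=300, bent=724)
print(half_bent)
```

The same function works if the sensor's reading falls as the finger bends, since only the two calibration points set the direction. A sensor with poor resolution shows up here as only a handful of distinct counts between the two points, so the servo moves in coarse steps.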
