Generally, when we’re looking to build something that moves, we reach for motors, servos, or steppers, which are ultimately all variations on the same concept. But there are other ways to produce motion. As [Jamie Matthews] demonstrates, Nitinol wire can be another way to get things moving.

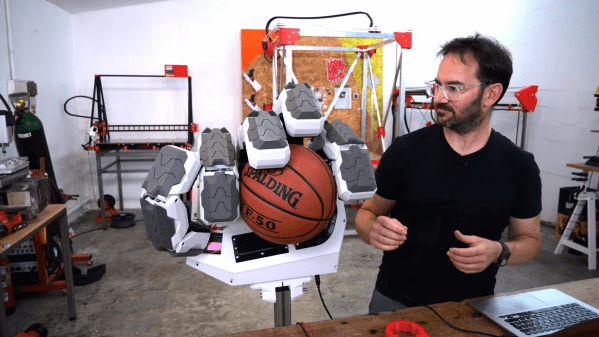

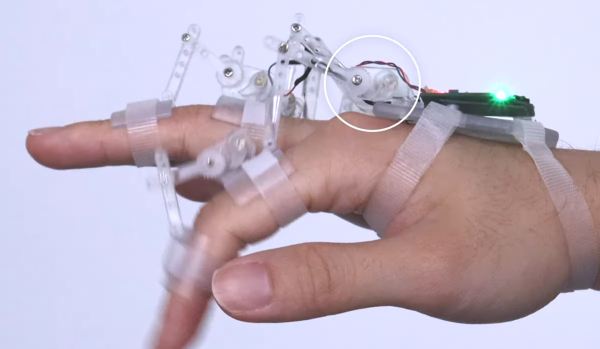

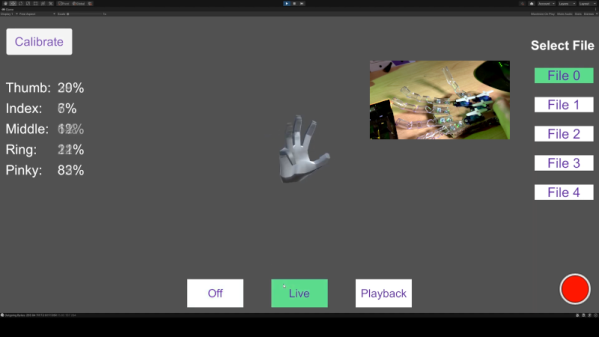

Nitinol is a nickel-titanium alloy, often sold in wire form as “memory wire” because it can remember a shape it was previously set to and return to that shape when heated past its transition temperature. [Jamie] uses this property to build a simple hand actuated by pieces of wire sourced from Amazon. It’s a neat approach, as it goes some way toward mimicking how our own hands are moved by tendons.

[Jamie] does a great job of explaining how to get started with Nitinol and how it works in a practical sense. We’ve seen it put to some wacky uses before, too, such as the basis for an airless tire.