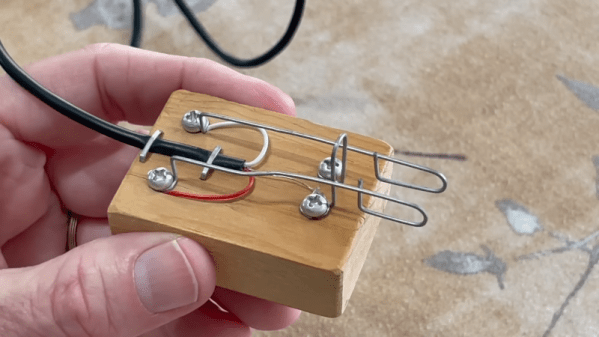

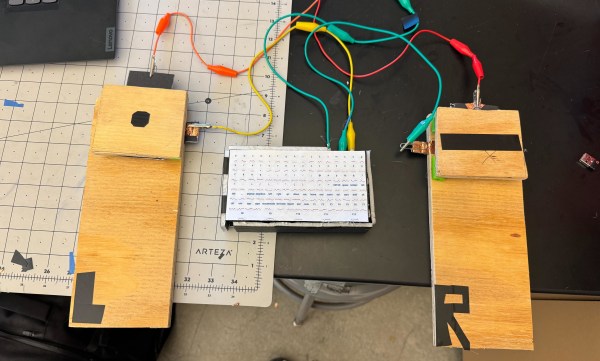

Even if you don’t know Morse code, you probably picture a radio operator tapping away on a “key.” These were, and still are, in use. A key is little more than a switch built to be comfortable in your hand and spring loaded so the switch makes when you push down and breaks when you let up. Many modern operators prefer using paddles along with an electronic keyer, but paddles can be expensive. [N1JI] didn’t pay much for his, though. He took paperclips, a block of wood, and some other scrap bits and made his own paddles. You can see the results in the video below.

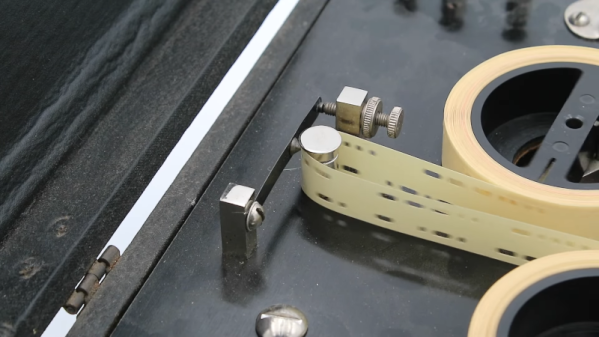

When you use a straight key, you are responsible for making the correct length of dits and dahs yourself. Fast operators eventually moved to a “bug,” which is a type of paddle that you push one way to make a dash (still with your own sense of timing). Push it the other way, however, and a mechanical oscillator sends a series of uniform dots for as long as you hold the paddle over.
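To put numbers on "correct length," here is a minimal sketch of the conventional Morse timing rules: a dah lasts three dits, elements within a character are separated by one dit of silence, and the "PARIS" convention makes one dit last 1.2 / WPM seconds. The function names are made up for illustration; the article itself doesn't specify any timing.

```python
def dit_seconds(wpm: float) -> float:
    """Length of one dit in seconds, using the conventional PARIS standard."""
    return 1.2 / wpm


def element_durations(pattern: str, wpm: float) -> list[tuple[bool, float]]:
    """Turn a string of '.' and '-' into (key_down, seconds) pairs for one character."""
    unit = dit_seconds(wpm)
    timeline = []
    for i, symbol in enumerate(pattern):
        if i > 0:
            # One dit of silence between elements of the same character.
            timeline.append((False, unit))
        # A dit holds the key down for one unit, a dah for three.
        timeline.append((True, unit if symbol == "." else 3 * unit))
    return timeline


if __name__ == "__main__":
    # The letter "N" is dah-dit; print its key-down/key-up schedule at 12 WPM.
    for key_down, seconds in element_durations("-.", 12):
        print("down" if key_down else "up  ", round(seconds, 3))
```

With a straight key, your wrist has to hit all of these durations. A bug takes over only the dit timing.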

Modern paddles tend to work with electronic “iambic” keyers. As with a bug, you push one way to make dots and the other way to make dashes. However, the dashes are also perfectly timed, and you can squeeze both paddles together to make alternating dots and dashes. It takes a little practice, but it results in more uniform code, and most people can send faster with paddles and a keyer than with a straight key.
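The squeeze behavior is easier to see in code. The following is a simplified sketch, not how any particular keyer is implemented: it assumes the paddles are sampled once per element, and that a squeeze from rest starts with a dot (real keyers typically start with whichever paddle closed first). The function name and input format are hypothetical.

```python
def iambic_elements(samples):
    """Simulate simplified iambic keying.

    samples: iterable of (dit_pressed, dah_pressed) booleans, one per element slot.
    Returns the string of '.' and '-' elements the keyer would send.
    """
    sent = []
    last = None  # last element sent in the current run, or None when idle
    for dit_pressed, dah_pressed in samples:
        if dit_pressed and dah_pressed:
            # Squeeze: alternate with the previous element (assumed to start on a dot).
            element = "-" if last == "." else "."
        elif dit_pressed:
            element = "."
        elif dah_pressed:
            element = "-"
        else:
            # Both paddles released: the keyer goes idle.
            last = None
            continue
        sent.append(element)
        last = element
    return "".join(sent)


if __name__ == "__main__":
    # Press the dash paddle, then squeeze and hold for three more elements.
    print(iambic_elements([(False, True), (True, True), (True, True), (True, True)]))
```

Starting on the dash paddle and then squeezing yields dash, dot, dash, dot, which is the letter C, sent with one hand motion instead of four separate key presses.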