Although plenty of us have our preferred language for coding, whether it’s C for its hardware access, Python for its usability, or Fortran for its mathematical prowess, not every language is built to solve a particular kind of problem. Some, like Whitespace or Chicken, are built as thought experiments or challenges and aren’t used for serious programming. A few languages sit in the gray area between these two camps, and one example is MOUSE, which can now be run on an Arduino.

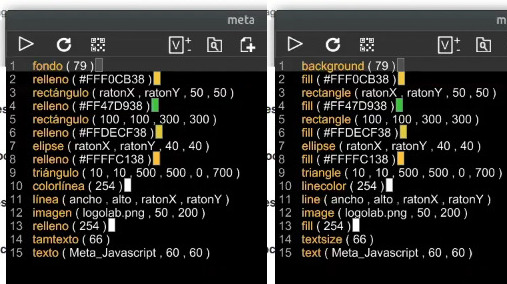

Although MOUSE was originally meant to be a minimalist language for computers of the late 70s and early 80s with limited memory (even for the era), its syntax looks more like that of a modern esoteric language, and a Python developer would arguably need a bit of time to get used to it. It’s stack-based, for a start, and uses Reverse Polish Notation, so operands come before the operator that acts on them. The major difference, though, is that programs are processed a single character at a time, with each character corresponding to a specific instruction. There have been some changes in the computing world since the 80s, so [Ivan]’s version of MOUSE differs slightly from the original language, but in the end he fits an interpreter, a line editor, graphics primitives, and peripheral drivers into just 2 KB of SRAM and 32 KB of flash so it can run on an ATmega328P.
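For readers who haven’t used a stack-based language, here is a minimal Python sketch of the general idea: read one character at a time, push operands onto a stack, and have each operator pop its two arguments. This is not MOUSE’s actual instruction set, nor is it [Ivan]’s interpreter; the `run` function and the tiny set of instructions it accepts (single digits plus `+`, `-`, and `*`) are assumptions made purely for illustration.

```python
def run(program: str) -> list[int]:
    """Evaluate a toy single-character RPN program and return the final stack.

    Hypothetical mini-language for illustration only, not real MOUSE.
    """
    stack: list[int] = []
    # Each operator is a single character that consumes two stack values.
    ops = {
        "+": lambda a, b: a + b,
        "-": lambda a, b: a - b,
        "*": lambda a, b: a * b,
    }
    for ch in program:
        if ch in "0123456789":
            # A digit pushes its own value onto the stack.
            stack.append(int(ch))
        elif ch in ops:
            # Pop the right operand first, then the left one.
            b = stack.pop()
            a = stack.pop()
            stack.append(ops[ch](a, b))
        elif ch.isspace():
            # Whitespace is ignored in this toy language.
            continue
        else:
            raise ValueError(f"unknown instruction: {ch!r}")
    return stack
```

With this sketch, `run("34+2*")` pushes 3 and 4, adds them, pushes 2, and multiplies, leaving `[14]` on the stack. The same expression in infix notation would be `(3 + 4) * 2`, but in Reverse Polish Notation no parentheses are needed.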

There are some other features here as well, including support for PS/2 devices, video output, and the ability to save programs to the internal EEPROM. It’s an impressive setup for a language that doesn’t get much attention at all, but certainly one that threads the needle between being useful and being interesting in its own right. Of course, if a language where “Hello world” is human-readable is not esoteric enough, there are others that may offer more of a challenge.

Image Credit: Maxbrothers2020