When I was a kid we used to go to a place we just called “The Book Barn.” The name was apt: it was just a barn filled with old books. It smelled about like you’d expect a barn filled with old books to smell, and it was a fantastic place to browse, with all of the charm of an old library and none of the organization. On one visit I found a stack of old magazines, including a couple of issues of Popular Mechanics from the late 1940s. The cover art always looked like pulp science fiction, with a pipe-smoking father coming home from work to his suburban house in a flying car.

But the issue that caught my eye had a cover showing a couple of rugged men in a Jeep, bouncing around the desert with a Geiger counter. “Build your own uranium detector,” the caption implored, suggesting that the next gold rush was underway and that anyone could get in on the action. The world was a much more optimistic place back then, looking forward as it was to a nuclear-powered future with electricity “too cheap to meter.” The fact that sudden death in an expanding ball of radioactive plasma was potentially the other side of that coin never seemed to matter that much; one tends to abstract away realities that are too big to comprehend.

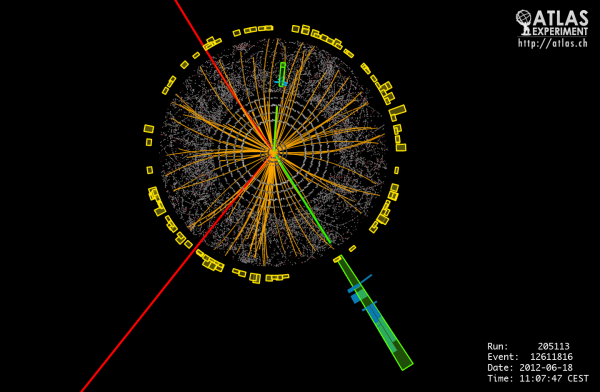

Things are more complicated now, but uranium remains important. Not only is it needed to build new nuclear weapons and maintain the existing stockpile, it’s also an important part of the mix of non-fossil-fuel electricity options we’re going to need going forward. And getting it out of the ground and turned into useful materials, including its radioactive offspring plutonium, is anything but easy.
